ASTM F3263-17

Standard Guide for Packaging Test Method Validation

SIGNIFICANCE AND USE

4.1 Addressing consensus standards with inter-laboratory studies (ILS) and methods specific to an organization. Test methods need to be validated in many cases, in order to be able to rely on the results. This has to be done at the organization performing the tests but is also performed in the development of standards in inter-laboratory studies (ILS), which are not substitutes for the validation work to be performed at the organization performing the test.

4.1.1 Validations at the Testing Organization—Validations at the test-performing organization include planning, executing, and analyzing the studies. Planning should include a description of the scope of the test method, which includes the description of the test equipment as well as the measurement range of samples it will be used for, rationales for the choice of samples, the number of samples, as well as rationales for the choice of methodology.

4.1.2 Objective of ILS Studies—ILS studies (per E691-14) are not focused on the development of test methods but rather on gathering the information needed for a test method precision statement after the development stage has been successfully completed. The data obtained in the interlaboratory study may indicate, however, that further effort is needed to improve the test method. Precision in this case is defined as the repeatability and reproducibility of a test method, commonly known as gage R&R. For interlaboratory studies, repeatability deals with the variation associated with one appraiser operating a single test system at one facility, whereas reproducibility is concerned with variation between labs, each with its own unique test system. It is important to understand that if an ILS is conducted in this manner, reproducibility between appraisers and test systems in the same lab is not assessed.
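To make the distinction between repeatability and reproducibility concrete, here is a minimal Python sketch that estimates both standard deviations for one material from a balanced set of laboratory results. It follows the general form of the balanced-design estimates used in interlaboratory practices such as ASTM E691; the function name, the data layout, and the example numbers are assumptions made for illustration, and the practice itself remains the authoritative procedure.

```python
import math
from statistics import mean, stdev, variance


def precision_statistics(cells):
    """Estimate (s_r, s_R) for one material.

    cells: list of lists, one inner list of replicate results per laboratory.
    Assumes a balanced design (same number of replicates in every lab).
    """
    n = len(cells[0])
    if not (len(cells) >= 2 and n >= 2) or any(len(c) != n for c in cells):
        raise ValueError("need at least 2 labs, each with the same number (2 or more) of replicates")

    # Repeatability: square root of the average within-laboratory variance.
    s_r = math.sqrt(mean([variance(c) for c in cells]))

    # Standard deviation of the laboratory averages.
    s_xbar = stdev([mean(c) for c in cells])

    # Reproducibility: between-laboratory plus within-laboratory variation.
    # By convention it is not reported as smaller than s_r.
    s_R = max(s_r, math.sqrt(s_xbar**2 + s_r**2 * (n - 1) / n))
    return s_r, s_R


# Hypothetical seal-strength results (3 labs, 2 replicates each).
labs = [[10.0, 10.2], [10.4, 10.6], [9.8, 10.0]]
s_r, s_R = precision_statistics(labs)
print(f"repeatability s_r = {s_r:.3f}, reproducibility s_R = {s_R:.3f}")
```

Because each laboratory contributes a single appraiser and test system in this layout, the sketch says nothing about variation between appraisers or test systems within one lab, which is the limitation noted in 4.1.2.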

4.1.3 Overview of the ILS Process—Essentially the ILS process consists of planning, executing, and analyzing studies that are meant to assess the precision of a tes...

SCOPE

1.1 This guide provides information to clarify the process of validating packaging test methods specific for an organization utilizing them as well as through inter-laboratory studies (ILS), addressing consensus standards with inter-laboratory studies (ILS) and methods specific to an organization.

1.1.1 ILS discussion will focus on writing and interpretation of test method precision statements and on alternative approaches to analyzing and stating the results.

1.2 This document provides guidance for defining and developing validations for both variable and attribute data applications.

1.3 This guide provides limited statistical guidance; however, this document does not purport to give concrete sample sizes for all packaging types and test methods. Emphasis is on statistical techniques effectively contained in reference documents already developed by ASTM and other organizations.

1.4 This standard does not purport to address all of the safety concerns, if any, associated with its use. It is the responsibility of the user of this standard to establish appropriate safety, health, and environmental practices and determine the applicability of regulatory limitations prior to use.

1.5 This international standard was developed in accordance with internationally recognized principles on standardization established in the Decision on Principles for the Development of International Standards, Guides and Recommendations issued by the World Trade Organization Technical Barriers to Trade (TBT) Committee.

General Information

- Status: Published

- Publication Date: 14-Dec-2017

- Technical Committee: F02 - Primary Barrier Packaging

- Drafting Committee: F02.50 - Package Design and Development

Relations

- Effective Date: 01-Nov-2023

- Effective Date: 01-Oct-2023

- Effective Date: 01-Apr-2022

- Effective Date: 01-May-2020

- Effective Date: 01-Oct-2018

- Effective Date: 15-Aug-2018

- Effective Date: 01-Oct-2017

- Effective Date: 01-Oct-2017

- Effective Date: 01-Jun-2017

- Effective Date: 01-Oct-2014

- Effective Date: 01-May-2014

- Effective Date: 01-Apr-2014

- Effective Date: 15-Nov-2013

- Effective Date: 15-Nov-2013

- Effective Date: 15-Nov-2013

Overview

ASTM F3263-17: Standard Guide for Packaging Test Method Validation provides a structured framework for validating packaging test methods, both within individual organizations and through inter-laboratory studies (ILS). The standard addresses the critical need for reliable, validated test methods in industries such as pharmaceuticals and medical devices, where packaging integrity is essential for product safety and regulatory compliance.

Test method validation ensures that results are accurate, repeatable, and reproducible, reducing risk and building trust in data derived from packaging tests. The guide outlines best practices in planning, executing, and analyzing validation studies, offering direction for both quantitative (variable) and qualitative (attribute) data applications.

Key Topics

- Intra-Organization Validation: Organizations must plan and perform their own test method validations. This includes defining the scope, test equipment, measurement ranges, sample plans, selection rationale, and methodologies. Validation at the organization level is essential to confirm suitability to specific processes and products.

- Inter-Laboratory Studies (ILS): ILS are conducted to evaluate method precision, focusing on repeatability (within-lab variation) and reproducibility (between-lab variation). While ILS support development of consensus standards and provide a precision statement for test methods, they do not replace in-house validation.

- Risk Assessment: A robust risk analysis, such as Failure Modes and Effects Analysis (FMEA), is recommended to determine required validation rigor. Different packaging tests (e.g., label adhesion versus sterile barrier integrity) carry varied criticality and risk profiles.

- Precision and Bias: The guide discusses the importance of generating meaningful precision statements using statistical approaches and provides guidance on interpreting data for both variable and attribute test methods.

- Validation for Variable and Attribute Data: The standard distinguishes between variable (quantitative) tests, which yield numerical results, and attribute (qualitative) tests, which yield pass/fail outcomes. It provides specific considerations and best practices for the validation of each data type.
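For attribute (pass/fail) methods, the standard's terminology defines alpha risk as the probability of rejecting a conforming unit and beta risk as the probability of accepting a nonconforming unit. The sketch below shows one simple way to tally the observed false alarm and escape rates from a set of trials with known truth. The function name and the example counts are hypothetical, and the sketch reports observed proportions only; it does not apply the confidence bounds or acceptance thresholds that a real validation plan would set.

```python
def attribute_error_rates(trials):
    """Return (false_alarm_rate, escape_rate) from attribute trials.

    trials: iterable of (is_conforming, accepted) boolean pairs, one per trial.
    false_alarm_rate: fraction of conforming units that were rejected (alpha).
    escape_rate: fraction of nonconforming units that were accepted (beta).
    """
    conforming = nonconforming = false_alarms = escapes = 0
    for is_conforming, accepted in trials:
        if is_conforming:
            conforming += 1
            if not accepted:
                false_alarms += 1
        else:
            nonconforming += 1
            if accepted:
                escapes += 1
    if conforming == 0 or nonconforming == 0:
        raise ValueError("need both conforming and nonconforming trials")
    return false_alarms / conforming, escapes / nonconforming


# Hypothetical study: 10 trials on conforming units, 5 on nonconforming units.
trials = (
    [(True, True)] * 9 + [(True, False)]      # one false alarm
    + [(False, False)] * 4 + [(False, True)]  # one escape
)
alpha, beta = attribute_error_rates(trials)
print(f"observed false alarm rate = {alpha:.2f}, observed escape rate = {beta:.2f}")
```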

Applications

The ASTM F3263-17 standard is broadly applicable across industries where packaging quality impacts product efficacy and safety, including:

- Medical Device and Pharmaceutical Sectors: Validation of test methods to meet regulatory requirements for packaging that maintains sterility and product integrity.

- Consumer Goods and Food Packaging: Ensuring packaging processes are robust, preventing contamination and extending shelf life.

- Research and Development: Supporting the development of new packaging solutions with validated test methods to demonstrate compliance and performance.

- Quality Assurance Programs: Building reliable QA frameworks by ensuring all test methods used for packaging assessment are fully validated and documented.

- Regulatory Submissions and Audits: Meeting ISO and ASTM requirements for validated test methods when presenting compliance evidence to notified bodies or regulatory authorities.

Related Standards

ASTM F3263-17 references and complements several key standards relevant to packaging test method validation and precision studies:

- ASTM E177 - Practice for Use of the Terms Precision and Bias in ASTM Test Methods

- ASTM E691 - Practice for Conducting an Interlaboratory Study to Determine the Precision of a Test Method

- ISO 11607-1 and ISO 11607-2 - Requirements and guidance for packaging for terminally sterilized medical devices

- ASTM E2282 - Guide for Defining the Test Result of a Test Method

- ASTM E2782 - Guide for Measurement Systems Analysis (MSA)

- FMEA Standards (e.g., SAE J1739, AIAG FMEA-3, MIL-STD-1629A) - Risk management tools complementing validation planning

By following the guidance set forth in ASTM F3263-17, organizations can enhance their packaging validation processes, ensuring that test methods deliver reliable, repeatable results that meet industry and regulatory expectations.


Frequently Asked Questions

ASTM F3263-17 is a guide published by ASTM International. Its full title is "Standard Guide for Packaging Test Method Validation".

ASTM F3263-17 is classified under the following ICS (International Classification for Standards) categories: 55.020 - Packaging and distribution of goods in general. The ICS classification helps identify the subject area and facilitates finding related standards.

ASTM F3263-17 has inter-standard links to the following standards: ASTM E2282-23, ASTM F2097-23, ASTM E456-13a(2022)e1, ASTM F17-20, ASTM F17-18a, ASTM F17-18, ASTM E456-13A(2017)e3, ASTM E456-13A(2017)e1, ASTM F17-17, ASTM E2282-14, ASTM E177-14, ASTM F2097-14, ASTM E456-13a, ASTM E456-13ae3, ASTM E456-13ae2. Understanding these relationships helps ensure you are using the most current and applicable version of the standard.


Standards Content (Sample)

This international standard was developed in accordance with internationally recognized principles on standardization established in the Decision on Principles for the Development of International Standards, Guides and Recommendations issued by the World Trade Organization Technical Barriers to Trade (TBT) Committee.

Designation: F3263 − 17

Standard Guide for Packaging Test Method Validation

This standard is issued under the fixed designation F3263; the number immediately following the designation indicates the year of original adoption or, in the case of revision, the year of last revision. A number in parentheses indicates the year of last reapproval. A superscript epsilon (ε) indicates an editorial change since the last revision or reapproval.

INTRODUCTION

The tests often used by engineers in regulated industries such as medical device or pharmaceuticals are well known and referenced in both ASTM and ISO literature. However, questions around the validation of these tests are not nearly as well understood. Questions that often arise are: how should one validate these test methods? Should they be validated at all? To what degree should they be validated?

One answer to this is the guidance provided by ISO 11607-1 and ISO 11607-2 where it is stated that “all test methods used to show compliance with this part of ISO 11607 shall be validated and documented.”

Unfortunately, this does not answer all questions as little is provided in how to demonstrate conformance to these requirements. This is due to the fact that there needs to be a great deal of flexibility in how these test methods are used. Not all circumstances and test methods require the same degree of scrutiny. Therefore, when assessing when, why, and how a test method should be validated, it is critical to keep this flexibility in mind and use the best tools available to answer the above questions appropriately for a given situation. A robust risk assessment process is arguably the best tool for determining the risk associated with a particular design element being tested. For example, there are clear differences in the risk associated with testing the adhesion of a label versus testing the integrity of a sterile barrier when viewed from the perspective of patient safety. If a label is missing, the product would be discarded, and a new one that is properly labeled chosen. However, if the sterile barrier has been compromised due to a seal breach or pinhole in the web of the material, this may go undetected, a contaminated device may be used, and the patient may become infected.

The typical process for determining the level of risk associated with medical device packaging components is the failure mode effects analysis tool, commonly referred to as an FMEA. The FMEA process is intended to identify potential failure modes for a product or process, to assess the risk associated with those failure modes, to rank the issues in terms of importance, and to identify and document mitigation strategies that address the most serious concerns. There are many guides and standards available that describe this process, such as SAE J1739, AIAG FMEA-3 and MIL-STD-1629A. The present guide will be helpful in proposing ways to go about defining which approaches to test method validation will work best in a given application based on the associated risk, and will also provide guidance on the execution of the validation.
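As an illustration of the "rank the issues in terms of importance" step, the following Python sketch ranks failure modes by a risk priority number (RPN), the product of severity, occurrence, and detection scores that is commonly used in FMEA worksheets. This scoring scheme is an assumption brought in for illustration and is not prescribed by the text above; the class name, the 1 to 10 scales, and every score shown are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    name: str
    severity: int    # assumed scale: 1 (negligible) to 10 (hazardous)
    occurrence: int  # assumed scale: 1 (rare) to 10 (frequent)
    detection: int   # assumed scale: 1 (almost always detected) to 10 (undetectable)

    @property
    def rpn(self) -> int:
        # Risk priority number: higher means higher priority for mitigation.
        return self.severity * self.occurrence * self.detection


def rank_by_risk(modes):
    """Return failure modes sorted from highest to lowest RPN."""
    return sorted(modes, key=lambda m: m.rpn, reverse=True)


# Hypothetical scores for the two examples discussed in the introduction.
modes = [
    FailureMode("label missing", severity=3, occurrence=4, detection=2),
    FailureMode("sterile barrier seal breach", severity=10, occurrence=2, detection=8),
]
for mode in rank_by_risk(modes):
    print(f"{mode.name}: RPN = {mode.rpn}")
```

With these illustrative scores the seal breach ranks first, which mirrors the introduction's point that a sterile barrier test warrants more validation rigor than a label adhesion test.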

1. Scope

1.1 This guide provides information to clarify the process of validating packaging test methods specific for an organization utilizing them as well as through inter-laboratory studies (ILS), addressing consensus standards with inter-laboratory studies (ILS) and methods specific to an organization.

1.1.1 ILS discussion will focus on writing and interpretation of test method precision statements and on alternative approaches to analyzing and stating the results.

1.2 This document provides guidance for defining and developing validations for both variable and attribute data applications.

This test method is under the jurisdiction of ASTM Committee F02 on Primary Barrier Packaging and is the direct responsibility of Subcommittee F02.50 on Package Design and Development. Current edition approved Dec. 15, 2017. Published March 2018. DOI: 10.1520/F3263–17.

Copyright © ASTM International, 100 Barr Harbor Drive, PO Box C700, West Conshohocken, PA 19428-2959. United States

1.3 This guide provides limited statistical guidance; however, this document does not purport to give concrete sample sizes for all packaging types and test methods. Emphasis is on statistical techniques effectively contained in reference documents already developed by ASTM and other organizations.

1.4 This standard does not purport to address all of the safety concerns, if any, associated with its use. It is the responsibility of the user of this standard to establish appropriate safety, health, and environmental practices and determine the applicability of regulatory limitations prior to use.

1.5 This international standard was developed in accordance with internationally recognized principles on standardization established in the Decision on Principles for the Development of International Standards, Guides and Recommendations issued by the World Trade Organization Technical Barriers to Trade (TBT) Committee.

2. Referenced Documents

2.1 ASTM Standards:

E177 Practice for Use of the Terms Precision and Bias in ASTM Test Methods

E456 Terminology Relating to Quality and Statistics

E691 Practice for Conducting an Interlaboratory Study to Determine the Precision of a Test Method

E2282 Guide for Defining the Test Result of a Test Method

E2782 Guide for Measurement Systems Analysis (MSA)

F17 Terminology Relating to Primary Barrier Packaging

F2097 Guide for Design and Evaluation of Primary Flexible Packaging for Medical Products

2.2 ISO Standards:

ISO 11607-1: 2006/A1: 2014 Packaging for terminally sterilized medical devices—Part 1: Requirements for materials, sterile barrier systems, and packaging, Amendment 1

ISO/TS 16775 Packaging for terminally sterilized medical devices—Guidance on the application of ISO 11607-1 and ISO 11607-2

3. Terminology

3.1 Definitions of Terms Specific to This Standard:

3.1.1 accuracy, n—see E177.

3.1.2 alpha risk error (α), n—the probability that an inspector will reject a conforming unit. Also referred to as producers risk or type I error. For the purposes of this document this error type will be referred to as Alpha risk error.

3.1.3 appraiser, n—term used to identify individual(s) that will execute test method validation activities. May commonly also be referred to as appraisers or technicians.

3.1.4 as defined by team with rationale, n—the validation team determines a performance level or sample size with acceptance criteria. When a test method falls under this category another option may be no testing required.

3.1.5 attribute test method, n—tests that return a pass/fail output measurement on a characteristic that is either conforming or nonconforming. Variable measurement data treated as attribute also qualifies.

3.1.6 acceptable quality level (AQL), n—represents a level of quality that a sampling plan routinely accepts. Lots at or below the AQL are accepted at least 95% of the time. The AQL may be determined from the sampling plan’s Operating Characteristic (OC) Curve.

3.1.7 beta risk error (β), n—the probability that an inspector will accept a nonconforming unit. Also referred to as beta error (escape rate) or type II error. For the purposes of this document this error type will be referred to as Beta risk error or (β).

3.1.8 borderline samples, n—marginally passing or failing samples.

3.1.9 comparative test method, n—a test method that is used for comparing the means of two or more populations using a statistical test (e.g. 2-sample t test, ANOVA test). A comparative test method is NOT used for accepting or rejecting individual units, and the output usually does NOT have specification limits.

3.1.10 failure modes effects analysis, n—Failure modes and effects analysis (FMEA) is a step-by-step approach for identifying all possible failures in a design, a manufacturing or assembly process, or a product or service.

3.1.11 highly instrumental method, n—a test method where the result is not dependent on the operator.

3.1.12 lot tolerance percent defective (LTPD), n—in a sampling plan, represents a level of quality that a sampling plan routinely rejects. Lots at or above the LTPD are rejected at a probability level determined by the confidence level. The LTPD may be determined from the sampling plan’s Operating Characteristic (OC) Curve. Also known as the Rejectable Quality Level (RQL), Limiting Quality Level (LQ), and Unacceptable Quality Level (UQL).

3.1.13 measurement resolution, n—the smallest detectable increment that can be measured by the test method.

3.1.14 precision, n—see E177.

3.1.15 %P/T (precision to tolerance ratio), n—%P/T is a test method performance metric of a Gage R&R study. It measures the percentage of the tolerance attributable to test method variation. Depending on the component of test method variation being assessed, %P/T has three forms: %P/T_repeatability, %P/T_reproducibility, and %P/T_total.

3.1.16 operating characteristic (OC) curve, n—plot of process or lot quality versus the probability of acceptance; the protection offered by a sampling plan shown graphically.

3.1.17 repeatability, n—see E177.

3.1.18 reproducibility, n—see E177.

For referenced ASTM standards, visit the ASTM website, www.astm.org, or contact ASTM Customer Service at service@astm.org. For Annual Book of ASTM Standards volume information, refer to the standard’s Document Summary page on the ASTM website.

Available from American National Standards Institute (ANSI), 25 W. 43rd St., 4th Floor, New York, NY 10036, http://www.ansi.org.

http://asq.org/learn-about-quality/process-analysis-tools/overview/fmea.html
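The AQL, LTPD, and OC curve definitions in 3.1.6, 3.1.12, and 3.1.16 can be illustrated with a short Python sketch that computes the probability of acceptance for a single sampling plan. It assumes a simple binomial model (sample n units, accept the lot if at most c are nonconforming); the function names and the example plan are illustrative choices, not values taken from the guide.

```python
from math import comb


def acceptance_probability(n, c, p):
    """Probability of accepting a lot with fraction nonconforming p.

    Assumes a single sampling plan under a binomial model:
    inspect n units and accept if c or fewer are nonconforming.
    """
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(c + 1))


def oc_curve(n, c, qualities):
    """Return (quality, probability of acceptance) points for an OC curve."""
    return [(p, acceptance_probability(n, c, p)) for p in qualities]


# Hypothetical plan: 59 samples, zero nonconforming units allowed.
for p, pa in oc_curve(59, 0, [0.001, 0.01, 0.05, 0.10]):
    print(f"fraction nonconforming {p:.3f}: P(accept) = {pa:.3f}")
```

Reading such a curve at a probability of acceptance of about 95% gives a quality level in the sense of the AQL definition, while reading it at a low acceptance probability (one minus the chosen confidence level) gives a level in the sense of the LTPD definition.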

F3263 − 17

3.1.19 %R&R (reproducibility and repeatability), methodsneedtobevalidatedinmanycases,inordertobeable

n—%R&RisatestmethodperformancemetricofaGageR&R to rely on the results. This has to be done at the organization

study. It measures the percentage of the historical process

performing the tests but is also performed in the development

variation attributed to test method variation. Calculating

of standards in inter-laboratory studies (ILS), which are not

%R&R requires a known historical standard deviation.

substitutes for the validation work to be performed at the

organization performing the test.

3.1.20 self-evident, n—an inspection that meets both of the

following criteria: (1) The nonconformance is discrete in

4.1.1 Validations at the Testing Organization—Validations

nature, meaning there cannot be a transition region between

atthetestperformingorganizationincludeplanning,executing,

conformingandnon-conformingproductwhichhasatleastthe

and analyzing the studies. Planning should include description

potential for misclassification. (2) Little or no training is

of the scope of the test method which includes the description

required to discriminate between conforming and non-

of the test equipment as well as the measurement range of

conforming product.

samplesitwillbeusedfor,rationalesforthechoiceofsamples,

3.1.21 % study variation (%SV), n—a test method perfor-

the amount of samples as well as rationales for the choice of

mancemetricofaGageR&Rstudy.Itmeasuresthepercentage methodology.

ofthetotalvariationofaGageR&Rstudyattributedtothetest

4.1.2 Objective of ILS Studies—ILS studies (per E691-14)

method variation.

are not focused on the development of test methods but rather

3.1.22 subject matter expert (SME), n—subject matter ex-

with gathering the information needed for a test method

pert on the product and/or process. Engineers, inspector,

precision statement after the development stage has been

technicians, trainers and production supervisors who have a

successfully completed. The data obtained in the interlabora-

strong understanding of the failure modes may be considered

torystudymayindicatehowever,thatfurthereffortisneededto

SMEs.

improvethetestmethod.Precisioninthiscaseisdefinedasthe

repeatability and reproducibility of a test method, commonly

3.1.23 test method, n—see ASTM E2282.

known as gage R&R. For interlaboratory studies, repeatability

3.1.24 test systems, n—instrument and associated materials

deals with the variation associated within one appraiser oper-

required to perform the test.

atingasingletestsystematonefacilitywhereasreproducibility

3.1.25 trial, n—a trial is defined as one inspector or a piece

is concerned with variation between labs each with their own

of equipment in the case of a highly instrumental method

unique test system. It is important to understand that if an ILS

making one measurement or pass/fail decision. If three inspec-

isconductedinthismanner,reproducibilitybetweenappraisers

tors each evaluate the same device once, it counts as three

and test systems in the same lab are not assessed.

trials. Similarly, if one inspector evaluates the same device

4.1.3 Overview of the ILS Process—Essentially the ILS

twice during the test, it counts as two trials.

process consists of planning, executing, and analyzing studies

3.1.26 user defined with minimum sample size restrictions,

that are meant to assess the precision of a test method. The

n—the validation team selects the performance level, but the

stepsrequiredtodothisfromanASTMperspectiveare;create

test shall satisfy minimum requirements for the proportion of

a task group, identify an ILS coordinator, create the experi-

conforming and nonconforming trials or for measurement

mental design, execute the testing, analyze the results, and

collected to calculate variability.

document the resulting precision statement in the test method.

3.1.27 validation team, n—the responsible party for the test

For more detail on how to conduct an ILS refer to E691-14.

method validation that seeks cross-functional input, validates

4.1.4 Writing Precision and Bias Statements—Whenwriting

the effectiveness of the test method, and completes corrective

Precision and Bias Statements for an ASTM standard, the

actions associated with any test failures.

minimum expectation is that the Standard Practice outlined in

3.1.28 variable test method, n—a test method that produces

E177-14 will be followed. However, in some cases it may also

numerical results with reference to a continuous scale.

be useful to present the information in a form that is more

3.1.29 visual aid, n—visual media used for training pur-

easilyunderstoodbytheuserofthestandard.Examplescanbe

poses or to illustrate manufacturing process steps.

found in 4.1.5 below.

3.2 Acronyms: 4.1.5 Alternative Approaches to Analyzing and Stating

3.2.1 AQL—Acceptable Quality Level

Results—Variable Data:

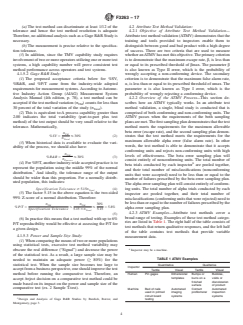

3.2.2 ATMV—Attribute Test Method Validation 4.1.5.1 Capability Study:

(1)Aprocesscapabilitygreaterthan2.00indicatesthetotal

3.2.3 DV—Design Verification

variability (part-to-part plus test method) of the test output

3.2.4 FMEA—Failure Modes and Effects Analysis

should be very small relative to the tolerance. Mathematically,

3.2.5 LTPD—Lot Tolerance Percent Defective

Specifiction Tolerance

Pp 5 $2.00

3.2.6 MVR—Master Validation Record

6σ

Total

(1)

$σ # Specification Tolerance

4. Significance and Use

Total

(2)Notice, σ in the above equation includes σ and

4.1 Addressing consensus standards with inter-laboratory

Total Part

studies (ILS) and methods specific to an organization. Test σ . Therefore, two conclusions can be made:

TM


(a) The test method can discriminate at least 1/12 of the tolerance and hence the test method resolution is adequate. Therefore, no additional analysis such as a Gage R&R Study is necessary.

(b) The measurement is precise relative to the specification tolerance.

(3) In addition, since the TMV capability study requires involvement of two or more operators utilizing one or more test systems, a high capability number will prove consistent test method performance across operators and test systems.

4.1.5.2 Gage R&R Study:

(1) The proposed acceptance criteria below for %SV, %R&R, and %P/T came from the industry-wide adopted requirements for measurement systems. According to Automotive Industry Action Group (AIAG) Measurement System Analysis Manual (4th edition, p. 78), a test method can be accepted if the test method variation ($\sigma_{TM}$) accounts for less than 30 percent of the total variation of the study ($\sigma_{Total}$).

(2) This is equivalent to:

$$\%SV = \frac{\sigma_{TM}}{\sigma_{Total}} \le 30\% \qquad (2)$$

(3) When historical data is available to evaluate the variability of the process, we should also have:

$$\%R\&R = \frac{\sigma_{TM}}{\sigma_{Process}} \le 30\% \qquad (3)$$

(4) For %P/T, another industry-wide accepted practice is to represent the population using the middle 99% of the normal distribution. And ideally, the tolerance range of the output should be wider than this proportion. For a normally distributed population, this indicates:

$$\text{Specification Tolerance} \ge 5.15\,\sigma_{Total} \qquad (4)$$

(5) The factor 5.15 in the above equation is the two-sided 99% Z-score of a normal distribution. Therefore:

$$\%P/T = \frac{\sigma_{TM}}{\text{Specification Tolerance}} \le \frac{\sigma_{TM}}{5.15 \times \sigma_{Total}} \le \frac{30\%}{5.15} = 5.8\% \qquad (5)$$

(6) In practice this means that a test method with up to 6% P/T reproducibility would be effective at assessing the P/T for a given design.

4.1.5.3 Power and Sample Size Study:

(1) When comparing the means of two or more populations using statistical tests, excessive test method variability may obscure the real difference (“Signal”) and decrease the power of the statistical test. As a result, a large sample size may be needed to maintain an adequate power (≥ 80%) for the statistical test. When the sample size becomes too large to accept from a business perspective, one should improve the test method before running the comparative test. Therefore, an accept/reject decision on a comparative test method could be made based on its impact on the power and sample size of the comparative test (ex. 2 Sample T-test).

Footnote: Design and Analysis of Gage R&R Studies by Burdick, Borror, and Montgomery, page 3.

4.2 Attribute Test Method Validation:

4.2.1 Objective of Attribute Test Method Validation—Attribute test method validation (ATMV) demonstrates that the training and tools provided to inspectors enable them to distinguish between good and bad product with a high degree of success. There are two criteria that are used to measure whether an ATMV has met this objective. The primary criterion is to demonstrate that the maximum escape rate, β, is less than or equal to its prescribed threshold of βmax. The parameter β is also known as Type II error, which is the probability of wrongly accepting a non-conforming device. The secondary criterion is to demonstrate that the maximum false alarm rate, α, is less than or equal to its prescribed threshold of αmax. The parameter α is also known as Type I error, which is the probability of wrongly rejecting a conforming device.

4.2.2 Overview of the ATMV Process—This section describes how an ATMV typically works. In an attribute test method validation, a single, blind study is conducted that is comprised of both conforming and non-conforming units. The ATMV passes when the requirements of both sampling plans are met. The first sampling plan demonstrates that the test method meets the requirements for the maximum allowable beta error (escape rate), and the second sampling plan demonstrates that the test method meets the requirements for the maximum allowable alpha error (false alarm rate). In other words, the test method is able to demonstrate that it accepts conforming units and rejects non-conforming units with high levels of effectiveness. The beta error sampling plan will consist entirely of nonconforming units. The beta trials conducted by each inspector are pooled together, and the total number of misclassifications (nonconforming units that were accepted) needs to be less than or equal to the number of failures prescribed by the beta error sampling plan. The alpha error sampling plan will consist entirely of conforming units. The alpha trials conducted by each inspector are pooled together, and the total number of misclassifications (conforming units that were rejected) needs to be less than or equal to the number of failures prescribed by the alpha error sampling plan.

4.2.3 ATMV Examples—Attribute test methods cover a broad range of testing. Examples of these test method categories are listed in Table 1. The right half of the table consists of test methods that return qualitative responses, and the left half of the table contains test methods that provide variable measurement data.

TABLE 1 ATMV Examples

| Inspector | Quantitative, Tactile | Quantitative, Visual | Qualitative, Tactile | Qualitative, Visual |
|---|---|---|---|---|
| Human | Pin gages | Dimensional templates | Bumps or burrs on a finished surface | Bubbles, voids or discoloration of product |
| Machine | Bed of nails used in printed circuit board testing | Automated imaging systems | Contact profilometer | Automated inspection systems |

Inspector may be a machine.
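The pooled pass/fail rule described in 4.2.2 can be illustrated with a short calculation. The sketch below is not part of the guide: the function names are hypothetical, trials are assumed to be independent with a constant error rate (a binomial model), and a 10% consumer's risk is assumed because that is the level at which a plan of n = 45, a = 0 corresponds to the 5.0% LTPD quoted in 4.2.6.11. Under those assumptions it estimates the error rate a sampling plan demonstrates, including the unplanned double sampling plan that 4.2.6.11 warns about.

```python
from math import comb


def binom_cdf(n, a, p):
    """Probability of at most `a` misclassifications in `n` independent trials."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(a + 1))


def single_plan(n, a):
    """Acceptance probability of a single sampling plan as a function of p."""
    return lambda p: binom_cdf(n, a, p)


def double_plan(n1, a1, r1, n2, a2):
    """Acceptance probability of a double sampling plan as a function of p.

    Stage 1 accepts at <= a1 failures and rejects at >= r1 failures.
    Otherwise n2 more trials are run and the plan accepts if the
    cumulative failure count is <= a2.
    """
    def accept(p):
        total = binom_cdf(n1, a1, p)  # accepted outright on the first stage
        for d1 in range(a1 + 1, r1):  # first-stage counts that trigger stage two
            p_d1 = comb(n1, d1) * p**d1 * (1 - p) ** (n1 - d1)
            total += p_d1 * binom_cdf(n2, a2 - d1, p)
        return total
    return accept


def ltpd(accept, risk=0.10):
    """Error rate at which the plan is still accepted with probability `risk`.

    Found by bisection; `risk` (consumer's risk) is an assumed value.
    """
    lo, hi = 0.0, 1.0
    for _ in range(60):
        mid = (lo + hi) / 2
        if accept(mid) > risk:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def pooled_plan_passes(misclassifications_by_inspector, a):
    """Pool the misclassifications of all inspectors and compare with `a`."""
    return sum(misclassifications_by_inspector) <= a


if __name__ == "__main__":
    print("single n=45, a=0:", ltpd(single_plan(45, 0)))
    print("single n=76, a=1:", ltpd(single_plan(76, 1)))
    print("double 45/0/2 then 31/1:", ltpd(double_plan(45, 0, 2, 31, 1)))
    print("pooled beta trials pass:", pooled_plan_passes([0, 0, 0], 0))
```

Under these assumptions the two single plans come out close to 5.0%, while the double plan comes out close to the 5.8% quoted in 4.2.6.11, which is why switching plans after a failure weakens what the test demonstrates.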


4.2.4 ATMV for Variable Measurement Data—It is a good practice to analyze variable test methods as variable measurement data whenever possible. However, there are instances where measurement data is more effectively treated as qualitative data. Example: A Sterile Barrier System (SBS) for medical devices with a required seal strength specification of 1.0-1.5 lb./in. is to be validated. A tensile tester is to be used to measure the seal strength, but it only has a resolution of 0.01 lbs. As a result, the Ppk calculations typically fail, even though there is very rarely a seal that is out of specification in production. The validation team determines that the data will need to be treated as attribute, and therefore, an ATMV will be required rather than a variable test method validation.

4.2.5 Self-evident Inspections—This section illustrates the requirements of a self-evident inspection called out in the definitions above. To be considered a self-evident inspection, a defect is both discrete in nature and requires little or no training to detect. The defect cannot satisfy just one or the other requirement.

4.2.5.1 The following may be considered self-evident inspections:

(1) Sensor light illuminates when lubricity level on a wire is correct and otherwise does not light up when lubrication is insufficient – Since the test equipment is creating a binary output for the inspector and the instructions are simple, this qualifies as self-evident. However, note that the test method involving the equipment still needs to be validated.

(2) Component is present in the assembly – If the presence of the component is reasonably easy to detect, this qualifies as self-evident since the outcome is binary.

(3) The correct component is used in the assembly – As long as the components are distinct from one another, this qualifies as self-evident since the outcome is binary.

4.2.5.2 The following would generally not be considered self-evident inspections:

(1) Burn or heat discoloration – Unless the component completely changes color when overheated, this inspection is going to require the inspector to detect traces of discoloration, which fails to satisfy the discrete conditions requirement.

(2) Improper forming of S-bend or Z-bend – The component is placed on top of a template, and the inspector verifies that the component is entirely within the boundaries of the template. The bend can vary from perfectly shaped to completely out of the boundaries in multiple locations with every level of bend in-between. Therefore, this is not a discrete outcome.

(3) No nicks on the surface of the component – A nick can vary in size from “not visible under magnification” to “not visible to the unaided eye” to “plainly visible to the unaided eye”. Therefore, this is not a discrete outcome.

(4) No burrs on the surface of a component – Inspectors vary in the sensitivity of their touch due to callouses on their fingers, and burrs vary in their degree of sharpness and exposure. Therefore, this is neither a discrete condition nor an easy-to-train instruction.

(5) Component is cracked – Cracks vary in length and severity, and inspectors vary in their ability to see visual defects. Therefore, this is neither a discrete outcome nor an easy-to-train instruction.

4.2.6 ATMV Steps:

4.2.6.1 Step 1 – Prepare the test method documentation:

(1) Make sure equipment qualifications have been completed or are at least in the validation plan to be completed prior to executing the ATMV.

(2) Examples of equipment settings to be captured in the test method documentation include environmental or ambient conditions, magnification level on microscopes, lighting and feed rate on automatic inspection systems, pressure on a vacuum decay test and lighting standards in a cleanroom, which might involve taking lux readings in the room to characterize the light level.

(3) Work with training personnel to create pictures of the defects. It may be beneficial to also include pictures of good product and less extreme examples of the defect, since the spectrum of examples will provide better resolution for decision making.

(4) Where possible, the visual design standards should be shown at the same magnification level as will be used during inspection.

(5) Make sure that the ATMV is run using the most recent visual design standards and that they are good representations of the potential defects.

4.2.6.2 Step 2 – Establish acceptance criteria:

(1) Identify which defects need to be included in the test.

(2) Use scrap history to identify the frequency of each defect code or type. This could also be information that is simply provided by the SME.

(3) Do not try to squeeze too many defects into a single inspection step. As more defects are added to an inspection process, inspectors will eventually reach a point where they are unable to check for everything, and this threshold may also show itself in the ATMV testing. Limits will vary by the type of product and test method, but for visual inspection, 15-20 defects may be the maximum number that is attainable.

4.2.6.3 Step 3 – Determine the required performance level of each defect:

(1) If the ATMV testing precedes completion of a risk analysis, the suggested approach is to use a worst-case outcome or high risk designation. This needs to be weighed against the increase in sample size associated with the more conservative rating.

(2) Failure modes that do not have an associated risk index may be tested to whatever requirements are agreed upon by the validation team. If a component or assembly can be scrapped for a particular failure mode, good business sense is to make sure that the inspection is effective by conducting an ATMV.

(3) Pin gages are an example of a variable output that is sometimes treated as attribute data due to poor resolution combined with tight specification limits. In this application, inspectors are trained prior to the testing to understand the level of friction that is acceptable versus unacceptable.


(4) Incoming inspection is another example of where variable data is often treated as attribute. Treating variable measurements as pass/fail outcomes can allow for less complex measurement tools such as templates and require less training for inspectors. However, these benefits should be weighed against the additional samples that may be required and the degree of information lost. For instance, attribute data would say that samples centered between the specification limits are no different than samples just inside of the specification limits. This could result in greater downstream costs and more difficult troubleshooting for yield improvements.

4.2.6.4 Step 4 – Determine acceptance criteria:

(1) Refer to your company’s predefined confidence and reliability requirements; or

(2) Refer to the chart example in Appendix X1.

4.2.6.5 Step 5 – Create the validation plan:

(1) Determine the proportion of each defect in the sample.

(a) While some sort of rationale should be provided for how the defect proportions are distributed in the ATMV, there is some flexibility in choosing the proportions. Therefore, different strategies may be employed for different products and processes, for example 10 defective parts in 30 or 20 defects in 30. The cost of the samples along with the risk associated with incorrect outcomes affects decision making.

(b) Scrap production data will often not be available for new products. In these instances, use historical scrap from a similar product or estimate the expected scrap proportions based on process challenges that were observed during development. Another option is to represent all of the defects evenly.

4.2.6.6 Step 6 – Determine the number of inspectors and devices needed:

(1) When the number of trials is large, consider employing more than three inspectors to reduce the number of unique parts required for the test. More inspectors can inspect the same parts without adding more parts to achieve additional trials and greater statistical power.

(2) Inspectors are not required to all look at the same samples, although this is probably the simplest approach.

(3) For semi-automated inspection systems that are sensitive to fixture placement or setup by the inspector, multiple inspectors should still be employed for the test.

(4) For automated inspection systems that are completely inspector independent, only one inspector is needed. However, in order to reduce the number of unique parts needed, consider controlling other sources of variation such as various lighting conditions, temperature, humidity, inspection time, day/night shift, and part orientations.

4.2.6.7 Step 7 – Prepare the Inspectors:

(1) Train the inspectors prior to testing:

(a) Explain the purpose and importance of ATMV to the inspectors.

(b) Inspector training should be a two-way process. The validation team should seek feedback from the inspectors on the quality and clarity of visual standards, pictures and written descriptions in the inspection documentation.

(1) Are there any gray areas that need clarification?

(2) Would a diagram be more effective than an actual picture of the defect?

(c) Review borderline samples. Consider adding pictures/diagrams of borderline samples to the visual standards. In some cases there may be a difference between functional and cosmetic defects. This may vary by method/package type.

(d) Some validation teams have performed dry run testing to characterize the current effectiveness of the inspection. Note that the same samples should not be used for dry run testing and final testing if the same inspectors are involved in both tests.

4.2.6.8 Step 8 – Select a representative group of inspectors as the test group:

(1) There will be situations, such as site transfer, where all of the inspectors have about the same level of familiarity with the product. If this is the case, select the test group of inspectors based on other sources of variability within the inspectors, such as their production shift, skill level or years of experience with similar product inspection.

(2) The inspectors selected for testing should at least have familiarity with the product, or this becomes an overly conservative test. For example, a lack of experience with the product may result in an increase in false positives.

(3) Document that a varied group of inspectors were selected for testing.

4.2.6.9 Step 9 – Prepare the Test Samples:

(1) Collect representative units.

(a) Be prepared for ATMV testing by collecting representative defect devices early and often in the development process. Borderline samples are particularly valuable to collect at this time. However, be aware that a sample that cannot even be agreed upon as good or bad by the subject matter experts is only going to cause problems in the testing. Instead, choose samples that are representative of “just passing” and “just failing” relative to the acceptance criteria.

(2) Use the best judgment as to whether the man-made defect samples adequately represent defects that naturally occur during the sealing process, distribution simulation, or other manufacturing processes, for example. If a defect cannot be adequately replicated and/or the occurrence rate is too low to provide a sample for the testing, this may be a situation where the defect type can be omitted with rationale from the testing.

(3) Estimate from a master plan how many defects will be necessary for testing, and try to obtain 1.5 times the estimated number of samples required for testing. This will allow for weeding out broken samples and less desirable samples.

(4) Traceability of samples may not be necessary. The only requirement on samples is that they accurately depict conformance or the intended nonconformance. However, capturing traceability information may be helpful for investigational purposes if there is difficulty validating the method or if it is desirable to track outputs to specific non-conformities.

(5) There should preferably be more than one SME to confirm the status of each sample in the test. Keep in mind that a trainer or production supervisor might also be SMEs on the process defect types.


(6) Select a storage method appropriate for the particular sample. Potential options include tackle boxes with separate labeled compartments, plastic resealable bags and plastic vials. Refer to your standardized test method for pre-conditioning requirements.

(7) Writing a secret code number on each part successfully conceals the type of defect, but it is NOT an effective means of concealing the identity of the part. In other words, if an inspector is able to remember the identification number of a sample and the defect they detected on that sample, then the test has been compromised the second time the inspector is given that sample. If each sample is viewed only once by each inspector, then placing the code number on the sample is not an issue.

(8) Video testing is another option for some manual visual inspections, especially if the defect has the potential to change over time, such as a crack or foreign material.

(9) If the product is extremely long/large, such as a guidewire, guide catheter, pouch, tray, container closure system (jar & lid), and the defects of interest are only in a particular segment of the product, one can choose to detach the pertinent segment from the rest of the sample. If extenuating factors such as length or delicacy are an element in making the full product challenging to inspect, then the full product should be used. Example: leak test where liquid in the package could impact the test result.

(10) Take pictures or videos of samples with defects and store in a key for future reference.

4.2.6.10 Step 10 – Develop the protocol:

(1) Suggested protocol sections

(a) Purpose and scope.

(b) Reference to the test method document being validated.

(c) A list of references to other related documents, if applicable.

(d) A list of the types of equipment, instruments, fixtures, etc. used for the TMV.

(e) TMV study rationale, including:

(1) Statistical method used for TMV;

(2) Characteristics measured by the test method and the measurement range covered by the TMV;

(3) Description of the test samples and the rationale;

(4) Number of samples, number of operators, and number of trials;

(5) Data analysis method, including any historical statistics that will be used for the data analysis (for example, the historical average for calculating %P/T with a one-sided specification limit).

(f) TMV acceptance criteria.

(g) Validation test procedures (for example, sample preparation, test environment setup, test order, data collection method, etc.).

(h) Methods of randomization

(1) There are multiple ways to randomize the order of the samples. In all cases, store the randomized order in another column, then repeat and append the second randomized list to the first stored list for each sample that is being inspected a second time by the same inspector.

(2) Consider using Excel, Minitab, or an online random number generator to create the run order for the test.

(3) Draw numbers from a container until the container is empty and record the order.

(i) Some companies apply time limits to each sample or a total time limit for the test so that the testing is more representative of the fast-paced requirements of the production environment. If used, this should be noted in the protocol.

4.2.6.11 Step 11 – Execute the protocol:

(1) Be sure to comply with the pre-conditioning requirements during protocol execution.

(2) Avoid situations where the inspector is hurrying to complete the testing. Estimate how long each inspector will take and plan ahead to start each test with enough time in the shift for the inspector to complete their section, or communicate that the inspector will be allowed to go for lunch or break during the test.

(3) Explain to the inspector which inspection steps are being tested. Clarify whether there may be more than one defect per sample. However, note that more than one defect on a sample can create confusion during the testing.

(4) If the first person fails to correctly identify the presence or absence of a defect, it is a business/team decision on whether to continue the protocol with the remaining inspectors. Completing the protocol will help characterize whether the issues are widespread, which could help avoid failing again the next time. On the other hand, aborting the ATMV right away could save considerable time for everyone.

(5) It is not good practice to change the sampling plan during the test if a failure occurs. For instance, if the original beta error sampling plan was n = 45, a = 0, and a failure occurs, updating the sampling plan to n = 76, a = 1 during the test is incorrect since the sampling plan being performed is actually a double sampling plan with n1 = 45, a1 = 0, r1 = 2, n2 = 31, a2 = 1. This results in an LTPD = 5.8%, rather than the 5.0% LTPD in the original plan.

(6) Be prepared with replacement samples in reserve if a defect sample becomes damaged.

(7) Running the test concurrently with all of the test inspectors is risky, since the administrator will be responsible for keeping track of which inspector has each unlabeled sample.

(8) Review misclassified samples after each inspector to determine whether the inspector might have detected a defect that the prep team missed.

Footnote: For some types of testing, instrument performance may be verified during execution of the validation. Performing this action would not be considered modifying the sample size. Examples: high voltage leak detection or vacuum decay.

4.2.6.12 Step 12 – Analyze the test results:

(1) Scrapping for the wrong defect code or defect type:

(a) There will be instances where an inspector describes a defect with a word that wasn’t included in the protocol. The validation team needs to determine whether the word used is synonymous with any of the listed names for this particular defect. If not, then the trial fails. If the word matches the defect, then note the exception in the deviations section of the report.

(2) Excluding data from calculations of performance:


(a) If a defect is discovered after the test is complete, there are two suggested options. First, the inspector may be tested on a replacement part later if necessary. Alternatively, if the results of the individual trial will not alter the final result of the sampling plan, then the replacement trials can be bypassed. This rationale should be documented in the deviations section of the report.

(1) As an example, consider an alpha sampling plan of n = 160, a = 13 that is designed to meet a 12% alpha error rate. After all inspectors had completed the test, it was determined that one of the conforming samples had a defect, and five of the six trials on this sample identified this defect, while one of the six called this a conforming sample. The results of the six trials need to be scratched, but do they need to be repeated? If the remaining 154 conforming trials have few enough failures to still meet the required alpha error rate of 12%, then no replacement trials are necessary. The same rationale would also apply to a defective sample in a beta error sampling plan.

(2) If a vacuum decay test sample should have failed the leak test, the protocol may call for sending the sample back to the company that created the defective sample for confirmation that it is indeed still defective. If found to no longer be representative of the desired defect type, then the sample would be excluded from the calculations.

4.2.6.13 Step 13 – Complete the validation report:

(1) When the validation test passes:

(a) If the ATMV was difficult to pass or it requires special inspector training, consider adding an appraiser proficiency test to limit those who are eligible for the process inspection.

(2) When the validation test fails:

(a) Repeating the validation

(1) There is no restriction on how many times an ATMV can fail. However, some common sense should be applied, as a high number of attempts appears to be a test-until-you-pass approach and could become an audit flag. Therefore, a good rule of thumb is to perform a dry run or feasibility assessment prior to execution to optimize appraiser training and test methodology in order to reduce the risk of failing the protocol. If an ATMV fails, members of the validation team could take the test themselves. If the validation team passes, then something isn’t being communicated clearly to the inspectors, and additional interviews are needed to identify the confusion. If the validation team also fails the ATMV, this is a strong indication that the visual inspection or attribute test method is not ready for release.

(b) User Error

(1) Examples of ATMV test error include:

(a) Microscope set at the wrong magnification.

(b) Sample traceability compromised during the ATMV due to a sample mix-up.

(2) A test failure demonstrates that the variability among inspectors needs to be reduced. The key is to understand why the test failed, correct the issue and document rationale, so that subsequent tests do not appear to be a test-until-you-pass approach.

(3) As much as possible, the same samples should not be used for the subsequent ATMV if the same inspectors are being tested that were in the previous ATMV.

(4) Interview any inspectors who committed classification errors to understand if their errors were due to a misunderstanding of the acceptance criteria or simply a miss.

(5) To improve the proficiency of defect detection/test methodology the following are some suggested best practices:

(a) Define an order of inspection in the work instruction for the inspectors, such as moving from proximal end to distal end or doing inside then outside.

(b) When inspecting multiple locations on a component or assembly for specific attributes, provide a visual template with ordered numbers to follow during the inspection.

(c) Transfer the microscope picture to a video screen for easier viewing.

(d) If there are too many defect types to look for at one inspection step, some may get missed. Move any inspections not associated with the process back upstream to the process that would have created the defect.

(6) When an inspector has misunderstood the criteria, the need is to better differentiate good and nonconforming product. Here are some ideas:

(a) Review the visual standard of the defect with the inspectors and trainers.

(b) Determine whether a diagram might be more informative than a photo.

(c) Change the magnification level on the microscope.

(d) If an ATMV is failing because borderline defects are being wrongly accepted, slide the manufacturing acceptance criteria threshold to a more conservative level. This will potentially increase the alpha error rate, which typically has a higher error rate allowance anyway, but the beta error rate should decrease.

(7) Consider using an attribute agreement analysis to help identify the root cause of the ATMV failure as it is a good tool to assess the agreement of nominal or ordinal ratings given by multiple appraisers. The analysis will calculate both the repeatability of each individual appraiser and the reproducibility between appraisers, similar to a variable gage R&R.

4.2.6.14 Step 14 – Post-Validation Activities:

(1) Test Method Changes

(a) If requirements, standards, or test methods change, the impact of the other two factors needs to be assessed.

(b) As an example, many attribute test methods such as visual inspection have no impact on the form, fit or function of the device being tested. Therefore, it is easy to overlook that changes to the test method criteria documented in design prints, visual design standards, and visual process standards need to be closely evaluated for what impact the change might have on the performance of the device.

(c) A good practice is to bring together representatives from operations and design to review the proposed change and consider potential outcomes of the change.

(d) For example, changes to the initial visual inspection standards that were used during design verification builds may not identify defects prior to going through the process of distribution simulation. Stresses that were missed during this

...
