ISO/IEC 14496-20:2006

Information technology — Coding of audio-visual objects — Part 20: Lightweight Application Scene Representation (LASeR) and Simple Aggregation Format (SAF)

ISO/IEC 14496-20:2006 defines a scene description format (LASeR) and an aggregation format (SAF) suitable for representing and delivering rich-media services to resource-constrained devices such as mobile phones. A rich media service is a dynamic, interactive collection of multimedia data such as audio, video, graphics, and text. Services range from movies enriched with vector graphic overlays and interactivity (possibly enhanced with closed captions) to complex multi-step services with fluid interaction and different media types at each step. LASeR aims at fulfilling all the requirements of rich-media services at the scene description level. LASeR supports: an optimized set of objects inherited from SVG to describe rich-media scenes; a small set of key compatible extensions over SVG; the ability to encode and transmit a LASeR stream and then reconstruct SVG content; dynamic updating of the scene to achieve a reactive, smooth and continuous service; simple yet efficient compression to improve delivery and parsing times, as well as storage size, one of the design goals being to allow both for a direct implementation of the SDL as documented, as well as for a decoder compliant with ISO/IEC 23001-1 to decode the LASeR bitstream; an efficient interface with audio and visual streams with frame-accurate synchronization; use of any font format, including the OpenType industry standard; and easy conversion from other popular rich-media formats in order to leverage existing content and developer communities.

Technologies de l'information — Codage des objets audiovisuels — Partie 20: Représentation de scène d'application allégée (LASeR) et format d'agrégation simple (SAF)

General Information

- Status

- Withdrawn

- Publication Date

- 14-Jun-2006

- Withdrawal Date

- 14-Jun-2006

- Current Stage

- 9599 - Withdrawal of International Standard

- Start Date

- 20-Nov-2008

- Completion Date

- 12-Feb-2026

Relations

- Effective Date

- 06-Jun-2022

- Effective Date

- 06-Jun-2022

- Effective Date

- 28-Aug-2008

- Effective Date

- 28-Aug-2008

- Effective Date

- 15-Apr-2008

- Effective Date

- 15-Apr-2008

Frequently Asked Questions

ISO/IEC 14496-20:2006 is a standard published by the International Organization for Standardization (ISO). Its full title is "Information technology — Coding of audio-visual objects — Part 20: Lightweight Application Scene Representation (LASeR) and Simple Aggregation Format (SAF)".

ISO/IEC 14496-20:2006 is classified under the following ICS (International Classification for Standards) categories: 35.040 - Information coding; 35.040.40 - Coding of audio, video, multimedia and hypermedia information. The ICS classification helps identify the subject area and facilitates finding related standards.

ISO/IEC 14496-20:2006 has the following relationships with other standards: it has inter-standard links to ISO/IEC 14496-20:2006/Amd 1:2008, ISO/IEC 14496-20:2006/FDAM 2, ISO/IEC 14496-20:2008/Amd 1:2009 and ISO/IEC 14496-20:2008; it is listed as excused to ISO/IEC 14496-20:2006/Amd 1:2008 and ISO/IEC 14496-20:2006/FDAM 2. Understanding these relationships helps ensure you are using the most current and applicable version of the standard.

Standards Content (Sample)

INTERNATIONAL ISO/IEC

STANDARD 14496-20

First edition

2006-06-15

Information technology — Coding

of audio-visual objects —

Part 20:

Lightweight Application Scene

Representation (LASeR) and Simple

Aggregation Format (SAF)

Technologies de l'information — Codage des objets audiovisuels —

Partie 20: Représentation de scène d'application allégée (LASeR) et

format d'agrégation simple (SAF)

Reference number

ISO/IEC 14496-20:2006(E)

© ISO/IEC 2006

PDF disclaimer

This PDF file may contain embedded typefaces. In accordance with Adobe's licensing policy, this file may be printed or viewed but

shall not be edited unless the typefaces which are embedded are licensed to and installed on the computer performing the editing. In

downloading this file, parties accept therein the responsibility of not infringing Adobe's licensing policy. The ISO Central Secretariat

accepts no liability in this area.

Adobe is a trademark of Adobe Systems Incorporated.

Details of the software products used to create this PDF file can be found in the General Info relative to the file; the PDF-creation

parameters were optimized for printing. Every care has been taken to ensure that the file is suitable for use by ISO member bodies. In

the unlikely event that a problem relating to it is found, please inform the Central Secretariat at the address given below.

© ISO/IEC 2006

All rights reserved. Unless otherwise specified, no part of this publication may be reproduced or utilized in any form or by any means,

electronic or mechanical, including photocopying and microfilm, without permission in writing from either ISO at the address below or

ISO's member body in the country of the requester.

ISO copyright office

Case postale 56 • CH-1211 Geneva 20

Tel. + 41 22 749 01 11

Fax + 41 22 749 09 47

E-mail copyright@iso.org

Web www.iso.org

Published in Switzerland

ii © ISO/IEC 2006 – All rights reserved

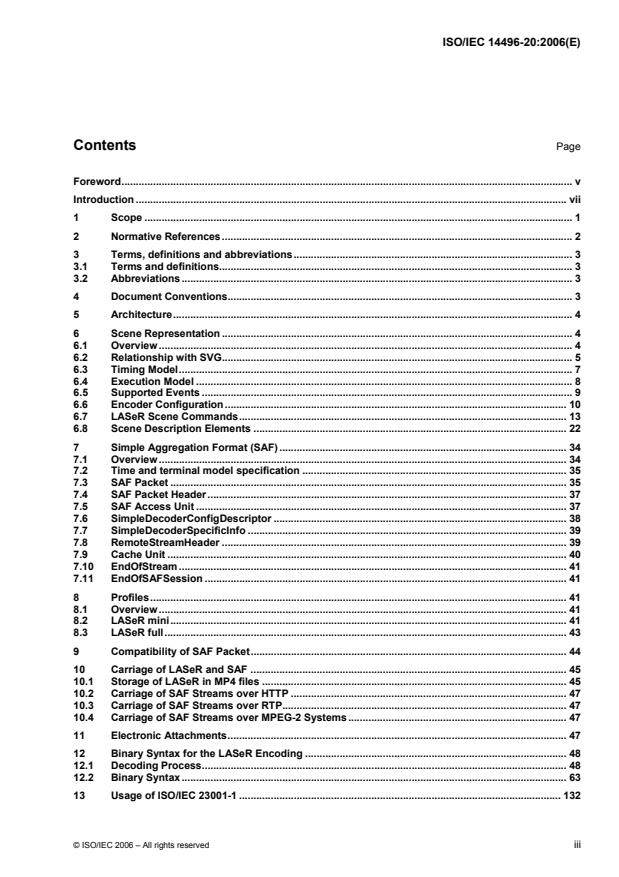

Contents

Foreword

Introduction

1 Scope

2 Normative References

3 Terms, definitions and abbreviations

3.1 Terms and definitions

3.2 Abbreviations

4 Document Conventions

5 Architecture

6 Scene Representation

6.1 Overview

6.2 Relationship with SVG

6.3 Timing Model

6.4 Execution Model

6.5 Supported Events

6.6 Encoder Configuration

6.7 LASeR Scene Commands

6.8 Scene Description Elements

7 Simple Aggregation Format (SAF)

7.1 Overview

7.2 Time and terminal model specification

7.3 SAF Packet

7.4 SAF Packet Header

7.5 SAF Access Unit

7.6 SimpleDecoderConfigDescriptor

7.7 SimpleDecoderSpecificInfo

7.8 RemoteStreamHeader

7.9 Cache Unit

7.10 EndOfStream

7.11 EndOfSAFSession

8 Profiles

8.1 Overview

8.2 LASeR mini

8.3 LASeR full

9 Compatibility of SAF Packet

10 Carriage of LASeR and SAF

10.1 Storage of LASeR in MP4 files

10.2 Carriage of SAF Streams over HTTP

10.3 Carriage of SAF Streams over RTP

10.4 Carriage of SAF Streams over MPEG-2 Systems

11 Electronic Attachments

12 Binary Syntax for the LASeR Encoding

12.1 Decoding Process

12.2 Binary Syntax

13 Usage of ISO/IEC 23001-1

13.1 Introduction

13.2 Electronic Attachments

13.3 Type Codecs

13.4 Type codecs for use with ISO/IEC 23001-1 decoders

13.5 DecoderInit

13.6 Decoding Process

Annex A (informative) Patent statements

Bibliography

Foreword

ISO (the International Organization for Standardization) and IEC (the International Electrotechnical

Commission) form the specialized system for worldwide standardization. National bodies that are members of

ISO or IEC participate in the development of International Standards through technical committees

established by the respective organization to deal with particular fields of technical activity. ISO and IEC

technical committees collaborate in fields of mutual interest. Other international organizations, governmental

and non-governmental, in liaison with ISO and IEC, also take part in the work. In the field of information

technology, ISO and IEC have established a joint technical committee, ISO/IEC JTC 1.

International Standards are drafted in accordance with the rules given in the ISO/IEC Directives, Part 2.

The main task of the joint technical committee is to prepare International Standards. Draft International

Standards adopted by the joint technical committee are circulated to national bodies for voting. Publication as

an International Standard requires approval by at least 75 % of the national bodies casting a vote.

ISO/IEC 14496-20 was prepared by Joint Technical Committee ISO/IEC JTC 1, Information technology,

Subcommittee SC 29, Coding of audio, picture, multimedia and hypermedia information.

ISO/IEC 14496 consists of the following parts, under the general title Information technology — Coding of

audio-visual objects:

⎯ Part 1: Systems

⎯ Part 2: Visual

⎯ Part 3: Audio

⎯ Part 4: Conformance testing

⎯ Part 5: Reference software

⎯ Part 6: Delivery Multimedia Integration Framework (DMIF)

⎯ Part 7: Optimized reference software for coding of audio-visual objects [Technical Report]

⎯ Part 8: Carriage of ISO/IEC 14496 contents over IP networks

⎯ Part 9: Reference hardware description [Technical Report]

⎯ Part 10: Advanced Video Coding

⎯ Part 11: Scene description and application engine

⎯ Part 12: ISO base media file format

⎯ Part 13: Intellectual Property Management and Protection (IPMP) extensions

⎯ Part 14: MP4 file format

⎯ Part 15: Advanced Video Coding (AVC) file format

⎯ Part 16: Animation Framework eXtension (AFX)

⎯ Part 17: Streaming text format

⎯ Part 18: Font compression and streaming

⎯ Part 19: Synthesized texture stream

⎯ Part 20: Lightweight Application Scene Representation (LASeR) and Simple Aggregation Format (SAF)

⎯ Part 21: MPEG-J GFX

⎯ Part 22: Open Font Format

Introduction

ISO/IEC 14496-20 specifies syntax and semantics for:

— The Lightweight Application Scene Representation (LASeR), specified in Clause 6, which is a binary

format for encoding 2D scenes and updates of scenes. The binary format and the scene representation

(based on SVG Tiny), are both designed to be suitable for lightweight embedded devices such as mobile

phones.

— A Simple Aggregation Format (SAF), specified in Clause 7, to efficiently and easily transport LASeR data

together with audio and/or video content over various delivery channels. This multiplexing scheme is

designed to be simple to implement and to allow efficient demultiplexing on low-end devices.

The International Organization for Standardization (ISO) and International Electrotechnical Commission (IEC)

draw attention to the fact that it is claimed that compliance with this document may involve the use of a patent.

The ISO and IEC take no position concerning the evidence, validity and scope of this patent right.

The holder of this patent right has assured the ISO and IEC that he is willing to negotiate licences under

reasonable and non-discriminatory terms and conditions with applicants throughout the world. In this respect,

the statement of the holder of this patent right is registered with the ISO and IEC. Information may be obtained

from the companies listed in Annex A.

Attention is drawn to the possibility that some of the elements of this document may be the subject of patent

rights other than those identified in Annex A. ISO and IEC shall not be held responsible for identifying any or

all such patent rights.

INTERNATIONAL STANDARD ISO/IEC 14496-20:2006(E)

Information technology — Coding of audio-visual objects —

Part 20:

Lightweight Application Scene Representation (LASeR)

and Simple Aggregation Format (SAF)

1 Scope

This International Standard defines a scene description format (LASeR) and an aggregation format (SAF)

respectively suitable for representing and delivering rich-media services to resource-constrained devices such

as mobile phones.

LASeR aims at fulfilling all the requirements of rich-media services at the scene description level. LASeR

supports:

⎯ an optimized set of objects inherited from SVG to describe rich-media scenes;

⎯ a small set of key compatible extensions over SVG;

⎯ the ability to encode and transmit a LASeR stream and then reconstruct SVG content;

⎯ dynamic updating of the scene to achieve a reactive, smooth and continuous service;

⎯ simple yet efficient compression to improve delivery and parsing times, as well as storage size, one of the

design goals being to allow both for a direct implementation of the SDL as documented, as well as for a

decoder compliant with ISO/IEC 23001-1 to decode the LASeR bitstream;

⎯ an efficient interface with audio and visual streams with frame-accurate synchronization;

⎯ use of any font format, including the OpenType industry standard; and

⎯ easy conversion from other popular rich-media formats in order to leverage existing content and

developer communities.

Technology selection criteria for LASeR included compression efficiency, but also code and memory footprint

and performance. Other aims included: scalability, adaptability to the user context, extensibility of the format,

ability to define small profiles, feasibility of a J2ME implementation, error resilience and safety of

implementations.

SAF aims at fulfilling all the requirements of rich-media services at the interface between media/scene

description and existing transport protocols:

⎯ simple aggregation of any type of stream;

⎯ signaling of MPEG and non-MPEG streams;

⎯ optimized packet headers for bandwidth-limited networks;

⎯ easy mapping to popular streaming formats;

⎯ cache management capability; and

⎯ extensibility.

SAF has been designed to complement LASeR for simple, interactive services, bringing:

⎯ efficient and dynamic packaging to cope with high latency networks;

⎯ media interleaving; and

⎯ synchronization support with a very low overhead.

This International Standard defines the usage of SAF for LASeR content. However, LASeR can be used

independently from SAF.

2 Normative References

The following referenced documents are indispensable for the application of this document. For dated

references, only the edition cited applies. For undated references, the latest edition of the referenced

document (including any amendments) applies.

ISO/IEC 13818-1, Information technology — Generic coding of moving pictures and associated audio information — Part 1: Systems

ISO/IEC 14496-1, Information technology — Coding of audio-visual objects — Part 1: Systems

ISO/IEC 14496-12, Information technology — Coding of audio-visual objects — Part 12: ISO base media file

format

ISO/IEC 14496-18, Information technology — Coding of audio-visual objects — Part 18: Font compression

and streaming

RFC 2045, Multipurpose Internet Mail Extensions (MIME) Part one: Format of Internet message bodies,

http://www.ietf.org/rfc/rfc2045.txt

RFC 2326, Real Time Streaming Protocol, http://www.ietf.org/rfc/rfc2326.txt

RFC 2965, HTTP State Management Mechanism, http://www.ietf.org/rfc/rfc2965.txt

W3C SVG11, Scalable Vector Graphics (SVG) 1.1 Specification [Recommendation],

http://www.w3.org/TR/2003/REC-SVG11-20030114/

W3C SMIL2, Synchronized Multimedia Integration Language (SMIL 2.0) — [Second Edition], 07 January 2005.

http://www.w3.org/TR/2005/REC-SMIL2-20050107/

W3C CSS, Cascading Style Sheets, level 2 [Recommendation],

http://www.w3.org/TR/1998/REC-CSS2-19980512/

W3C DOM, Document Object Model Level 2 Events Specification, Version 1.0, W3C Recommendation

13 November, 2000. http://www.w3.org/TR/2000/REC-DOM-Level-2-Events-20001113

W3C XML Events, an Events Syntax for XML, W3C Recommendation 14 October 2003.

http://www.w3.org/TR/2003/REC-xml-events-20031014

W3C xml:id Version 1.0, W3C Recommendation 12 July 2005,

http://www.w3.org/TR/2005/PR-xml-id-20050712/

W3C Xlink, XML Linking Language, W3C Recommendation, 27 June 2001.

http://www.w3.org/TR/2001/REC-xlink-20010627/

3 Terms, definitions and abbreviations

3.1 Terms and definitions

For the purposes of this document, the following terms and definitions apply.

3.1.1

access unit

individually accessible portion of data within a media stream

NOTE An access unit is the smallest data entity to which timing information can be attributed.

3.1.2

media time line

axis on which times are expressed within the transport or system carrying a LASeR or other stream

3.1.3

normal play time

indicates the stream absolute position relative to the beginning of the presentation

[RFC 2326]

3.1.4

packet

smallest data entity managed by SAF consisting of a header and a payload

3.1.5

scene segment

a set of access units of a LASeR stream, where only the first access unit contains a LASeRHeader

3.1.6

scene time line

axis on which times are expressed within the SVG/LASeR scene, e.g. begin and end

3.2 Abbreviations

CSS Cascading Style Sheets, a W3C standard

SMIL Synchronized Multimedia Integration Language, a W3C standard

SVG Scalable Vector Graphics, a W3C standard

4 Document Conventions

This document uses the following styling conventions for various types of information.

Any name of element, attribute, descriptor or command defined in this specification is styled in bold italic, such

as Add. Any name of element, attribute, descriptor or command defined in another specification is prefixed

with the name of that specification, such as SVG animate or SMIL video.

XML examples use the following style:

version="1.1" baseProfile="tiny">

…

SDL descriptions of binary syntax use the following style:

Insert extends LASeRUpdate {

const bit(UpdateBits) InsertCode;

uint(idBits) ref;

The following is the style used for ECMA Script:

function Insert(parentId, field, value) {…

5 Architecture

LASeR is defined in terms of abstract access units, which may be adapted for transmission over a variety of protocols. LASeR streams may be packaged with some or all of their related media into files of the ISO base media file format family (e.g. MP4) and delivered over reliable protocols. There is also a simple aggregation format (SAF), which aggregates a LASeR stream with some or all of its associated media into stream order. SAF may be delivered over reliable or unreliable protocols. Finally, LASeR streams could be adapted to other delivery protocols such as RTP [RFC 2326] or MPEG-2 transport [ISO/IEC 13818-1]; however, the definition of these mappings is outside the scope of this specification.

Figure 1 presents the LASeR and SAF architecture.

[Figure 1: block diagram with the labels Application; LASeR (SVG Scene Tree, Extensions, LASeR Commands, Binary Encoding); Audio, Video, Image, Font, …; SAF; Transport; Network]

Figure 1 — Architecture of LASeR and SAF

6 Scene Representation

6.1 Overview

In this document, a multimedia presentation is a collection of a scene description and media (zero, one or more). A medium is an individual piece of audiovisual content of one of the following types: image (still picture), video (moving pictures), audio and, by extension, font data. A scene description consists of text, graphics, animation, interactivity and spatial, audio and temporal layout.

A scene description specifies four aspects of a presentation:

⎯ how the scene elements (media or graphics) are organised spatially, e.g. the spatial layout of the

visual elements;

⎯ how the scene elements (media or graphics) are organised temporally, i.e. if and how they are

synchronised, when they start or end;

⎯ how to interact with the elements in the scene (media or graphics), e.g. when a user clicks on an

image;

⎯ and if the scene is changing, how the scene changes happen.

A scene description may change by means of animations. The different states of the scene during the whole

animation may be deterministic (i.e. known when the animation starts) or not. The former case is illustrated by

parametric animations. The latter case is illustrated by, for instance, a server sending modification to the

scene on the fly. The sequence of a scene description and its timed modifications is called a scene description

stream.

The scene description format specified herein is called LASeR. A scene description stream is called a LASeR

Stream. Modifications to the scenes are called LASeR Commands. A command is used to act on elements

or attributes of the scene at a given instant in time. LASeR Commands that need to be executed at the same

time are grouped into one LASeR Access Unit (AU).
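The grouping rule above can be illustrated with a short Python sketch. The `Command` and `AccessUnit` classes and the `group_into_access_units` helper are hypothetical names chosen for this example only; they are not part of the LASeR syntax, and the integer times are arbitrary illustrative values.

```python
from dataclasses import dataclass, field
from itertools import groupby


@dataclass
class Command:
    """Hypothetical stand-in for a LASeR Command (not the normative syntax)."""
    time: int    # instant at which the command must execute (illustrative units)
    name: str    # command name, e.g. "Insert"
    target: str  # id of the element or attribute the command acts on


@dataclass
class AccessUnit:
    """Hypothetical container for all commands executed at the same instant."""
    time: int
    commands: list = field(default_factory=list)


def group_into_access_units(commands):
    """Group commands sharing an execution time into one access unit each."""
    # sorted() is stable, so commands with equal times keep their relative order.
    ordered = sorted(commands, key=lambda c: c.time)
    return [AccessUnit(t, list(g)) for t, g in groupby(ordered, key=lambda c: c.time)]


stream = group_into_access_units([
    Command(0, "NewScene", "root"),
    Command(40, "Insert", "g1"),
    Command(40, "Add", "r"),
])
```

Here the two commands scheduled at time 40 end up in the same access unit, while the NewScene at time 0 forms its own.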

This specification defines an XML language to describe scenes which can be encoded with the LASeR format

defined throughout subclauses 6.5 to 6.8.37. The exact XML syntax for these elements and attributes is

described in the schemas provided as electronic attachments to this specification.

This specification also defines a binary format to efficiently represent 2D scene descriptions.

6.2 Relationship with SVG

6.2.1 Scene tree

The scene constructs on which the binary format defined in this specification is based are the elements

defined by the W3C in the SVG specification [W3C SVG11] [2]. Subclause 6.8 explicitly refers to the SVG or

SMIL elements and attributes which can be encoded using the binary format defined in this specification. A

LASeR scene is an SVG scene possibly with LASeR extensions. These extensions are also defined in this

subclause. This specification defines in subclause 6.6.2.3 a set of commands, called LASeR Commands,

which can be applied to a LASeR scene.

6.2.2 Fonts

LASeR supports the encoding of fonts. Fonts shall be encoded separately from the scene, e.g. using

ISO/IEC 14496-18, and sent as a media stream together with the scene stream. SVG elements related to font

description are not supported by LASeR.

NOTE 1 to encode SVG scenes with SVG fonts in LASeR, font information shall be extracted from the SVG scene,

encoded separately and sent as a media stream. ISO/IEC 14496-18 is one option to encode and transmit the font, and

more options may be specified in the future.

NOTE 2 when using LASeR to encode an SVG scene which includes SVG Fonts derived from OpenType fonts, a

better quality can be achieved by transmitting the original OpenType fonts.

NOTE 3 care should be taken when extracting font information from an SVG scene that the effective target of

references into the SVG scene, e.g. from scripts, is not changed. One possible way is to replace the extracted font

element with a suitable supported (possibly empty) element.

baseProfile="tiny">

d="M1306 412Q1200 412 1123 443T999 ."/>

d="M1250 -30Q1158 -30 1090 206Q1064 ."/>

d="M1011 892L665 144Q537 -129 469 ."/>

d="M802 -61Q520 -61 324 108Q116 ."/>

d="M770 -196Q770 -320 710 -382T528 ."/>

font-size="60" fill="black" stroke="none">

stroke="#888888"/>

AyÖ@ç

Example 1 — SVG scene with embedded font information

baseProfile="tiny">

this was a font

font-size="60" fill="black" stroke="none">

stroke="#888888"/>

AyÖ@ç

Example 2 — LASeR/SAF equivalent of Example 1

(the remainder of this subclause is informative)

Differences between example 1 and 2 are:

⎯ the SVG scene has been wrapped in a NewScene update, then in a SAF layer.

⎯ the font description is removed from the SVG scene, encoded with ISO/IEC 14496-18 and placed in

a SAF mediaUnit. The attributes streamType=”12” and objectTypeIndication=”6” in the SAF

mediaHeader with streamID “font” identify the content of the SAF stream.

⎯ the SAF mediaHeader and SAF mediaUnit are connected through the streamID “font”, which is

encoded as a number, and is strictly local to SAF.

⎯ connection between font-family=”TestComic” and the font encoded in the SAF mediaUnit happens

through the font name which is part of the OpenType encoding.

6.3 Timing Model

There are Scene Times, Wallclock Times, SMPTE timecodes, Media Times, and Encoded Scene Times.

Wallclock times and SMPTE timecodes are not affected by the following discussion.

Logically, a LASeR scene at any instant could be represented by an XML document, which appears like an

SVG document:

...

...

Times within this logical XML document are uniformly expressed in scene times. Scene times have a zero

origin and the timescale is defined in SVG.

Logically XML fragments are sent in access units which have Media Time timestamps (MT). These may not

have a known origin, and are expressed on a timescale declared at the transport layer. Note that the

equations below do not show the correction for timescale units, for simplicity.

The XML fragment containing the "svg" element in this example is sent in an access unit which is a

NewScene. The media timestamp MT(ns) of that access unit is arbitrary, but the defined SceneTime of it is

zero; ST(ns) = 0.

The XML fragment which supplies the construct "r" is sent in a later access unit with media timestamp

MT(r). The defined scene time of that access unit is ST(r) = MT(r) - MT(ns).

Scene times within that access unit ("begin" in this example) are encoded for transmission relative to the scene time of the access unit. In this example, the "begin" time X is transmitted as the Encoded Scene Time X - ST(r). For LASeR commands inside a script element, ST(r) is the scene time at which the script is activated.

A RefreshScene command has an arbitrary media time, as usual, but contains within the access unit the defined SceneTime for that media time. This enables terminals which "tune in" after the NewScene was sent, or which for any other reason did not receive the NewScene, to nonetheless establish Scene Times. The encoder could calculate the value of that scene time by comparing the media timestamp of the RefreshScene, MT(rs), with the media timestamp of the preceding NewScene, MT(ns), and sending MT(rs) - MT(ns).
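As a worked illustration of the relations above, the following Python sketch computes ST(r), the Encoded Scene Time and the scene time carried by a RefreshScene. The function names and the numeric timestamps are assumptions made for this example, and, like the equations in the text, the sketch assumes all values are already expressed in the same timescale units.

```python
def scene_time(mt_access_unit, mt_new_scene):
    """ST(r) = MT(r) - MT(ns), with both timestamps on the same media time line."""
    return mt_access_unit - mt_new_scene


def encode_scene_time(x, st_access_unit):
    """Encoded Scene Time for a scene time X carried in an access unit: X - ST(r)."""
    return x - st_access_unit


def decode_scene_time(encoded, st_access_unit):
    """Inverse of encode_scene_time, as a receiving terminal would apply it."""
    return encoded + st_access_unit


mt_ns = 90_000                             # arbitrary media timestamp of the NewScene
mt_r = 93_000                              # media timestamp of a later access unit
st_r = scene_time(mt_r, mt_ns)             # scene time of that access unit
encoded_begin = encode_scene_time(5_000, st_r)
mt_rs = 96_000                             # media timestamp of a RefreshScene
refresh_scene_time = scene_time(mt_rs, mt_ns)  # value the encoder could send
```

A terminal that receives only the RefreshScene can use the transmitted `refresh_scene_time` in place of a computation that needs MT(ns).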

When a scene segment starts with a NewScene, the scene time is reset to 0. In such a scene segment, the

scene time of a LASeR access unit is defined as the difference between the media time of that access unit

and the media time of the closest previous NewScene.

When a scene segment does not start with a NewScene, the scene time is not reset to 0. Let T_s0 be the scene time within the initial scene segment upon reception of the first access unit of that new scene segment. In such a scene segment, the scene time of a LASeR access unit is defined as the difference between the media time of that access unit and the media time of the first access unit of that scene segment, incremented by T_s0. Note: the determination of T_s0 will vary if there is any variation in delivery times between terminals.

Within a scene segment (access unit x follows the NewScene):

sceneTime(x) = mediaTime(x) – mediaTime(NewScene)

With more scene segments (x in the first scene segment, which starts with a NewScene; y and z in the second scene segment, y being its first access unit):

sceneTime(y) = T_s0

sceneTime(z) = T_s0 + mediaTime(z) – mediaTime(y)

Figure 2 — Scene time and scene segments
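The two cases shown in Figure 2 can be expressed with a single helper, sketched below in Python. The function name, its parameters and the sample timestamps are assumptions for illustration: `mt_reference` stands for the media time of the closest previous NewScene in the first case, and for the media time of the first access unit of the segment in the second case, where `t_s0` carries the scene time reached in the initial segment.

```python
def segment_scene_time(mt_access_unit, mt_reference, t_s0=0):
    """Scene time of an access unit inside a scene segment.

    Segment starting with a NewScene: t_s0 stays 0 and mt_reference is the
    media time of the closest previous NewScene.
    Segment not starting with a NewScene: mt_reference is the media time of
    the first access unit of the segment, and t_s0 is the scene time of the
    initial segment when that first access unit was received.
    """
    return t_s0 + (mt_access_unit - mt_reference)


# First scene segment: x follows a NewScene.
mt_new_scene, mt_x = 1_000, 1_400
st_x = segment_scene_time(mt_x, mt_new_scene)

# Second scene segment without a NewScene: y is its first access unit.
t_s0 = 900                    # illustrative value; it depends on delivery time
mt_y, mt_z = 50_000, 50_250   # media times may restart from any origin
st_y = segment_scene_time(mt_y, mt_y, t_s0)
st_z = segment_scene_time(mt_z, mt_y, t_s0)
```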

Time values are encoded in ticks. The number of ticks per second for time values relating to the scene time line is defined by the timeResolution attribute of the LASeRHeader. The attributes “begin” and “end” are encoded as an offset from the scene time of the current access unit. The attributes “clipBegin” and “clipEnd”, which hold times in a media time line of another stream, are encoded with a predefined resolution of 1000 ticks per second.
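A minimal Python sketch of this tick conversion follows. The helper names, the use of rounding to the nearest tick and the sample timeResolution value of 600 are assumptions for illustration; the actual field widths and rounding behaviour are governed by the binary syntax, which this sketch does not model.

```python
CLIP_TICKS_PER_SECOND = 1000  # predefined resolution for clipBegin and clipEnd


def seconds_to_ticks(seconds, ticks_per_second):
    """Convert a duration in seconds to whole ticks (rounding is an assumption)."""
    return round(seconds * ticks_per_second)


def encode_begin_or_end(value_seconds, au_scene_time_seconds, time_resolution):
    """Encode "begin" or "end" as an offset from the current access unit's scene time."""
    return seconds_to_ticks(value_seconds - au_scene_time_seconds, time_resolution)


def encode_clip_time(clip_seconds):
    """Encode "clipBegin" or "clipEnd" on the media time line of another stream."""
    return seconds_to_ticks(clip_seconds, CLIP_TICKS_PER_SECOND)


# An access unit at scene time 3 s carries begin = 5 s, with timeResolution = 600.
begin_ticks = encode_begin_or_end(5.0, 3.0, 600)
clip_begin_ticks = encode_clip_time(2.5)
```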

6.4 Execution Model

An application which shows a presentation comprising a LASeR stream in a way compliant with this

specification is called a LASeR Engine.

The playback algorithm of a compliant LASeR Engine shall produce the same result as the algorithm

described below with the following high-level steps for each execution cycle:

1. Compute the new scene time Ts (beginning of the execution cycle).

2. Decode any LASeR AU with a scene time below or equal to Ts that has not yet been presented in an earlier execution cycle.

3. Execute the LASeR Commands from the LASeR AUs decoded at step 2.

4. Process all events (DOM, SVG or LASeR) according to the DOM event model [3] and resolve all begin and end times that can be resolved according to the SMIL Timing Model, in clause 10 of [SMIL2].

5. Determine the active media objects by inspecting begin and end times.

6. For each active media object, present the media access unit with the normal play time equal to clipBegin + (Ts – begin time), clamped using clipEnd.

7. Render the audio and visual elements of the scene tree according to the SVG rendering model as described in Clause 3 of [W3C SVG11] (end of the execution cycle).
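Steps 2 and 6 of the execution cycle lend themselves to a small informative sketch. The class and function names are hypothetical, and the sketch assumes that "clamp using clipEnd" means clipEnd acts as an upper bound on the normal play time; decoding, event processing and rendering are not modeled.

```python
from dataclasses import dataclass


@dataclass
class AccessUnit:
    scene_time: float        # scene time of the LASeR AU, in seconds
    presented: bool = False  # True once handled in an earlier execution cycle


def select_access_units(pending, ts):
    """Step 2: AUs with scene time <= Ts that were not presented earlier."""
    return [au for au in pending if au.scene_time <= ts and not au.presented]


def normal_play_time(ts, begin, clip_begin, clip_end=None):
    """Step 6: clipBegin + (Ts - begin time), clamped using clipEnd.

    Assumption: clipEnd, when present, is an upper bound on the result.
    """
    npt = clip_begin + (ts - begin)
    if clip_end is not None:
        npt = min(npt, clip_end)
    return npt
```

With Ts = 5 s, a begin time of 2 s and clipBegin = 1 s, the media access unit to present has a normal play time of 4 s; a clipEnd of 3.5 s would hold it at 3.5 s.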


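[Figure 3: block diagram of the LASeR engine. Labels recoverable from the figure: LASeR stream, LASeR decoder, decoded access units, scene tree manager, scene tree, renderer, rendered scene. One part of the chain is marked “Normative in LASeR” and the other “Normative in SVG”.]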

Figure 3 — LASeR engine components and normative parts

6.5 Supported Events

A LASeR engine supports the event model as specified in the DOM Level 2 Events specification [W3C DOM],
with extensions compatible with the (informative) DOM Level 3 specification [3] and SVG Tiny 1.2 [2]. These
extensions are: the definition of a namespace associated with each event; the naming of the events and of the
animation events; and the notion of cancellability of an event.

The list of supported events with their properties is given in Table 1.

Table 1 — List of supported events

| Event name | Namespace | Description | Bubble | Canc. |
|---|---|---|---|---|
| “focusin” (or deprecated “DOMFocusIn”) | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | No |
| “focusout” (or deprecated “DOMFocusOut”) | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | No |
| “activate” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | Yes |
| “click” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | Yes |
| “mousedown” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | Yes |
| “mouseup” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | Yes |
| “mouseover” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | Yes |
| “mouseout” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | Yes |
| “mousemove” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | No |
| “load” (or deprecated “SVGLoad”) | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | No | No |
| “resize” (or deprecated “SVGResize”) | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | No |
| “scroll” (or deprecated “SVGScroll”) | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | No |
| “zoom” (or deprecated “SVGZoom”) | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | No |
| “beginEvent” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | ??? |
| “endEvent” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | ??? |
| “repeatEvent” | http://www.w3.org/2001/xml-events | As defined in subclause 16.2 of [W3C SVG11]. | Yes | ??? |
| “keyup” | http://www.w3.org/2001/xml-events | reserved for future use | No | No |
| “keydown” | http://www.w3.org/2001/xml-events | reserved for future use | No | No |
| “textInput” | http://www.w3.org/2001/xml-events | reserved for future use | No | No |
| “accessKey(keyCode)” | urn:mpeg:mpeg4:laser:2005 | The key keyCode has been pressed, as defined in subclause 6.4 of [SMIL2]. | No | No |
| “longAccessKey(keyCode)” | urn:mpeg:mpeg4:laser:2005 | Similar to accessKey, except that the event is only triggered if the key has been pressed for a longer time, the definition of “longer” being left to the appreciation of the browser implementation. | No | No |
| “pause” | urn:mpeg:mpeg4:laser:2005 | Freezes the clock of the timed object it is sent to, and has no effect on non-timed objects. | No | No |
| “resume” | urn:mpeg:mpeg4:laser:2005 | Restarts the clock of the timed object it is sent to, and has no effect on non-timed objects. | No | No |

The value of keyCode in Table 1 is defined in Table 2.

Table 2 — Defined key codes

| Key Name | Key Code | Comment |
|---|---|---|
| KEY_UP | 0 | |
| KEY_DOWN | 1 | |
| KEY_LEFT | 2 | |
| KEY_RIGHT | 3 | |
| KEY_ENTER | 4 | also called FIRE sometimes |
| NO_KEY | 5 | special value disabling the wait for a key |
| ANY_KEY | 6 | matches any key |
| SOFT_KEY_1 | 7 | soft key n°1 (usually below the screen to the left) |
| SOFT_KEY_2 | 8 | soft key n°2 (usually below the screen to the right) |
| KEY_POUND | 35 | # |
| KEY_STAR | 42 | * |
| KEY_0 | 48 | |
| KEY_1 | 49 | |
| KEY_2 | 50 | |
| KEY_3 | 51 | |
| KEY_4 | 52 | |
| KEY_5 | 53 | |
| KEY_6 | 54 | |
| KEY_7 | 55 | |
| KEY_8 | 56 | |
| KEY_9 | 57 | |
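As an informative illustration, the key codes of Table 2 can be held in an enumeration. The class name KeyCode and the helper key_code_for_char are hypothetical. The helper relies on the observation that the codes for #, * and the digits coincide with the ASCII codes of those characters; the table itself does not state this as a rule.

```python
from enum import IntEnum


class KeyCode(IntEnum):
    """Key codes copied from Table 2."""
    KEY_UP = 0
    KEY_DOWN = 1
    KEY_LEFT = 2
    KEY_RIGHT = 3
    KEY_ENTER = 4
    NO_KEY = 5
    ANY_KEY = 6
    SOFT_KEY_1 = 7
    SOFT_KEY_2 = 8
    KEY_POUND = 35
    KEY_STAR = 42
    KEY_0 = 48
    KEY_1 = 49
    KEY_2 = 50
    KEY_3 = 51
    KEY_4 = 52
    KEY_5 = 53
    KEY_6 = 54
    KEY_7 = 55
    KEY_8 = 56
    KEY_9 = 57


def key_code_for_char(ch):
    """Map '#', '*' or a decimal digit to its Table 2 key code."""
    if len(ch) != 1 or ch not in "#*0123456789":
        raise ValueError(f"no Table 2 key code for {ch!r}")
    return KeyCode(ord(ch))
```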

6.6 Encoder Configuration

6.6.1 Overview

The binary encoding is defined using SDL in Clause 12.

The next subclause describes the syntax for signalling the encoding configuration.

6.6.2 LASeR headers

6.6.2.1 Semantics

The LASeRHeader specifies the parsing and decoding configuration of a LASeR scene segment.


The LASeRUnitHeader specifies parameters that may change at the beginning of each LASeR access unit.

LASeR defines a way to partition scenes into incremental scene segments, allowing services to be built from
several scene segments. The first scene segment contains a NewScene update. The other scene segments
have the append bit set, do not start with a NewScene update, and are designed as additions to the
first scene segment.

LASeR defines an interface to persistent storage. The LASeR engine shall cache permanent streams and

selected scene information on a best effort basis. The principles behind this caching closely follow the state

caching mechanism in HTTP, commonly called cookies [RFC 2695]. Within the LASeR streams, there are two

commands that may be used. One command saves, associated with a string name called groupID, the values

of some attributes of some nodes. This storage is scoped by the domain-name and path computed from the

source using the fields in the LASeR header. The other command restores the attributes (if any) previously

saved under the given groupID, as scoped by the domain-name and path.

The stored values and permanent streams are scoped by the domain-name and path. That is, it is possible
for both “.acme.com” and “.widget.com” to store data under the same groupID and for that storage to be
distinct. Similarly, it is possible for “/user/laser-expert/” and “/user/laser-novice/” at “.acme.com” to save
state under the same groupID, and for those saved states to be distinct.

It is possible that there is state saved under the same groupID for more than one domain-name/path pair, and
that more than one of these match the request-URI. For example, if the request URI has domain “x.y.z.com”
and path “/demos/acme”, and there is state saved under the same groupID for domains “.y.z.com” and
“.z.com”, then both sets of state apply. Under these circumstances, the saved states are ordered primarily by
preferring more specific domains (with more components) over less specific ones and then, for states with the
same domain, by preferring more specific paths (with more components) over less specific ones. Once the
saved states have been so ordered, all the saved states are restored, starting with the least specific (least
preferred) and ending with the most specific (most preferred).

For example, if there is saved state under the same groupID for

1) domain-name “.acme.com”, path “/user/laser-expert/”;

2) domain-name “.acme.com”, path “/user/laser-expert/demo”;

3) domain-name “www.acme.com”, path “/user/laser-expert/”;

4) domain-name “www.acme.com”, path “/user/laser-expert/demo”

Then state (1) is restored, then state (2), (3) and (4), in that order. It is possible that these saved states do not

overwrite each other (different attributes or nodes), partially overwrite each other (some attributes in common)

or completely overwrite each other.
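The ordering rule can be illustrated with a minimal sketch. All names are hypothetical. The matching test (suffix match for domains starting with a dot, exact match otherwise, and prefix match for paths) is an assumption modeled on cookie-style scoping and is not taken from this clause; only the ordering by number of domain components, then number of path components, follows the text above.

```python
from dataclasses import dataclass, field


@dataclass
class SavedState:
    domain: str
    path: str
    attributes: dict = field(default_factory=dict)


def components(value, separator):
    """Non-empty components of a domain name or path."""
    return [part for part in value.split(separator) if part]


def specificity(state):
    """Sort key: domain components first, then path components."""
    return (len(components(state.domain, ".")), len(components(state.path, "/")))


def matches(state, host, path):
    """Assumed matching rule (not specified in this clause)."""
    if state.domain.startswith("."):
        domain_ok = host.endswith(state.domain)
    else:
        domain_ok = host == state.domain
    return domain_ok and path.startswith(state.path)


def restore(states, host, path):
    """Apply matching states from least to most specific; later ones overwrite."""
    restored = {}
    applicable = [s for s in states if matches(s, host, path)]
    for state in sorted(applicable, key=specificity):
        restored.update(state.attributes)
    return restored
```

Applied to the four saved states of the example, with a request for “www.acme.com” and path “/user/laser-expert/demo”, the sort key yields the order (1), (2), (3), (4), so an attribute saved in all four ends up with the value from state (4).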

6.6.2.2 Attributes of LASeRHeader

• profile: this value signals the profile of LASeR that the scene segment starting with this LASeRHeader

adheres to.

• level: this value signals the level of LASeR which this scene segment starting with this LASeRHeader

adheres to.

• resolution: this attribute is a number between -8 and 7 defining the coordinate resolution as 2^(-resolution).
When reading a coordinate, the encoded value shall be multiplied by the coordinate resolution to obtain
the coordinate value expressed in pixels.

Example: when resolution is 0, 4 or -2, the coordinate resolution is 1, 0.0625 or 4 respectively, and an encoded
coordinate value of 100 yields 100, 6.25 or 400 pixels respectively.

• timeResolution: this attribute is a 16-bit positive integer defining a resolution for time values (e.g. clock
values). When reading a time value from the bit stream, the encoded value shall be divided by this
number to obtain a time value in seconds. The default value for timeResolution is 1000.


• coordBits: this attribute defines the number of bits used for encoding coordinates. The default value is 12.

• scaleBits_minus_coordBits: this attribute defines the number of bits above coordBits used for encoding

scaling factors. The default value is 0.

• colorComponentBits: this attribute defines the number of bits used for encoding color components.

• append: this Boolean attribute defines whether the scene segment starting with this LASeRHeader is an

addition to the scene already present in the LASeR engine, or if it defines a new scene altogether. The

combination of append and the NewScene command is defined in Table 3.

Table 3 — Behavior of combinations of append and NewScene

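| Append value | Type of the first LASeR Command following the LASeRHeader | Behavior |
|---|---|---|
| true | NewScene | the new scene segment defines a new scene, the append value is ignored |
| false | NewScene | the new scene segment defines a new scene, the append value is ignored |
| true | other command | the previous scene is kept |
| false | other command | the behavior is undefined |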

• useFullRequestHost: this Boolean attribute indicates whether the full domain name of the request-host is

used (1) or the first component of the domain name is elided (0). For example, if the source material

came from “www.laser.com”, then this differentiates between associating the “service” with

“www.laser.com” and “.laser.com”. (Note the definition of local names in the RFC, and the possibility to
associate the “service” with locally loaded files, and that the domain name may be either
“.local” or “.local” in that case.)
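As an informative illustration of three of the LASeRHeader attributes described above (resolution, append and useFullRequestHost), the following sketch shows the corresponding arithmetic and decisions. All function names and the returned strings are hypothetical; reading the attributes from the bitstream is not modeled, and the handling of a host name without any dot is an assumption.

```python
def coordinate_resolution(resolution):
    """Coordinate resolution 2^(-resolution), for resolution in [-8, 7]."""
    if not -8 <= resolution <= 7:
        raise ValueError("resolution must be between -8 and 7")
    return 2.0 ** (-resolution)


def decode_coordinate(encoded, resolution):
    """Multiply the encoded value by the coordinate resolution (result in pixels)."""
    return encoded * coordinate_resolution(resolution)


def segment_behavior(append, first_command_is_new_scene):
    """Table 3: combination of append and the first LASeR Command."""
    if first_command_is_new_scene:
        return "new scene"  # the append value is ignored
    return "previous scene kept" if append else "undefined"


def scoping_domain(request_host, use_full_request_host):
    """Full request-host, or the host with its first component elided."""
    if use_full_request_host:
        return request_host
    _, dot, rest = request_host.partition(".")
    # Assumption: a host without any dot is returned unchanged.
    return "." + rest if dot else request_host
```

The coordinate values reproduce the example given for the resolution attribute: an encoded value of 100 decodes to 100, 6.25 or 400 pixels for a resolution of 0, 4 or -2.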

...
