ASTM E2164-16

Standard Test Method for Directional Difference Test

SIGNIFICANCE AND USE

5.1 The directional difference test determines with a given confidence level whether or not there is a perceivable difference in the intensity of a specified attribute between two samples, for example, when a change is made in an ingredient, a process, packaging, handling, or storage.

5.2 The directional difference test is inappropriate when evaluating products with sensory characteristics that are not easily specified, not commonly understood, or not known in advance. Other difference test methods such as the same-different test should be used.

5.3 A result of no significant difference in a specific attribute does not ensure that there are no differences between the two samples in other attributes or characteristics, nor does it indicate that the attribute is the same for both samples. It may merely indicate that the degree of difference is too low to be detected with the sensitivity (α, β, and Pmax) chosen for the test.

5.3.1 The method itself does not change whether the purpose of the test is to determine that two samples are perceivably different versus that the samples are not perceivably different. Only the selected values of Pmax, α, and β change. If the objective of the test is to determine if the two samples are perceivably different, then the value selected for α is typically smaller than the value selected for β. If the objective is to determine if no perceivable difference exists, then the value selected for β is typically smaller than the value selected for α and the value of Pmax needs to be stated explicitly.
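Section 8.2 of the standard, reproduced in the sample below, turns this rule into commonly used starting values. When testing for a difference, use α = 0.05 and β = 0.20. When testing for similarity, use α = 0.20 and β = 0.05. Pmax falls into three ranges. A minimal Python sketch that encodes those guidelines follows. The names `RiskLevels`, `default_risks`, and `pmax_range` and the "difference"/"similarity" labels are hypothetical. The values are only starting points, which 8.2.2 and 8.2.3 allow to be adjusted case by case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskLevels:
    alpha: float  # alpha-risk: type I error (significance level)
    beta: float   # beta-risk: type II error


def default_risks(objective: str) -> RiskLevels:
    """Commonly used starting values from 8.2.2 and 8.2.3 (hypothetical helper)."""
    if objective == "difference":
        # Keep alpha small: low chance of declaring a difference that is not there.
        return RiskLevels(alpha=0.05, beta=0.20)
    if objective == "similarity":
        # Keep beta small: low chance of missing a difference that is there.
        return RiskLevels(alpha=0.20, beta=0.05)
    raise ValueError(f"unknown objective: {objective!r}")


def pmax_range(pmax: float) -> str:
    """Classify Pmax (as a proportion, e.g. 0.60) into the ranges of 8.2.4."""
    # Assumption of this sketch: Pmax at or below chance (0.5) is not meaningful
    # for a two-alternative forced choice.
    if not 0.5 < pmax <= 1.0:
        raise ValueError("Pmax must be above 0.5 and at most 1.0")
    if pmax < 0.55:
        return "small"
    if pmax <= 0.65:
        return "medium-sized"
    return "large"
```

Keeping the two risks in one small immutable record makes it hard to swap α and β by accident when the objective changes from difference to similarity.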

SCOPE

1.1 This test method covers a procedure for comparing two products using a two-alternative forced-choice task.

1.2 This method is sometimes referred to as a paired comparison test or as a 2-AFC (alternative forced choice) test.

1.3 A directional difference test determines whether a difference exists in the perceived intensity of a specified sensory attribute between two samples.

1.4 Directional difference testing is limited in its application to a specified sensory attribute and does not directly determine the magnitude of the difference for that specific attribute. Assessors must be able to recognize and understand the specified attribute. A lack of difference in the specified attribute does not imply that no overall difference exists.

1.5 This test method does not address preference.

1.6 A directional difference test is a simple task for assessors, and is used when sensory fatigue or carryover is a concern. The directional difference test does not exhibit the same level of fatigue, carryover, or adaptation as multiple sample tests such as triangle or duo-trio tests. For detail on comparisons among the various difference tests, see Ennis (1), MacRae (2), and O'Mahony and Odbert (3).

1.7 The procedure of the test described in this document consists of presenting a single pair of samples to the assessors.

1.8 This standard does not purport to address all of the safety concerns, if any, associated with its use. It is the responsibility of the user of this standard to establish appropriate safety and health practices and determine the applicability of regulatory limitations prior to use.
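Presenting the pair (1.7) also requires a balanced serving order and neutral sample codes. Sections 9.1 and 9.2 of the standard call for balancing the AB and BA sequences across assessors. They also call for coding the vessels with 3-digit numbers chosen at random for each test. The sketch below builds such a worksheet. It assumes an even number of assessors and a distinct code for every vessel. The function name and worksheet fields are hypothetical, not part of E2164.

```python
from __future__ import annotations

import random


def serving_worksheet(n_assessors: int, seed: int | None = None) -> list[dict]:
    """Balanced AB/BA serving-order worksheet with random 3-digit codes.

    Hypothetical helper: assumes an even panel size and a distinct code
    for every vessel served in the test.
    """
    if n_assessors <= 0 or n_assessors % 2 != 0:
        raise ValueError("use a positive, even number of assessors")
    if 2 * n_assessors > 900:
        raise ValueError("not enough distinct 3-digit codes (100-999)")
    rng = random.Random(seed)
    # Equal numbers of each sequence, then shuffle who receives which.
    orders = ["AB", "BA"] * (n_assessors // 2)
    rng.shuffle(orders)
    # Distinct 3-digit codes drawn at random for this test.
    codes = rng.sample(range(100, 1000), 2 * n_assessors)
    worksheet = []
    for i, order in enumerate(orders):
        code_a, code_b = codes[2 * i], codes[2 * i + 1]
        first, second = (code_a, code_b) if order == "AB" else (code_b, code_a)
        worksheet.append({
            "assessor": i + 1,
            "order": order,
            "code_A": code_a,  # sample identification kept on the worksheet
            "code_B": code_b,
            "serve_first": first,
            "serve_second": second,
        })
    return worksheet
```

Shuffling a list that holds equal numbers of "AB" and "BA" keeps the design exactly balanced. It still randomizes which assessor receives which sequence. Passing a `seed` makes the worksheet reproducible for later checking.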

General Information

- Status: Published

- Publication Date: 30-Sep-2016

- Technical Committee: E18 - Sensory Evaluation

- Drafting Committee: E18.04 - Test Methods


Overview

ASTM E2164-16: Standard Test Method for Directional Difference Test is an internationally recognized procedure developed by ASTM International for sensory evaluation. This standard focuses on determining whether a perceivable difference exists in the intensity of a specific sensory attribute between two samples. It is frequently applied when a product undergoes a change in ingredients, processes, packaging, handling, or storage and an objective assessment of sensory differences is needed.

The method employs a two-alternative forced-choice (2-AFC) approach, also known as a paired comparison test, to evaluate a specified sensory attribute. ASTM E2164-16 is intended for situations in which the attribute in question is clearly defined and understood by the assessors. When the relevant characteristics are not easily specified, not commonly understood, or not known in advance, another difference test, such as the same-different test, should be used instead.

Key Topics

- Directional Difference Test: Utilizes a forced-choice approach to assess if one sample exhibits a higher or lower intensity of a designated sensory attribute compared to another.

- Specified Attribute: Testing is limited to attributes that are easily identifiable (e.g., sweetness, crispness, saltiness). Assessors must understand the attribute in question.

- Assessment Objective: Ideal for determining sensory changes due to ingredient modification, new processing techniques, or changes in storage conditions.

- Test Structure: Assessors are presented with a pair of coded samples and asked to identify which sample displays a greater intensity of the specific attribute.

- Statistical Confidence: Conclusions are drawn at a pre-specified significance level (α). The number of assessors is chosen from the selected values of α, β, and Pmax. Significance is determined from the number of common responses, either by direct calculation or by reference to a statistical table.

- Simplicity: The task is simple for assessors. It causes less fatigue, carryover, and adaptation than multiple-sample tests such as the triangle or duo-trio tests, which makes it suitable when these effects are a concern.
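The significance check can be illustrated with an exact binomial test. This assumes that, under the null hypothesis of no perceivable difference, each assessor chooses either sample with probability 1/2, as in any two-alternative forced choice. This is a sketch, not the standard's own procedure. E2164 directs users to its Tables 3 and 4 or to direct calculation, and the function names here are hypothetical.

```python
from __future__ import annotations

from math import comb


def p_value(common: int, n: int, one_sided: bool = True) -> float:
    """Exact binomial p-value for `common` responses out of `n` assessors.

    Null hypothesis: no perceivable difference, so each assessor picks
    either sample with probability 1/2.
    """
    if not 0 <= common <= n:
        raise ValueError("common must lie between 0 and n")
    # P(X >= common) for X ~ Binomial(n, 1/2), using exact integer counts.
    tail = sum(comb(n, i) for i in range(common, n + 1)) / 2**n
    # Two-sided: common responses are the larger of the two counts, and the
    # null distribution is symmetric, so the upper tail is doubled (capped at 1).
    return tail if one_sided else min(1.0, 2 * tail)


def min_common_responses(n: int, alpha: float, one_sided: bool = True) -> int | None:
    """Smallest number of common responses that is significant at `alpha`.

    Returns None when even unanimous agreement would not reach significance.
    """
    for k in range(n + 1):
        if p_value(k, n, one_sided) <= alpha:
            return k
    return None
```

For example, `min_common_responses(20, 0.05)` returns 15. With 20 assessors in a one-sided test at α = 0.05, at least 15 must choose the expected sample. Values computed this way should be checked against Tables 3 and 4 of the standard before reporting.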

Applications

ASTM E2164-16 is widely used across food and beverage, consumer goods, and materials industries. Practical applications include:

- Product Reformulation: Evaluating whether changes in recipe or formulation result in a perceivable difference in a specified attribute (e.g., whether a reformulated cereal is crisper, or whether a lower-salt soup is perceived as less salty).

- Quality Control: Monitoring production batches to ensure consistency in sensory attributes such as flavor, aroma, or texture.

- Process Modifications: Validating the sensory impact of new manufacturing, packaging, or storage processes.

- Supplier Comparisons: Objectively comparing raw materials or ingredient systems for sensory performance.

- Regulatory and Specification Compliance: Documenting that products meet consumer or regulatory expectations regarding specific sensory properties.

Laboratories and organizations that conduct routine sensory testing favor this standard for its clarity, robust statistical basis, and compatibility with high-throughput testing environments.

Related Standards

To enhance or complement the use of ASTM E2164-16, consider the following related documents:

- ASTM E253: Terminology Relating to Sensory Evaluation of Materials and Products

- ASTM E456: Terminology Relating to Quality and Statistics

- ASTM E1871: Guide for Serving Protocol for Sensory Evaluation of Foods and Beverages

- ISO 5495: Sensory Analysis-Methodology-Paired Comparison

These standards offer essential terminology, additional test methods, and procedural guidance for comprehensive sensory evaluation and accurate statistical analysis.

Conclusion:

ASTM E2164-16 offers a standardized, statistically valid methodology for directional difference testing that enables product developers, quality managers, and sensory scientists to make informed decisions about sensory attribute differences between products. With its focus on specificity, clarity, and reliability, it is a foundational tool for sensory evaluation in diverse industries.


Frequently Asked Questions

ASTM E2164-16 is a standard published by ASTM International. Its full title is "Standard Test Method for Directional Difference Test". Its Scope and Significance and Use are reproduced at the top of this page.

ASTM E2164-16 is classified under the following ICS (International Classification for Standards) categories: 19.020 - Test conditions and procedures in general. The ICS classification helps identify the subject area and facilitates finding related standards.

ASTM E2164-16 is linked to the following standards: ASTM E2164-08 (the previous edition), ASTM E456-13a(2022)e1, ASTM E253-19, ASTM E253-18a, ASTM E253-18, ASTM E456-13A(2017)e3, ASTM E456-13A(2017)e1, ASTM E1871-17, ASTM E253-17, ASTM E253-16, ASTM E253-15b, ASTM E253-15a, ASTM E253-15, ASTM E456-13ae1, and ASTM E456-13ae3. Understanding these relationships helps ensure you are using the most current and applicable version of the standard.


Standards Content (Sample)

This international standard was developed in accordance with internationally recognized principles on standardization established in the Decision on Principles for the Development of International Standards, Guides and Recommendations issued by the World Trade Organization Technical Barriers to Trade (TBT) Committee.

Designation: E2164 − 16

Standard Test Method for Directional Difference Test

This standard is issued under the fixed designation E2164; the number immediately following the designation indicates the year of original adoption or, in the case of revision, the year of last revision. A number in parentheses indicates the year of last reapproval. A superscript epsilon (ε) indicates an editorial change since the last revision or reapproval.

1. Scope

1.1 This test method covers a procedure for comparing two products using a two-alternative forced-choice task.

1.2 This method is sometimes referred to as a paired comparison test or as a 2-AFC (alternative forced choice) test.

1.3 A directional difference test determines whether a difference exists in the perceived intensity of a specified sensory attribute between two samples.

1.4 Directional difference testing is limited in its application to a specified sensory attribute and does not directly determine the magnitude of the difference for that specific attribute. Assessors must be able to recognize and understand the specified attribute. A lack of difference in the specified attribute does not imply that no overall difference exists.

1.5 This test method does not address preference.

1.6 A directional difference test is a simple task for assessors, and is used when sensory fatigue or carryover is a concern. The directional difference test does not exhibit the same level of fatigue, carryover, or adaptation as multiple sample tests such as triangle or duo-trio tests. For detail on comparisons among the various difference tests, see Ennis (1), MacRae (2), and O’Mahony and Odbert (3).²

1.7 The procedure of the test described in this document consists of presenting a single pair of samples to the assessors.

1.8 This standard does not purport to address all of the safety concerns, if any, associated with its use. It is the responsibility of the user of this standard to establish appropriate safety and health practices and determine the applicability of regulatory limitations prior to use.

2. Referenced Documents

2.1 ASTM Standards:³

E253 Terminology Relating to Sensory Evaluation of Materials and Products

E456 Terminology Relating to Quality and Statistics

E1871 Guide for Serving Protocol for Sensory Evaluation of Foods and Beverages

2.2 ISO Standard:

ISO 5495 Sensory Analysis – Methodology – Paired Comparison

3. Terminology

3.1 For definition of terms relating to sensory analysis, see Terminology E253, and for terms relating to statistics, see Terminology E456.

3.2 Definitions of Terms Specific to This Standard:

3.2.1 α (alpha) risk: the probability of concluding that a perceptible difference exists when, in reality, one does not (also known as type I error or significance level).

3.2.2 β (beta) risk: the probability of concluding that no perceptible difference exists when, in reality, one does (also known as type II error).

3.2.3 one-sided test: a test in which the researcher has an a priori expectation concerning the direction of the difference. In this case, the alternative hypothesis will express that the perceived intensity of the specified sensory attribute is greater (that is, A > B) (or lower (that is, A < B)) for one product than the other.

3.2.4 two-sided test: a test in which the researcher does not have any a priori expectation concerning the direction of the difference. In this case, the alternative hypothesis will express that the perceived intensity of the specified sensory attribute is different from one product to the other (that is, A ≠ B).

¹ This test method is under the jurisdiction of ASTM Committee E18 on Sensory Evaluation and is the direct responsibility of Subcommittee E18.04 on Fundamentals of Sensory. Current edition approved Oct. 1, 2016. Published October 2016. Originally approved in 2001. Last previous edition approved in 2008 as E2164 – 08. DOI: 10.1520/E2164-16.

² The boldface numbers in parentheses refer to the list of references at the end of this standard.

³ For referenced ASTM standards, visit the ASTM website, www.astm.org, or contact ASTM Customer Service at service@astm.org. For Annual Book of ASTM Standards volume information, refer to the standard’s Document Summary page on the ASTM website.

Copyright © ASTM International, 100 Barr Harbor Drive, PO Box C700, West Conshohocken, PA 19428-2959. United States


3.2.5 common responses: for a one-sided test, the number of assessors selecting the sample expected to have a higher intensity of the specified sensory attribute. Common responses could also be defined in terms of lower intensity of the attribute if it is more relevant. For a two-sided test, the larger number of assessors selecting sample A or B.

3.2.6 Pmax: a test sensitivity parameter established prior to testing and used along with the selected values of α and β to determine the number of assessors needed in a study. Pmax is the proportion of common responses that the researcher wants the test to be able to detect with a probability of 1 − β. For example, if a researcher wants to be 90 % confident of detecting a 60:40 split in a directional difference test, then Pmax = 60 % and β = 0.10. Pmax is relative to a population of judges that has to be defined based on the characteristics of the panel used for the test. For instance, if the panel consists of trained assessors, Pmax will be representative of a population of trained assessors, but not of consumers.

3.2.7 Pc: the proportion of common responses that is calculated from the test data.

3.2.8 product: the material to be evaluated.

3.2.9 sample: the unit of product prepared, presented, and evaluated in the test.

3.2.10 sensitivity: a general term used to summarize the performance characteristics of the test. The sensitivity of the test is rigorously defined, in statistical terms, by the values selected for α, β, and Pmax.

4. Summary of Test Method

4.1 Clearly define the test objective in writing.

4.2 Choose the number of assessors based on the sensitivity desired for the test. The sensitivity of the test is, in part, a function of two competing risks: the risk of declaring a difference in the attribute when there is none (that is, α-risk) and the risk of not declaring a difference in the attribute when there is one (that is, β-risk). Acceptable values of α and β vary depending on the test objective. The values should be agreed upon by all parties affected by the results of the test.

4.3 In directional difference testing, assessors receive a pair of coded samples and are informed of the attribute to be evaluated. The assessors report which they believe to be higher or lower in intensity of the specified attribute, even if the selection is based only on a guess.

4.4 Results are tallied and significance determined by direct calculation or reference to a statistical table.

5. Significance and Use

5.1 The directional difference test determines with a given confidence level whether or not there is a perceivable difference in the intensity of a specified attribute between two samples, for example, when a change is made in an ingredient, a process, packaging, handling, or storage.

5.2 The directional difference test is inappropriate when evaluating products with sensory characteristics that are not easily specified, not commonly understood, or not known in advance. Other difference test methods such as the same-different test should be used.

5.3 A result of no significant difference in a specific attribute does not ensure that there are no differences between the two samples in other attributes or characteristics, nor does it indicate that the attribute is the same for both samples. It may merely indicate that the degree of difference is too low to be detected with the sensitivity (α, β, and Pmax) chosen for the test.

5.3.1 The method itself does not change whether the purpose of the test is to determine that two samples are perceivably different versus that the samples are not perceivably different. Only the selected values of Pmax, α, and β change. If the objective of the test is to determine if the two samples are perceivably different, then the value selected for α is typically smaller than the value selected for β. If the objective is to determine if no perceivable difference exists, then the value selected for β is typically smaller than the value selected for α and the value of Pmax needs to be stated explicitly.

6. Apparatus

6.1 Carry out the test under conditions that prevent contact between assessors until the evaluations have been completed, for example, booths that comply with MNL 60 (4).

6.2 Sample preparation and serving sizes should comply with Guide E1871, or see Herz and Cupchik (5) or Todrank et al (6).

7. Assessors

7.1 All assessors must be familiar with the mechanics of the directional difference test (format, task, and procedure of evaluation). For directional difference testing, assessors must be able to recognize and quantify the specified attribute.

7.2 The characteristics of the assessors used define the scope of the conclusions. Experience and familiarity with the product or the attribute may increase the sensitivity of an assessor and may therefore increase the likelihood of finding a significant difference. Monitoring the performance of assessors over time may be useful for selecting assessors with increased sensitivity. Consumers can be used, as long as they are familiar with the format of the directional difference test. If a sufficient number of employees are available for this test, they too can serve as assessors. If trained descriptive assessors are used, there should be sufficient numbers of them to meet the agreed-upon risks appropriate to the project. Mixing the types of assessors is not recommended, given the potential differences in sensitivity of each type of assessor.

7.3 The degree of training for directional difference testing should be addressed prior to test execution. Attribute-specific training may include a preliminary presentation of differing levels of the attribute, either shown external to the product or shown within the product, for example, as a solution or within a product formulation. If the test concerns the detection of a particular taint, consider the inclusion of samples during training that demonstrate its presence and absence. Such demonstration will increase the assessors’ acuity for the taint (see STP758 (7) for details). Allow adequate time between the exposure to the training samples and the actual test to avoid carryover or fatigue.
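Tallying the ballots (4.4) follows directly from the definitions of common responses (3.2.5) and Pc (3.2.7). The minimal sketch below assumes each ballot is recorded as "A" or "B", since the forced choice leaves no other valid answer. It also assumes that a one-sided test names the sample expected to be more intense. The function names are hypothetical.

```python
from __future__ import annotations

from collections import Counter
from typing import Iterable, Optional


def common_responses(choices: Iterable[str], expected: Optional[str] = None) -> tuple[int, int]:
    """Return (common responses, number of assessors) following 3.2.5.

    choices:  the sample each assessor picked as more intense, "A" or "B".
    expected: for a one-sided test, the sample expected to be more intense;
              None means a two-sided test, which takes the larger count.
    """
    counts = Counter(choices)
    invalid = set(counts) - {"A", "B"}
    if invalid:
        raise ValueError(f"forced-choice responses must be 'A' or 'B', got {invalid}")
    n = counts["A"] + counts["B"]
    if n == 0:
        raise ValueError("no responses")
    if expected is None:
        return max(counts["A"], counts["B"]), n
    if expected not in ("A", "B"):
        raise ValueError("expected must be 'A', 'B', or None")
    return counts[expected], n


def proportion_common(choices: Iterable[str], expected: Optional[str] = None) -> float:
    """Pc (3.2.7): proportion of common responses calculated from the data."""
    common, n = common_responses(choices, expected)
    return common / n
```

With 12 of 20 assessors choosing A, both `common_responses(votes, expected="A")` and the two-sided `common_responses(votes)` give 12 common responses, and Pc = 0.6.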


7.4 During the test sessions, avoid giving information about increasing the number of assessors increases the likelihood of

product identity, expected treatment effects, or individual detecting small differences. Thus, one should expect to use

performance until all testing is comple. larger numbers of assessors when trying to demonstrate that

samples are similar compared to when one is trying to show

8. Number of Assessors they are different.

8.1 Choose the number of assessors to yield the sensitivity

9. Procedure

called for by the test objectives. The sensitivity of the test is a

9.1 Prepare serving order worksheet and ballot in advance

function of four factors: α-risk, β-risk, maximum allowable

proportionofcommonresponses(P ),andwhetherthetestis of the test to ensure a balanced order of sample presentation of

max

the two samples, A and B. Balance the serving sequences AB

one-sided or two-sided.

and BA across all assessors. Serving order worksheets should

8.2 Prior to conducting the test, decide if the test is

also include complete sample identification information. See

one-sidedortwo-sidedandselectvaluesforα,β,andP .The

max

Appendix X1.

following can be considered as general guidelines:

9.2 It is critical to the validity of the test that assessors

8.2.1 One-sided versus two-sided: The test is one-sided if

only one direction of difference is critical to the findings. For cannot identify the samples from the way in which they are

presented. For example, in a test evaluating flavor differences,

example, the test is one-sided if the objective is to confirm that

the sample with more sugar is sweeter than the sample with one should avoid any subtle differences in temperature or

appearance caused by factors such as the time sequence of

less sugar. The test is two-sided if both possible directions of

preparation. It may be possible to mask color differences using

difference are important. For example, the test is two-sided if

light filters, subdued illumination or colored vessels. Code the

the objective of the test is to determine which of two samples

vessels containing the samples in a uniform manner using

is sweeter.

3-digit numbers chosen at random for each test. Prepare

8.2.2 When testing for a difference, for example, when the

samples out of sight and in an identical manner: same

researcherwantstotakeonlyasmallchanceofconcludingthat

apparatus, same vessels, same quantities of sample (see Guide

a difference exists when it does not, the most commonly used

E1871-91).

values for α-risk and β-risk are α = 0.05 and β = 0.20. These

values can be adjusted on a case-by-case basis to reflect the

9.3 Present each pair of samples simultaneously whenever


10. Analysis and Interpretation of Results

10.1 The procedure used to analyze the results of a directional difference test depends on the number of assessors.

10.1.1 If the number of assessors is equal to or greater than the value given in Table 1 (for a one-sided alternative) or Table 2 (for a two-sided alternative) for the chosen values of α, β, and Pmax, then use Table 3 to analyze the data obtained from a one-sided test and Table 4 to analyze the data from a two-sided

E2164 − 16

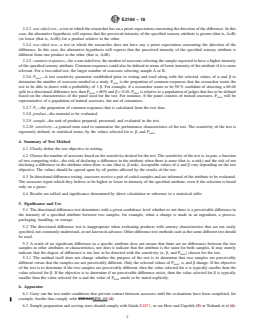

TABLE 1 Number of Assessors Needed for a Directional Difference Test One-Sided Alternative

NOTE 1—The values recorded in this table have been rounded to the nearest whole number evenly divisible by two to allow for equal presentation of

both pair combinations (AB and BA).

NOTE 2—Adapted from Meilgaard et al (8).

β

α 0.50 0.40 0.30 0.20 0.10 0.05 0.01 0.001

0.50 P =75 % 2 4 4 4 8 12 20 34

max

0.40 2 4 4 6 10 14 28 42

0.30 2 6 810142030 48

0.20 6 6 10 12 20 26 40 58

0.10 10 10 14 20 26 34 48 70

0.05 114 16 1824344258 82

0.01 22 28 34 40 50 60 80 108

0.001 38 44 52 62 72 84 108 140

0.50 P=70% 4 4 4 8121832 60

max

0.40 4 4 6 8 14 26 42 70

0.30 6 8 10 14 22 28 50 78

0.20 6 10 1220304060 94

0.10 14 20 22 28 40 54 80 114

0.05 18 24 30 38 54 68 94 132

0.01 36 42 52 64 80 96 130 174

0.001 62 72 82 96 118 136 176 228

0.50 P =65 % 4 4 4 8 18 32 62 102

max

0.40 4 6 8 14 30 42 76 120

0.30 8 10 1424405488 144

0.20 10 18 22 32 50 68 110 166

0.10 22 28 38 54 72 96 146 208

0.05 30 42 54 70 94 120 174 244

0.01 64 78 90 112 144 174 236 320

0.001 108 126 144 172 210 246 318 412

0.50 P =60 % 4 4 8 18 42 68 134 238

max

0.40 6 10 24 36 60 94 172 282

0.30 12 22 30 50 84 120 206 328

0.20 22 32 50 78 112 158 254 384

0.10 46 66 86 116 168 214 322 472

0.05 72 94 120 158 214 268 392 554

0.01 142 168 208 252 326 392 536 726

0.001 242 282 328 386 480 556 732 944

0.50 P =55 % 4 8 28 74 164 272 542 952

max

0.40 10 36 62 124 238 362 672 1124

0.30 30 72 118 200 334 480 810 1302

0.20 82 130 194 294 452 618 1006 1556

0.10 170 240 338 462 658 862 1310 1906

0.05 282 370 476 620 866 1092 1584 2238

0.01 550 666 820 1008 1302 1582 2170 2928

0.001 962 1126 1310 1552 1908 2248 2938 3812

test. If the number of common responses is equal to or greater than the number given in the table, conclude that a perceptible attribute difference exists between the samples. If the number of common responses is less than the number given in the table, conclude that the samples are similar in attribute intensity and that no more than Pmax of the population would perceive the difference at a confidence level equal to 1 − β. Again, the conclusions are based on the risks accepted when the sensitivity (that is, Pmax, α, and β) was selected in determining the number of assessors.

10.1.2 If the number of assessors is less than the value given in Table 1 or Table 2 for the chosen values of α, β, and Pmax and the researcher is primarily interested in testing for a difference, then use Table 3 to analyze the data obtained from a one-sided test or Table 4 to analyze the data obtained from a two-sided test. If the number of common responses is equal to or greater than the number given in the table, conclude that a perceptible attribute difference exists between the samples at the α-level of significance.

10.1.3 If the number of assessors is less than the value given in Table 1 or Table 2 for the chosen values of α, β, and Pmax and the researcher is primarily interested in testing for similarity, then a one-sided confidence interval is used to analyze the data obtained from the test. The calculations are as follows:

$$
\begin{aligned}
P_c &= c/n \\
S_c \ (\text{standard error of } P_c) &= \sqrt{P_c\,(1 - P_c)/n} \\
\text{confidence limit} &= P_c + z_\beta\, S_c
\end{aligned}
$$
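The 10.1.3 calculation can be sketched as follows. The text above does not define c, n, or z_β. This sketch assumes that c is the number of common responses and n the number of assessors. It also assumes that z_β is the standard normal quantile exceeded with probability β (about 1.645 for β = 0.05). Finally, it assumes the usual similarity decision rule: similarity is supported when the upper confidence limit falls below Pmax. The corresponding rule in the standard is not reproduced in this excerpt, and the function names are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def upper_confidence_limit(c, n, beta):
    """One-sided upper confidence limit for the proportion of common responses."""
    p_c = c / n                              # observed proportion, Pc = c/n
    s_c = sqrt(p_c * (1 - p_c) / n)          # standard error of Pc
    z_beta = NormalDist().inv_cdf(1 - beta)  # about 1.645 when beta = 0.05
    return p_c + z_beta * s_c

def similarity_supported(c, n, beta, pmax):
    """Assumed decision rule: similarity is supported if the limit is below Pmax."""
    return upper_confidence_limit(c, n, beta) < pmax

# Hypothetical panel: 24 common responses from 40 assessors, beta = 0.05.
limit = upper_confidence_limit(24, 40, 0.05)
print(round(limit, 3))
print(similarity_supported(24, 40, 0.05, pmax=0.75))
```

With these numbers the limit is roughly 0.727. Similarity would therefore be supported for Pmax = 75 % but not for Pmax = 70 %.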


...

This document is not an ASTM standard and is intended only to provide the user of an ASTM standard an indication of what changes have been made to the previous version. Because

it may not be technically possible to adequately depict all changes accurately, ASTM recommends that users consult prior editions as appropriate. In all cases only the current version

of the standard as published by ASTM is to be considered the official document.

Designation: E2164 − 08 E2164 − 16

Standard Test Method for

Directional Difference Test

This standard is issued under the fixed designation E2164; the number immediately following the designation indicates the year of

original adoption or, in the case of revision, the year of last revision. A number in parentheses indicates the year of last reapproval. A

superscript epsilon (´) indicates an editorial change since the last revision or reapproval.

1. Scope

1.1 This test method covers a procedure for comparing two products using a two-alternative forced-choice task.

1.2 This method is sometimes referred to as a paired comparison test or as a 2-AFC (alternative forced choice) test.

1.3 A directional difference test determines whether a difference exists in the perceived intensity of a specified sensory attribute

between two samples.

1.4 Directional difference testing is limited in its application to a specified sensory attribute and does not directly determine the

magnitude of the difference for that specific attribute. Assessors must be able to recognize and understand the specified attribute.

A lack of difference in the specified attribute does not imply that no overall difference exists.

1.5 This test method does not address preference.

1.6 A directional difference test is a simple task for assessors, and is used when sensory fatigue or carryover is a concern. The

directional difference test does not exhibit the same level of fatigue, carryover, or adaptation as multiple sample tests such as

triangle or duo-trio tests. For details on comparisons among the various difference tests, see Ennis (1), MacRae (2), and O’Mahony

and Odbert (3).

1.7 The procedure of the test described in this document consists of presenting a single pair of samples to the assessors.

1.8 This standard does not purport to address all of the safety concerns, if any, associated with its use. It is the responsibility

of the user of this standard to establish appropriate safety and health practices and determine the applicability of regulatory

limitations prior to use.

2. Referenced Documents

2.1 ASTM Standards:

E253 Terminology Relating to Sensory Evaluation of Materials and Products

E456 Terminology Relating to Quality and Statistics

E1871 Guide for Serving Protocol for Sensory Evaluation of Foods and Beverages

2.2 ISO Standard:

ISO 5495 Sensory Analysis—Methodology—Paired Comparison

3. Terminology

3.1 For definition of terms relating to sensory analysis, see Terminology E253, and for terms relating to statistics, see

Terminology E456.

3.2 Definitions of Terms Specific to This Standard:

3.2.1 α (alpha) risk—the probability of concluding that a perceptible difference exists when, in reality, one does not (also known

as type I error or significance level).

3.2.2 β (beta) risk—the probability of concluding that no perceptible difference exists when, in reality, one does (also known

as type II error).

This test method is under the jurisdiction of ASTM Committee E18 on Sensory Evaluation and is the direct responsibility of Subcommittee E18.04 on Fundamentals

of Sensory.

Current edition approved Oct. 1, 2016. Published October 2016. Originally approved in 2001. Last previous edition approved in 2008 as E2164 – 08. DOI: 10.1520/E2164-16.

The boldface numbers in parentheses refer to the list of references at the end of this standard.

For referenced ASTM standards, visit the ASTM website, www.astm.org, or contact ASTM Customer Service at service@astm.org. For Annual Book of ASTM Standards

volume information, refer to the standard’s Document Summary page on the ASTM website.

Copyright © ASTM International, 100 Barr Harbor Drive, PO Box C700, West Conshohocken, PA 19428-2959. United States


3.2.3 one-sided test—a test in which the researcher has an a priori expectation concerning the direction of the difference. In this case, the alternative hypothesis will express that the perceived intensity of the specified sensory attribute is greater (that is, A > B) (or lower (that is, A < B)).

3.2.4 two-sided test—a test in which the researcher does not have any a priori expectation concerning the direction of the

difference. In this case, the alternative hypothesis will express that the perceived intensity of the specified sensory attribute is

different from one product to the other (that is, A≠B).

3.2.5 common responses—for a one-sided test, the number of assessors selecting the sample expected to have a higher intensity

of the specified sensory attribute. Common responses could also be defined in terms of lower intensity of the attribute if it is more

relevant. For a two-sided test, the larger number of assessors selecting sample A or B.
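The counting rule in 3.2.5 can be sketched in Python as below. The function name `common_responses` and the sample labels "A" and "B" are illustrative assumptions. The ballots are assumed to be a simple list recording which sample each assessor chose.

```python
from collections import Counter

def common_responses(choices, expected=None):
    """Number of common responses as defined in 3.2.5.

    choices:  one entry per assessor, the label of the sample chosen
              (for example "A" or "B"; the labels are illustrative).
    expected: for a one-sided test, the label of the sample expected to be
              higher in the attribute; None for a two-sided test.
    """
    counts = Counter(choices)
    if expected is not None:
        # One-sided: count the assessors who chose the expected sample.
        return counts[expected]
    # Two-sided: the larger of the two selection counts.
    return max(counts.values(), default=0)

ballots = ["A"] * 13 + ["B"] * 7
print(common_responses(ballots, expected="B"))  # one-sided, B expected higher
print(common_responses(ballots))                # two-sided
```

The same ballots give 7 common responses when B is the expected sample in a one-sided test. They give 13 in a two-sided test.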

3.2.6 Pmax—A test sensitivity parameter established prior to testing and used along with the selected values of α and β to determine the number of assessors needed in a study. Pmax is the proportion of common responses that the researcher wants the test to be able to detect with a probability of 1 − β. For example, if a researcher wants to be 90 % confident of detecting a 60:40 split in a directional difference test, then Pmax = 60 % and β = 0.10. Pmax is relative to a population of judges that has to be defined based on the characteristics of the panel used for the test. For instance, if the panel consists of trained assessors, Pmax will be representative of a population of trained assessors, but not of consumers.
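The 60:40 example can be made concrete with a short calculation. The sketch below uses hypothetical helpers that are not part of this standard. It assumes that, under the null hypothesis, each assessor picks either sample with probability 1/2. It then finds the smallest significant number of common responses for a one-sided test by exact binomial calculation. Finally, it returns the probability of reaching that count when the true proportion of common responses is Pmax, which is an estimate of 1 − β.

```python
from math import comb

def upper_tail(n, x, p):
    """P(X >= x) for X ~ Binomial(n, p)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(x, n + 1))

def critical_count(n, alpha):
    """Smallest number of common responses significant at one-sided level alpha,
    assuming every assessor guesses with probability 1/2 under no difference."""
    for x in range(n + 1):
        if upper_tail(n, x, 0.5) <= alpha:
            return x
    return n + 1  # not even a unanimous panel would be significant

def detection_probability(n, pmax, alpha):
    """Estimate of 1 - beta: chance that a one-sided test with n assessors
    reaches significance when a proportion pmax gives the common response."""
    return upper_tail(n, critical_count(n, alpha), pmax)

# The 60:40 example: how often would 30 or 100 assessors detect it at alpha = 0.05?
for n in (30, 100):
    print(n, round(detection_probability(n, 0.60, 0.05), 3))
```

Table 1 is adapted from Meilgaard et al. (8), and its construction is not described here. Exact binomial values computed this way therefore need not coincide with the table entries and are best treated as a cross-check.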

3.2.7 Pc—the proportion of common responses that is calculated from the test data.

3.2.8 product—the material to be evaluated.

3.2.9 sample—the unit of product prepared, presented, and evaluated in the test.

3.2.10 sensitivity—a general term used to summarize the performance characteristics of the test. The sensitivity of the test is

rigorously defined, in statistical terms, by the values selected for α, β, and Pmax.

4. Summary of Test Method

4.1 Clearly define the test objective in writing.

4.2 Choose the number of assessors based on the sensitivity desired for the test. The sensitivity of the test is, in part, a function

of two competing risks—the risk of declaring a difference in the attribute when there is none (that is, α-risk) and the risk of not

declaring a difference in the attribute when there is one (that is, β-risk). Acceptable values of α and β vary depending on the test

objective. The values should be agreed upon by all parties affected by the results of the test.

4.3 In directional difference testing, assessors receive a pair of coded samples and are informed of the attribute to be evaluated.

The assessors report which they believe to be higher or lower in intensity of the specified attribute, even if the selection is based

only on a guess.

4.4 Results are tallied and significance determined by direct calculation or reference to a statistical table.
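For the "direct calculation" route, one common approach is an exact binomial p-value. With no perceptible difference, a forced choice between two samples is a coin flip. The number of common responses then follows a binomial distribution with p = 1/2. The function below is a minimal sketch under that assumption. Its name and the doubling rule used for the two-sided case are choices made for illustration.

```python
from math import comb

def p_value(c, n, two_sided=False):
    """Exact binomial p-value for c common responses from n assessors.

    Under the null hypothesis of no perceptible difference, each assessor
    picks either sample with probability 1/2 (the forced-choice guess).
    For a two-sided test, c is the larger of the two counts (3.2.5) and the
    one-tail probability is doubled, capped at 1.
    """
    tail = sum(comb(n, k) for k in range(c, n + 1)) / 2**n  # P(X >= c)
    return min(1.0, 2 * tail) if two_sided else tail

print(round(p_value(20, 30), 4))                  # one-sided
print(round(p_value(20, 30, two_sided=True), 4))  # two-sided
```

Because the binomial distribution with p = 1/2 is symmetric, doubling the upper tail gives the two-sided probability.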

5. Significance and Use

5.1 The directional difference test determines with a given confidence level whether or not there is a perceivable difference in

the intensity of a specified attribute between two samples, for example, when a change is made in an ingredient, a process,

packaging, handling, or storage.

5.2 The directional difference test is inappropriate when evaluating products with sensory characteristics that are not easily

specified, not commonly understood, or not known in advance. Other difference test methods such as the same-different test should

be used.

5.3 A result of no significant difference in a specific attribute does not ensure that there are no differences between the two

samples in other attributes or characteristics, nor does it indicate that the attribute is the same for both samples. It may merely

indicate that the degree of difference is too low to be detected with the sensitivity (α, β, and Pmax) chosen for the test.

5.3.1 The method itself does not change whether the purpose of the test is to determine that two samples are perceivably different versus that the samples are not perceivably different. Only the selected values of Pmax, α, and β change. If the objective of the test is to determine if the two samples are perceivably different, then the value selected for α is typically smaller than the value selected for β. If the objective is to determine if no perceivable difference exists, then the value selected for β is typically smaller than the value selected for α and the value of Pmax needs to be stated explicitly.

6. Apparatus

6.1 Carry out the test under conditions that prevent contact between assessors until the evaluations have been completed, for

example, booths that comply with MNL 60 (4).

6.2 Sample preparation and serving sizes should comply with Guide E1871, or see Herz and Cupchik (5) or Todrank et al (6).


7. Assessors

7.1 All assessors must be familiar with the mechanics of the directional difference test (format, task, and procedure of

evaluation). For directional difference testing, assessors must be able to recognize and quantify the specified attribute.

7.2 The characteristics of the assessors used define the scope of the conclusions. Experience and familiarity with the product

or the attribute may increase the sensitivity of an assessor and may therefore increase the likelihood of finding a significant

difference. Monitoring the performance of assessors over time may be useful for selecting assessors with increased sensitivity.

Consumers can be used, as long as they are familiar with the format of the directional difference test. If a sufficient number of

employees are available for this test, they too can serve as assessors. If trained descriptive assessors are used, there should be

sufficient numbers of them to meet the agreed-upon risks appropriate to the project. Mixing the types of assessors is not

recommended, given the potential differences in sensitivity of each type of assessor.

7.3 The degree of training for directional difference testing should be addressed prior to test execution. Attribute-specific

training may include a preliminary presentation of differing levels of the attribute, either shown external to the product or shown

within the product, for example, as a solution or within a product formulation. If the test concerns the detection of a particular taint,

consider the inclusion of samples during training that demonstrate its presence and absence. Such demonstration will increase the

assessors’ acuity for the taint (see STP 758 (7) for details). Allow adequate time between the exposure to the training samples and

the actual test to avoid carryover or fatigue.

7.4 During the test sessions, avoid giving information about product identity, expected treatment effects, or individual

performance until all testing is complete.

8. Number of Assessors

8.1 Choose the number of assessors to yield the sensitivity called for by the test objectives. The sensitivity of the test is a

function of four factors: α-risk, β-risk, maximum allowable proportion of common responses (Pmax), and whether the test is one-sided or two-sided.

8.2 Prior to conducting the test, decide if the test is one-sided or two-sided and select values for α, β, and Pmax. The following can be considered as general guidelines:

8.2.1 One-sided versus two-sided: The test is one-sided if only one direction of difference is critical to the findings. For example,

the test is one-sided if the objective is to confirm that the sample with more sugar is sweeter than the sample with less sugar. The

test is two-sided if both possible directions of difference are important. For example, the test is two-sided if the objective of the

test is to determine which of two samples is sweeter.

8.2.2 When testing for a difference, for example, when the researcher wants to take only a small chance of concluding that a

difference exists when it does not, the most commonly used values for α-risk and β-risk are α = 0.05 and β = 0.20. These values

can be adjusted on a case-by-case basis to reflect the sensitivity desired versus the number of assessors available. When testing

for a difference with a limited number of assessors, hold the α-risk at a relatively small value and allow the β-risk to increase to

control the risk of falsely concluding that a difference is present.

8.2.3 When testing for similarity, for example, when the researcher wants to take only a small chance of missing a difference

that is there, the most commonly used values for α-risk and β-risk are α = 0.20 and β = 0.05. These values can be adjusted on a

case-by-case basis to reflect the sensitivity desired vs. the number of assessors available. When testing for similarity with a limited

number of assessors, hold the β-risk at a relatively small value and allow the α-risk to increase in order to control the risk of missing

a difference that is present.

8.2.4 For Pmax, the proportion of common responses falls into three ranges:

Pmax < 55 % represents “small” values;

55 % ≤ Pmax ≤ 65 % represents “medium-sized” values; and

Pmax > 65 % represents “large” values.
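The three ranges can be encoded directly. In this sketch, `pmax_size` is a hypothetical helper that takes Pmax as a proportion (0.60 rather than 60 %). It also checks that Pmax exceeds 0.5, the rate expected from guessing between two samples. That check is an added assumption, not a rule stated in 8.2.4.

```python
def pmax_size(pmax):
    """Label a Pmax value (given as a proportion) with the ranges of 8.2.4."""
    if not 0.5 < pmax <= 1.0:
        # With two samples, guessing alone yields 50 % common responses,
        # so a meaningful Pmax must lie above 0.5 (added assumption).
        raise ValueError("Pmax must be greater than 0.5 and at most 1.0")
    if pmax < 0.55:
        return "small"
    if pmax <= 0.65:
        return "medium-sized"
    return "large"

print(pmax_size(0.60))
```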

8.3 Having defined the required sensitivity for the test using 8.2, use Table 1 or Table 2 to determine the number of assessors necessary. Enter the table in the section corresponding to the selected value of Pmax and the column corresponding to the selected value of β. The minimum required number of assessors is found in the row corresponding to the selected value of α. Alternatively, Table 1 or Table 2 can be used to develop a set of values for Pmax, α, and β that provide acceptable sensitivity while maintaining the number of assessors within practical limits.
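The look-up described in 8.3 amounts to indexing a table by α (row) and β (column) within one Pmax section. The sketch below transcribes only the Pmax = 70 % section of Table 1. The names `assessors_needed` and `feasible_designs` are hypothetical. The second function illustrates the alternative use of the table: it lists the (α, β) combinations that fit a given panel size.

```python
# Pmax = 70 % section of Table 1 (one-sided alternative), transcribed row by
# row; keys are alpha values and tuple positions follow BETAS.
BETAS = (0.50, 0.40, 0.30, 0.20, 0.10, 0.05, 0.01, 0.001)
TABLE1_PMAX_70 = {
    0.50: (4, 4, 4, 8, 12, 18, 32, 60),
    0.40: (4, 4, 6, 8, 14, 26, 42, 70),
    0.30: (6, 8, 10, 14, 22, 28, 50, 78),
    0.20: (6, 10, 12, 20, 30, 40, 60, 94),
    0.10: (14, 20, 22, 28, 40, 54, 80, 114),
    0.05: (18, 24, 30, 38, 54, 68, 94, 132),
    0.01: (36, 42, 52, 64, 80, 96, 130, 174),
    0.001: (62, 72, 82, 96, 118, 136, 176, 228),
}

def assessors_needed(alpha, beta, section=TABLE1_PMAX_70):
    """Row alpha, column beta of one Pmax section (the look-up in 8.3)."""
    return section[alpha][BETAS.index(beta)]

def feasible_designs(max_assessors, section=TABLE1_PMAX_70):
    """(alpha, beta, n) combinations needing no more than max_assessors."""
    return [
        (alpha, beta, n)
        for alpha, row in section.items()
        for beta, n in zip(BETAS, row)
        if n <= max_assessors
    ]

print(assessors_needed(alpha=0.05, beta=0.20))
print([d for d in feasible_designs(40) if d[0] <= 0.05])
```

Suppose at most 40 assessors are available. This section of the table shows that α = 0.05 can be combined with β = 0.20 (38 assessors) but not with β = 0.10 (54 assessors).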

8.4 Often in practice, the number of assessors is determined by material conditions (e.g., duration of the experiment, number

of available assessors, quantity of sample). However, increasing the number of assessors increases the likelihood of detecting small

differences. Thus, one should expect to use larger numbers of assessors when trying to demonstrate that samples are similar

compared to when one is trying to show they are different.

9. Procedure

9.1 Prepare the serving order worksheet and ballot in advance of the test to ensure a balanced order of presentation of the two samples, A and B. Balance the serving sequences AB and BA across all assessors. Serving order worksheets should also include complete sample identification information. See Appendix X1.
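One way to balance the AB and BA sequences is to build a list containing each sequence equally often and then shuffle it. This is a minimal sketch. `serving_orders` is a hypothetical name, and the optional `seed` only makes the worksheet reproducible.

```python
import random

def serving_orders(n_assessors, seed=None):
    """Balanced serving sequences: equal numbers of "AB" and "BA", shuffled.

    An even panel size is required so that both sequences appear equally
    often (which is why the Table 1 and Table 2 entries are even numbers).
    """
    if n_assessors % 2:
        raise ValueError("an even number of assessors is needed to balance AB and BA")
    orders = ["AB", "BA"] * (n_assessors // 2)
    random.Random(seed).shuffle(orders)  # random assignment of sequence to assessor
    return orders

for assessor, order in enumerate(serving_orders(6, seed=2016), start=1):
    print(assessor, order)
```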


TABLE 1 Number of Assessors Needed for a Directional Difference Test One-Sided Alternative

NOTE 1—The values recorded in this table have been rounded to the nearest whole number evenly divisible by two to allow for equal presentation of

both pair combinations (AB and BA).

NOTE 2—Adapted from Meilgaard et al (8).

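| Pmax | α | β = 0.50 | β = 0.40 | β = 0.30 | β = 0.20 | β = 0.10 | β = 0.05 | β = 0.01 | β = 0.001 |
|---|---|---|---|---|---|---|---|---|---|
| 75 % | 0.50 | 2 | 4 | 4 | 4 | 8 | 12 | 20 | 34 |
| 75 % | 0.40 | 2 | 4 | 4 | 6 | 10 | 14 | 28 | 42 |
| 75 % | 0.30 | 2 | 6 | 8 | 10 | 14 | 20 | 30 | 48 |
| 75 % | 0.20 | 6 | 6 | 10 | 12 | 20 | 26 | 40 | 58 |
| 75 % | 0.10 | 10 | 10 | 14 | 20 | 26 | 34 | 48 | 70 |
| 75 % | 0.05 | 14 | 16 | 18 | 24 | 34 | 42 | 58 | 82 |
| 75 % | 0.01 | 22 | 28 | 34 | 40 | 50 | 60 | 80 | 108 |
| 75 % | 0.001 | 38 | 44 | 52 | 62 | 72 | 84 | 108 | 140 |
| 70 % | 0.50 | 4 | 4 | 4 | 8 | 12 | 18 | 32 | 60 |
| 70 % | 0.40 | 4 | 4 | 6 | 8 | 14 | 26 | 42 | 70 |
| 70 % | 0.30 | 6 | 8 | 10 | 14 | 22 | 28 | 50 | 78 |
| 70 % | 0.20 | 6 | 10 | 12 | 20 | 30 | 40 | 60 | 94 |
| 70 % | 0.10 | 14 | 20 | 22 | 28 | 40 | 54 | 80 | 114 |
| 70 % | 0.05 | 18 | 24 | 30 | 38 | 54 | 68 | 94 | 132 |
| 70 % | 0.01 | 36 | 42 | 52 | 64 | 80 | 96 | 130 | 174 |
| 70 % | 0.001 | 62 | 72 | 82 | 96 | 118 | 136 | 176 | 228 |
| 65 % | 0.50 | 4 | 4 | 4 | 8 | 18 | 32 | 62 | 102 |
| 65 % | 0.40 | 4 | 6 | 8 | 14 | 30 | 42 | 76 | 120 |
| 65 % | 0.30 | 8 | 10 | 14 | 24 | 40 | 54 | 88 | 144 |
| 65 % | 0.20 | 10 | 18 | 22 | 32 | 50 | 68 | 110 | 166 |
| 65 % | 0.10 | 22 | 28 | 38 | 54 | 72 | 96 | 146 | 208 |
| 65 % | 0.05 | 30 | 42 | 54 | 70 | 94 | 120 | 174 | 244 |
| 65 % | 0.01 | 64 | 78 | 90 | 112 | 144 | 174 | 236 | 320 |
| 65 % | 0.001 | 108 | 126 | 144 | 172 | 210 | 246 | 318 | 412 |
| 60 % | 0.50 | 4 | 4 | 8 | 18 | 42 | 68 | 134 | 238 |
| 60 % | 0.40 | 6 | 10 | 24 | 36 | 60 | 94 | 172 | 282 |
| 60 % | 0.30 | 12 | 22 | 30 | 50 | 84 | 120 | 206 | 328 |
| 60 % | 0.20 | 22 | 32 | 50 | 78 | 112 | 158 | 254 | 384 |
| 60 % | 0.10 | 46 | 66 | 86 | 116 | 168 | 214 | 322 | 472 |
| 60 % | 0.05 | 72 | 94 | 120 | 158 | 214 | 268 | 392 | 554 |
| 60 % | 0.01 | 142 | 168 | 208 | 252 | 326 | 392 | 536 | 726 |
| 60 % | 0.001 | 242 | 282 | 328 | 386 | 480 | 556 | 732 | 944 |
| 55 % | 0.50 | 4 | 8 | 28 | 74 | 164 | 272 | 542 | 952 |
| 55 % | 0.40 | 10 | 36 | 62 | 124 | 238 | 362 | 672 | 1124 |
| 55 % | 0.30 | 30 | 72 | 118 | 200 | 334 | 480 | 810 | 1302 |
| 55 % | 0.20 | 82 | 130 | 194 | 294 | 452 | 618 | 1006 | 1556 |
| 55 % | 0.10 | 170 | 240 | 338 | 462 | 658 | 862 | 1310 | 1906 |
| 55 % | 0.05 | 282 | 370 | 476 | 620 | 866 | 1092 | 1584 | 2238 |
| 55 % | 0.01 | 550 | 666 | 820 | 1008 | 1302 | 1582 | 2170 | 2928 |
| 55 % | 0.001 | 962 | 1126 | 1310 | 1552 | 1908 | 2248 | 2938 | 3812 |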

9.2 It is critical to the validity of the test that assessors cannot identify the samples from the way in which they are presented.

For example, in a test evaluating flavor differences, one should avoid any subtle differences in temperature or appearance caused

by factors such as the time sequence of preparation. It may be possible to mask color differences using light filters, subdued

illumination or colored vessels. Code the vessels containing the samples in a uniform manner using 3-digit numbers chosen at

random for each test. Prepare samples out of sight and in an identical manner: same apparatus, same vessels, same quantities of

sample (see Guide E1871-91).
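Random 3-digit codes can be drawn without replacement so that no code is repeated within a test. The sketch assumes one code per vessel, or two per assessor. Whether codes may be shared across assessors is a laboratory choice that 9.2 does not prescribe. `blinding_codes` is a hypothetical name.

```python
import random

def blinding_codes(n_assessors, seed=None):
    """Random 3-digit codes for every vessel served in one test.

    Each assessor receives two vessels (samples A and B); all 2 * n codes are
    drawn without replacement from 100..999, so no code is reused in the test.
    """
    rng = random.Random(seed)
    codes = rng.sample(range(100, 1000), 2 * n_assessors)  # 900 possible codes
    return [{"A": codes[2 * i], "B": codes[2 * i + 1]} for i in range(n_assessors)]

for assessor, pair in enumerate(blinding_codes(4, seed=7), start=1):
    print(assessor, pair)
```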

9.3 Present each pair of samples simultaneously whenever possible, following the same spatial arrangement for each assessor

(on a line to be sampled always from left to right, or from front to back, etc.). Within the pair, assessors are typically allowed to

make repeated evaluations of each sample as desired. If the conditions of the test require the prevention of repeat evaluations, for

example, if samples are bulky, leave an aftertaste, or show slight differences in appearance that cannot be masked, present the

samples monadically (or in a sequential monadic presentation) and do not allow repeated evaluations.

9.4 Ask only one question about the samples. The selection the assessor has made on the initial question may bias the reply to

subsequent questions about the samples. Responses to additional questions may be obtained through separate tests for preference,

acceptance, degree of difference, etc. See Chambers and Baker Wolf (9). A section soliciting comments may be included following

the initial forced-choice question.

9.5 The directional difference test is a forced-choice procedure; assessors are not allowed the option of reporting “no

difference.” An assessor who detects no difference between the samples should be instructed to make a guess and select one of

the samples, and can indicate in the comments section that the selection was only a guess.


TABLE 2 Number of Assessors Needed for a Directional Difference Test Two-Sided Alternative

NOTE 1—The values recorded in this table have been rounded to the nearest whole number evenly divisible by two to allow for equal presentation of

both pair combinations (AB and BA).

NOTE 2—Adapted from Meilgaard et al (8).

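| Pmax | α | β = 0.50 | β = 0.40 | β = 0.30 | β = 0.20 | β = 0.10 | β = 0.05 | β = 0.01 | β = 0.001 |
|---|---|---|---|---|---|---|---|---|---|
| 75 % | 0.50 | 2 | 6 | 8 | 12 | 16 | 24 | 34 | 52 |
| 75 % | 0.40 | 6 | 6 | 10 | 12 | 20 | 26 | 40 | 58 |
| 75 % | 0.30 | 6 | 8 | 12 | 16 | 22 | 30 | 42 | 64 |
| 75 % | 0.20 | 10 | 10 | 14 | 20 | 26 | 34 | 48 | 70 |
| 75 % | 0.10 | 14 | 16 | 18 | 24 | 34 | 42 | 58 | 82 |
| 75 % | 0.05 | 18 | 20 | 26 | 30 | 42 | 50 | 68 | 92 |
| 75 % | 0.01 | 26 | 34 | 40 | 44 | 58 | 66 | 88 | 118 |
| 75 % | 0.001 | 42 | 50 | 58 | 66 | 78 | 90 | 118 | 150 |
| 70 % | 0.50 | 6 | 8 | 12 | 16 | 26 | 34 | 54 | 86 |
| 70 % | 0.40 | 6 | 10 | 12 | 20 | 30 | 40 | 60 | 94 |
| 70 % | 0.30 | 8 | 14 | 18 | 22 | 34 | 44 | 68 | 102 |
| 70 % | 0.20 | 14 | 20 | 22 | 28 | 40 | 54 | 80 | 114 |
| 70 % | 0.10 | 18 | 24 | 30 | 38 | 54 | 68 | 94 | 132 |
| 70 % | 0.05 | 26 | 36 | 40 | 50 | 66 | 80 | 110 | 150 |
| 70 % | 0.01 | 44 | 50 | 60 | 74 | 92 | 108 | 144 | 192 |
| 70 % | 0.001 | 68 | 78 | 90 | 102 | 126 | 148 | 188 | 240 |
| 65 % | 0.50 | 8 | 14 | 18 | 30 | 44 | 64 | 98 | 156 |
| 65 % | 0.40 | 10 | 18 | 22 | 32 | 50 | 68 | 110 | 166 |
| 65 % | 0.30 | 14 | 20 | 30 | 42 | 60 | 82 | 126 | 188 |
| 65 % | 0.20 | 22 | 28 | 38 | 54 | 72 | 96 | 146 | 208 |
| 65 % | 0.10 | 30 | 42 | 54 | 70 | 94 | 120 | 174 | 244 |
| 65 % | 0.05 | 44 | 56 | 68 | 90 | 114 | 146 | 200 | 276 |
| 65 % | 0.01 | 74 | 92 | 108 | 132 | 164 | 196 | 262 | 346 |
| 65 % | 0.001 | 122 | 140 | 162 | 188 | 230 | 268 | 342 | 440 |
| 60 % | 0.50 | 16 | 28 | 36 | 64 | 98 | 136 | 230 | 352 |
| 60 % | 0.40 | 22 | 32 | 50 | 78 | 112 | 158 | 254 | 384 |
| 60 % | 0.30 | 32 | 44 | 66 | 90 | 134 | 180 | 284 | 426 |
| 60 % | 0.20 | 46 | 66 | 86 | 116 | 168 | 214 | 322 | 472 |
| 60 % | 0.10 | 72 | 94 | 120 | 158 | 214 | 268 | 392 | 554 |
| 60 % | 0.05 | 102 | 126 | 158 | 200 | 264 | 328 | 456 | 636 |
| 60 % | 0.01 | 172 | 204 | 242 | 292 | 374 | 446 | 596 | 796 |
| 60 % | 0.001 | 276 | 318 | 364 | 426 | 520 | 604 | 782 | 1010 |
| 55 % | 0.50 | 50 | 96 | 156 | 240 | 394 | 544 | 910 | 1424 |
| 55 % | 0.40 | 82 | 130 | 194 | 294 | 452 | 618 | 1006 | 1556 |
| 55 % | 0.30 | 110 | 174 | 254 | 360 | 550 | 722 | 1130 | 1702 |
| 55 % | 0.20 | 170 | 240 | 338 | 462 | 658 | 862 | 1310 | 1906 |
| 55 % | 0.10 | 282 | 370 | 476 | 620 | 866 | 1092 | 1584 | 2238 |
| 55 % | 0.05 | 390 | 498 | 620 | 786 | 1056 | 1302 | 1834 | 2544 |
| 55 % | 0.01 | 670 | 802 | 964 | 1168 | 1494 | 1782 | 2408 | 3204 |
| 55 % | 0.001 | 1090 | 1260 | 1462 | 1708 | 2094 | 2440 | 3152 | 4064 |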

10. Analysis and Interpretation of Results

10.1 The procedure used to analyze the results of a directional difference test depends on the number of assessors.

10.1.1 If the number of assessors is equal to or greater than the value given in Table 1 (for a one-sided alternative) or Table 2 (for a two-sided alternative) for the chosen values of α, β, and Pmax, then use Table 3 to analyze the data obtained from a one-sided

test and Table 4 to analyze the data from a two-sided test. If the number of common responses is equal to or greater than the number

given in

...
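Tables 3 and 4 are not reproduced in this excerpt. Minimum numbers of common responses of this kind are conventionally derived from the binomial distribution with p = 1/2. The sketch below computes them under that assumption, splitting α equally between the two tails for a two-sided test. Its output should therefore be checked against the published tables before it is relied on. The function name is hypothetical.

```python
from math import comb

def min_common_responses(n, alpha, two_sided=False):
    """Smallest number of common responses that is significant at level alpha.

    Assumes exact binomial critical values with p = 1/2; for a two-sided test
    alpha is split equally between the two tails. Returns None when no count
    can reach significance with n assessors.
    """
    tail_alpha = alpha / 2 if two_sided else alpha
    for x in range(n + 1):
        tail = sum(comb(n, k) for k in range(x, n + 1)) / 2**n  # P(X >= x)
        if tail <= tail_alpha:
            return x
    return None

n, c = 30, 21  # hypothetical panel size and observed common responses
needed = min_common_responses(n, 0.05, two_sided=True)
print(min_common_responses(n, 0.05), needed)
print("significant" if needed is not None and c >= needed else "not significant")
```

With 30 assessors and α = 0.05, the sketch gives 20 common responses for a one-sided test and 21 for a two-sided test.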
