prEN 18281

Artificial Intelligence - Evaluation methods for accurate computer vision systems

This document specifies the evaluation of computer vision systems, in the sense of measuring the quality of a system’s results to assess its functional suitability. It provides a definition of evaluation methods for those systems, together with guidance on how to select, implement and interpret those evaluation methods. This document covers quantitative metrics as well as other evaluation methods. It includes requirements on the implementation of the described metrics, and further requirements on the technical resources involved in the evaluation process.

KI-Aufgaben und Bewertungsmethoden für Computer-Vision-Systeme

Umetna inteligenca - Metode vrednotenja za natančne sisteme računalniškega vida

Ta dokument določa vrednotenje sistemov računalniškega vida v smislu merjenja kakovosti rezultatov sistema za oceno njegove funkcionalne primernosti. Ponuja definicijo metod vrednotenja za te sisteme, skupaj s smernicami za izbiro, izvajanje in interpretacijo teh metod vrednotenja. Ta dokument zajema kvantitativne metrike kot tudi druge metode vrednotenja. Vključuje zahteve glede izvajanja opisanih metrik in nadaljnje zahteve glede tehničnih virov, vključenih v proces vrednotenja.

General Information

- Status: Not Published
- Publication Date: 03-Mar-2027
- Technical Committee: CEN/CLC/JTC 21 - Artificial Intelligence
- Drafting Committee: CEN/CLC/JTC 21/WG 3 - Engineering aspects
- Current Stage: 4020 - Submission to enquiry - Enquiry
- Start Date: 19-Mar-2026
- Due Date: 15-Oct-2025
- Completion Date: 19-Mar-2026
- Directive: 2024/1689 - EU AI Act (Not Harmonized)

Relations

- Effective Date: 28-Jan-2026

Overview

prEN 18281: Artificial Intelligence - Evaluation Methods for Accurate Computer Vision Systems is a draft European Standard prepared by CEN (European Committee for Standardization). It sets out methods and requirements for evaluating the quality and functional suitability of computer vision systems in artificial intelligence (AI). The standard addresses a broad spectrum of evaluation needs, including both quantitative and qualitative approaches, to ensure accurate assessment of computer vision systems used in diverse real-world applications.

The document guides organizations in defining, selecting, implementing, and interpreting evaluation methods for computer vision models, emphasizing reproducibility, comparability, and appropriate use of metrics. It also outlines technical resource requirements for effective evaluation.

Key Topics

Scope and Purpose

- Specifies how to measure computer vision system results for functional suitability.

- Covers quantitative metrics (e.g., accuracy, precision, recall, F1 score) and other evaluation methods.

- Offers guidance on selecting appropriate metrics based on specific tasks such as image analysis, object detection, segmentation, and generative tasks.

Categories of Evaluation Methods

- Ground-Truth–Based Evaluation: Compares outputs directly with labelled datasets (typical for detection and segmentation).

- Generative Evaluation: For AI tasks with no unique correct answer, such as image synthesis or super-resolution.

- Semantic Evaluation: Evaluates higher-level reasoning tasks (e.g., image captioning, visual question answering).

Basic Evaluation Concepts

- Intrinsic vs. Extrinsic Evaluation: Measures model performance using internal benchmarks or broader real-world impacts.

- Component vs. System Evaluation: Distinguishes between the evaluation of specific modules and end-to-end system performance.

- Quantitative vs. Qualitative Methods: Combines numerical indicators with qualitative, sometimes human-assessed, performance aspects.

Requirements on Metrics Implementation

- Stress on reproducibility and comparability.

- Handling of class imbalance and conditions like division by zero.

- Full transparency in how metrics are calculated and reported.

Applications

The evaluation methods defined in prEN 18281 are applicable across a wide range of industries and use cases where computer vision forms a critical AI component. Practical applications include:

- Industrial Automation: Quality inspection, defect detection, and robotic guidance rely on accurate object detection and segmentation metrics.

- Healthcare: Medical imaging and diagnostic AI benefit from robust segmentation and similarity measures, ensuring clinical reliability.

- Autonomous Systems: Object detection, localization, and tracking are central for autonomous vehicles and drones, requiring consistent and reliable evaluation to ensure safety.

- Security and Surveillance: Face and activity recognition systems need task-specific evaluation metrics for accuracy and fairness.

- Media and Entertainment: Image and video enhancement, generation, and editing tools use generative evaluation metrics to monitor output fidelity and realism.

- Retail and Logistics: Automated checkout, inventory management, and supply chain optimization depend on the effective evaluation of vision-based recognition systems.

By applying prEN 18281, organizations ensure:

- Consistency and comparability in system performance reporting.

- Selection of metrics that truly reflect the intended application and operational conditions.

- Adherence to requirements that support interoperability and regulatory compliance.

Related Standards

This standard complements several established documents and is designed to be used alongside:

- ISO/IEC TS 4213: Assessment of machine learning classification performance, underpinning key metrics for functional AI evaluation.

- prEN 18288: Provides task definitions linked to the evaluation methods described here.

- EN ISO/IEC 23282: Focuses on evaluation in natural language processing (NLP), relevant for multi-modal AI systems.

- prEN 18284: Addresses dataset requirements and guidelines for computer vision evaluation.

- ISO/IEC 2382: Standardizes terminology on computer and artificial vision.

Implementing prEN 18281 provides a strong foundation for reliable, transparent, and standardized computer vision evaluation across the AI landscape, supporting innovation and trust in digital technologies.


Frequently Asked Questions

prEN 18281 is a draft published by the European Committee for Standardization (CEN). Its full title is "Artificial Intelligence - Evaluation methods for accurate computer vision systems". This standard covers: This document specifies the evaluation of computer vision systems, in the sense of measuring the quality of a system’s results to assess its functional suitability. It provides a definition of evaluation methods for those systems, together with guidance on how to select, implement and interpret those evaluation methods. This document covers quantitative metrics as well as other evaluation methods. It includes requirements on the implementation of the described metrics, and further requirements on the technical resources involved in the evaluation process.


prEN 18281 is classified under the following ICS (International Classification for Standards) categories: 35.020 - Information technology (IT) in general; 35.240.01 - Application of information technology in general. The ICS classification helps identify the subject area and facilitates finding related standards.

prEN 18281 has the following relationships with other standards: it has an inter-standard link to EN ISO 25649-1:2024. Understanding these relationships helps ensure you are using the most current and applicable version of the standard.

prEN 18281 is associated with the following European legislation: EU Directives/Regulations: 2024/1689; Standardization Mandates: M/593, M/613. When a standard is cited in the Official Journal of the European Union, products manufactured in conformity with it benefit from a presumption of conformity with the essential requirements of the corresponding EU directive or regulation.


Standards Content (Sample)

SLOVENSKI STANDARD

01-May-2026

Umetna inteligenca - Metode vrednotenja za natančne sisteme računalniškega vida

Artificial Intelligence - Evaluation methods for accurate computer vision systems

KI-Aufgaben und Bewertungsmethoden für Computer-Vision-Systeme

This Slovenian standard is identical to: prEN 18281

ICS:

35.020 Information technology (IT) in general (Informacijska tehnika in tehnologija na splošno)

35.240.01 Application of information technology in general (Uporabniške rešitve informacijske tehnike in tehnologije na splošno)

2003-01. Slovenski inštitut za standardizacijo (Slovenian Institute for Standardization). Reproduction of this standard in whole or in part is not permitted.

EUROPEAN STANDARD DRAFT

NORME EUROPÉENNE

EUROPÄISCHE NORM

March 2026

ICS 35.240.01

English version

Artificial Intelligence - Evaluation methods for accurate computer vision systems

KI-Aufgaben und Bewertungsmethoden für Computer-Vision-Systeme

This draft European Standard is submitted to CEN members for enquiry. It has been drawn up by the Technical Committee CEN/CLC/JTC 21.

If this draft becomes a European Standard, CEN and CENELEC members are bound to comply with the CEN/CENELEC Internal Regulations which stipulate the conditions for giving this European Standard the status of a national standard without any alteration.

This draft European Standard was established by CEN and CENELEC in three official versions (English, French, German). A version in any other language made by translation under the responsibility of a CEN and CENELEC member into its own language and notified to the CEN-CENELEC Management Centre has the same status as the official versions.

CEN and CENELEC members are the national standards bodies and national electrotechnical committees of Austria, Belgium, Bulgaria, Croatia, Cyprus, Czech Republic, Denmark, Estonia, Finland, France, Germany, Greece, Hungary, Iceland, Ireland, Italy, Latvia, Lithuania, Luxembourg, Malta, Netherlands, Norway, Poland, Portugal, Republic of North Macedonia, Romania, Serbia, Slovakia, Slovenia, Spain, Sweden, Switzerland, Türkiye and United Kingdom.

Recipients of this draft are invited to submit, with their comments, notification of any relevant patent rights of which they are aware and to provide supporting documentation.

Warning: This document is not a European Standard. It is distributed for review and comments. It is subject to change without notice and shall not be referred to as a European Standard.

CEN-CENELEC Management Centre:

Rue de la Science 23, B-1040 Brussels

© 2026 CEN/CENELEC All rights of exploitation in any form and by any means reserved worldwide for CEN national Members and for CENELEC Members.

Ref. No. prEN 18281:2026 E

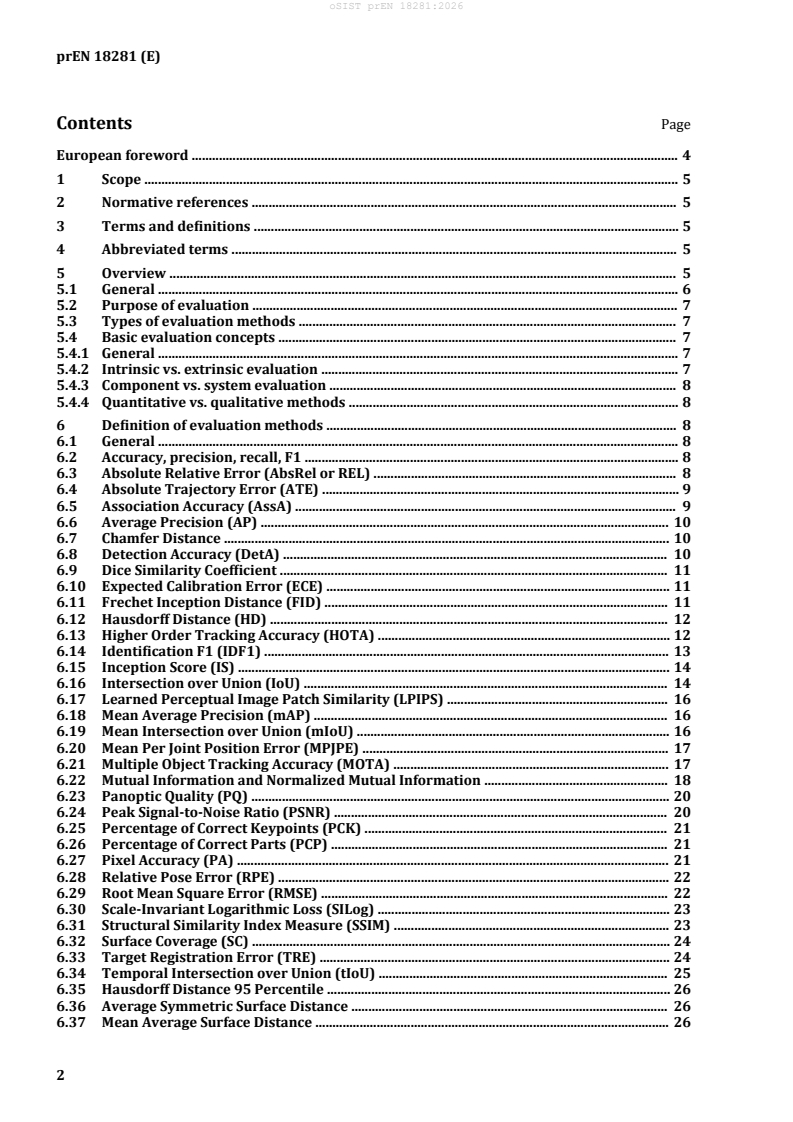

Contents

European foreword
1 Scope
2 Normative references
3 Terms and definitions
4 Abbreviated terms
5 Overview
5.1 General
5.2 Purpose of evaluation
5.3 Types of evaluation methods
5.4 Basic evaluation concepts
5.4.1 General
5.4.2 Intrinsic vs. extrinsic evaluation
5.4.3 Component vs. system evaluation
5.4.4 Quantitative vs. qualitative methods
6 Definition of evaluation methods
6.1 General
6.2 Accuracy, precision, recall, F1
6.3 Absolute Relative Error (AbsRel or REL)
6.4 Absolute Trajectory Error (ATE)
6.5 Association Accuracy (AssA)
6.6 Average Precision (AP)
6.7 Chamfer Distance
6.8 Detection Accuracy (DetA)
6.9 Dice Similarity Coefficient
6.10 Expected Calibration Error (ECE)
6.11 Frechet Inception Distance (FID)
6.12 Hausdorff Distance (HD)
6.13 Higher Order Tracking Accuracy (HOTA)
6.14 Identification F1 (IDF1)
6.15 Inception Score (IS)
6.16 Intersection over Union (IoU)
6.17 Learned Perceptual Image Patch Similarity (LPIPS)
6.18 Mean Average Precision (mAP)
6.19 Mean Intersection over Union (mIoU)
6.20 Mean Per Joint Position Error (MPJPE)
6.21 Multiple Object Tracking Accuracy (MOTA)
6.22 Mutual Information and Normalized Mutual Information
6.23 Panoptic Quality (PQ)
6.24 Peak Signal-to-Noise Ratio (PSNR)
6.25 Percentage of Correct Keypoints (PCK)
6.26 Percentage of Correct Parts (PCP)
6.27 Pixel Accuracy (PA)
6.28 Relative Pose Error (RPE)
6.29 Root Mean Square Error (RMSE)
6.30 Scale-Invariant Logarithmic Loss (SILog)
6.31 Structural Similarity Index Measure (SSIM)
6.32 Surface Coverage (SC)
6.33 Target Registration Error (TRE)
6.34 Temporal Intersection over Union (tIoU)
6.35 Hausdorff Distance 95 Percentile
6.36 Average Symmetric Surface Distance
6.37 Mean Average Surface Distance
6.38 Mean Squared Error (MSE)
6.39 Mean Absolute Error (MAE)
6.40 Pearson Correlation Coefficient (PCC)
7 Evaluation methods per task
7.1 General
7.2 Image analysis
7.2.1 General
7.2.2 Object localization
7.2.3 Object classification
7.2.4 Object detection
7.2.5 Semantic segmentation
7.2.6 Instance segmentation
7.2.7 Panoptic Segmentation
7.2.8 Image registration
7.2.9 Image similarity
7.3 Spatial analysis
7.3.1 General
7.3.2 Depth estimation
7.3.3 Object / 3D reconstruction
7.3.4 Object pose estimation
7.4 Temporal analysis
7.4.1 General
7.4.2 Multiple object tracking
7.4.3 Action recognition
7.4.4 Event detection
7.5 Tasks producing image as an output
7.5.1 General
7.5.2 Image generation
7.6 Tasks producing video as an output
8 Requirements on resources
Annex A (informative) Regularity Measures for Image Transformations
Bibliography

European foreword

This document (prEN 18281:2026) has been prepared by Technical Committee CEN/CENELEC JTC 21 "Artificial Intelligence", the secretariat of which is held by DS.

This document is currently submitted to the CEN Enquiry.

This document has been prepared under a standardization request addressed to CEN by the European Commission. The Standing Committee of the EFTA States subsequently approves these requests for its Member States.

1 Scope

This document specifies the evaluation of computer vision systems, in the sense of measuring the quality

of a system’s results to assess its functional suitability. It provides a definition of evaluation methods

for those systems, together with guidance on how to select, implement and interpret those evaluation

methods. This document covers quantitative metrics as well as other evaluation methods. It includes

requirements on the implementation of the described metrics, and further requirements on the

technical resources involved in the evaluation process.

The accuracy and functional correctness metrics defined in this document provide point-in-time

measurements under defined test conditions. These metrics can also be used as inputs to broader

assessments of system robustness, as defined in prEN 18229-2. However, these metrics do not, on their

own, guarantee stable performance across all deployment contexts or operating conditions. The

selection, weighting, and interpretation of metrics are therefore also informed by the intended

deployment context and the potential consequences of error.

2 Normative references

The following documents are referred to in the text in such a way that some or all of their content

constitutes requirements of this document. For dated references, only the edition cited applies. For

undated references, the latest edition of the referenced document (including any amendments) applies.

ISO/IEC TS 4213:2022, Information technology — Artificial intelligence — Assessment of machine

learning classification performance

3 Terms and definitions

For the purposes of this document, the terms and definitions given in the following apply.

ISO and IEC maintain terminological databases for use in standardization at the following addresses:

— IEC Electropedia: available at http://www.electropedia.org/

— ISO Online browsing platform: available at http://www.iso.org/obp

3.1

computer vision

artificial vision

capability of a functional unit to acquire, process, and interpret visual data

Note 1 to entry: Computer vision involves the use of visual sensors to create an electronic or digital image of a

visual scene.

Note 2 to entry: Not to be confused with machine vision.

Note 3 to entry: Computer vision; artificial vision: terms and definition standardized by ISO/IEC [ISO/IEC

2382-28:1995].

[SOURCE: ISO/IEC 2382:2015 [1]]

4 Abbreviated terms

AI Artificial Intelligence

GT Ground Truth

5 Overview

5.1 General

Compared to evaluation practices for other AI systems, computer vision evaluation has its own

specificities. While the main principles and caveats remain, the concrete practices, and in particular the

evaluation methods themselves, can be substantially affected in computer vision, both due to the

inherent constraints and variability associated with processing visual content (images, videos…) and

to existing practices by computer vision practitioners.

This document approaches the specificities of computer vision evaluation from the angle of tasks. The task is a suitable way to formalize the processing done by the AI system, at a level of abstraction that captures the methodological specificities of certain types of processing without delving into the specific considerations of the use case and the exact data that is being processed.

EXAMPLE AI systems performing a classification task can be evaluated through metrics like precision, recall

or F1, as specified in ISO/IEC 4213. This is, however, not applicable to image inpainting or image super-resolution

and different evaluation methods are used for such systems. Object detection cases fall somewhere in between,

as metrics like precision, recall, and F1 score are still relevant but are applied differently compared to classification.

It is important to clarify this by outlining the process for matching predicted bounding boxes with ground truth

boxes before calculating the F1 score.

This document is therefore to be read and used in conjunction with prEN 18288. The description of the

various tasks addressed in this document can be found in prEN 18288, and this document focuses on

which evaluation methods can be applied to each task and how.

This document is complementary to ISO/IEC 4213, which addresses evaluation methods for classification, regression, clustering and recommendation, and to EN ISO/IEC 23282, which covers the NLP evaluation methods in a similar way. Evaluation methods from ISO/IEC 4213 are built upon by some evaluation methods in this document, and evaluation methods from EN ISO/IEC 23282 are typically combined with the present ones, either in the context of multi-modal processing (for which evaluating the processing on both aspects is warranted) or due to the joint presence of computer vision components and NLP components within the same AI system.

Clause 6 describes a set of evaluation methods used in computer vision, including their mathematical

definition and an indication of any aspect of the metric computation for which reporting is needed to

ensure comparability and reproducibility across different implementations of the same metric. Only

automated evaluation methods are handled in this document.

Clause 7 then relates each computer vision task with these evaluation methods, providing task-specific

considerations for the methodology to apply these to the particular task, complemented with further

specifications (both in terms of application and practical implementation) meant to ensure the

reproducibility of the evaluation in the context of that task, as well as additional informative material

to calibrate the typical ranges of values obtained by this evaluation method and also indicating the

aspects of the task evaluated by that method in order to guide the interpretation of results.

Depending on the task and the evaluation method, the provided specifications differ:

— For tasks and evaluation methods that are a direct application of practices not specific to computer

vision (e.g. usage of F1 for object classification), this document serves as an entry point to identify

the relevant applicable standards for evaluating these tasks.

— For tasks and evaluation methods that are a computer vision-specific application of practices that

exist in another form out of computer vision (e.g. usage of F1 for object detection), this document

builds on existing standards to complement their specifications with the additional ones that apply

in the case of computer vision (e.g. procedure for matching bounding boxes).

— For tasks that have their own computer vision-specific evaluation methods, this document provides

a full specification of the evaluation methods as well as their usage for these tasks.

Finally, Clause 8 complements the specifications on the evaluation methods themselves and their

application to tasks, with further specifications on the technical resources needed for applying

evaluation methods to computer vision systems. This includes, for instance, the consideration of the

test data size or diversity.

5.2 Purpose of evaluation

The primary objective of computer vision model evaluation is to quantify model performance reproducibly, and to ensure that the systems operate as intended in real-world scenarios.

There is no universal metric for evaluating AI computer vision algorithms; the right metric depends on the task’s purpose and what constitutes “success”. For example,

— For detection, success means finding what and where things are.

— For generation, success means producing realistic and diverse visual content.

Using a single universal metric would therefore give misleading or even meaningless results. A clear understanding of computer vision accuracy metrics and their pitfalls is key to selecting the appropriate metrics to measure the performance of a model.

The reliability of evaluation results depends on the characteristics of the evaluation dataset. Evaluation

datasets should be statistically representative of real-world operating conditions, exhibit appropriate

class and condition balance, and employ consistent and validated labelling and annotation techniques.

Further information on datasets can be found in prEN 18284.

5.3 Types of evaluation methods

The evaluation of computer vision models relies on a diverse set of methods and metrics, the choice of

which is intrinsically linked to the nature of the task to be performed (classification, detection,

segmentation, etc.) and to the specific characteristics of the problem (class imbalance, boundary

importance, etc.).

Three main categories of evaluation methods can be distinguished:

a) Ground-Truth–Based Evaluation. This category applies to tasks where an objective, well-defined

reference exists and where the system output can be directly compared to a labelled dataset. Typical

examples include object detection, image segmentation or pose estimation. Ground-Truth–Based

evaluations require precise alignment of spatial coordinates and label consistency.

b) Generative Evaluation. This category applies to tasks that do not have a single correct answer,

such as image generation, style transfer, super-resolution, or inpainting. Since the output space is

inherently multimodal, no unique ground truth can be defined. Generative Evaluation-Based

metrics estimate the alignment between the distribution of generated images and that of real-world

samples rather than pixel-wise correctness.

c) Semantic Evaluation. This category applies to tasks that involve high-level reasoning or

multimodal understanding, such as image captioning, visual question answering (VQA), or scene

graph generation. Evaluation depends on the semantic equivalence between the predicted

description and the reference. Multiple acceptable outputs can exist, and evaluation needs to

account for linguistic variability, contextual relevance, and factual consistency.

5.4 Basic evaluation concepts

5.4.1 General

The evaluation of computer vision tasks is a rich field that relies on several fundamental concepts

enabling the objective assessment of a model’s quality and performance for a given application.

5.4.2 Intrinsic vs. extrinsic evaluation

Intrinsic evaluation measures a computer vision model’s performance using predefined metrics (e.g.

accuracy) on labelled data, focusing on how well it performs the task itself.

Extrinsic evaluation assesses the model’s impact on an application (e.g. improved safety in autonomous

driving), focusing on real-world usefulness rather than internal performance.

5.4.3 Component vs. system evaluation

Component evaluation measures the performance of an individual computer vision module (e.g. object

detection accuracy or segmentation quality).

System evaluation assesses the end-to-end performance of the complete application that integrates

multiple components, focusing on overall functionality, reliability, and real-world outcomes.

5.4.4 Quantitative vs. qualitative methods

Quantitative methods use objective, numerical metrics (e.g. precision, recall) to measure a computer

vision model’s performance.

Qualitative methods rely on human judgment or visual inspection (e.g. perceptual realism,

interpretability) to assess how convincing or meaningful the output of the computer vision algorithm is.

6 Definition of evaluation methods

6.1 General

Evaluation methods in computer vision encompass both quantitative and qualitative approaches,

applied at the component or system level, and assessed through intrinsic or extrinsic evaluations.

They ensure that models are measured not only by numerical accuracy on benchmark data, but also by

their overall performance.

Certain metrics are sensitive to class or instance imbalance in evaluation datasets. Where imbalance is

present, users shall implement macro-averaging, Balanced Accuracy, weighted variants or other

established mitigation approaches.
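As an illustration of one such mitigation, the sketch below computes Balanced Accuracy as the macro-average of per-class recall. It is a minimal example, not the normative definition: the function name `balanced_accuracy` and the list-of-labels input format are assumptions made for this illustration.

```python
def balanced_accuracy(y_true, y_pred):
    """Macro-average of per-class recall over the classes present in y_true.

    Hypothetical helper for illustration; labels are plain Python values.
    """
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("empty evaluation set")

    recalls = []
    for cls in sorted(set(y_true)):
        # Support is at least 1 because cls was taken from y_true.
        support = sum(1 for t in y_true if t == cls)
        correct = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
        recalls.append(correct / support)
    return sum(recalls) / len(recalls)


# Imbalanced example: 9 samples of class 0, 1 sample of class 1,
# and a degenerate model that always predicts class 0.
y_true = [0] * 9 + [1]
y_pred = [0] * 10
plain_accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
balanced = balanced_accuracy(y_true, y_pred)
```

Here plain accuracy is 0.9 while Balanced Accuracy is 0.5, which exposes that the minority class is never recognized.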

Implementations of metrics shall guard against numerical conditions that produce undefined or misleading results. These conditions include, but are not limited to, division by zero, logarithm of zero or negative values and computation over an empty valid sample set.
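A minimal sketch of such guards is given below. The policy of reporting an undefined value as NaN is an assumption made for this example; this clause does not prescribe a particular fallback, and the helper names `safe_divide` and `precision_recall_f1` are hypothetical.

```python
import math


def safe_divide(numerator, denominator, default=float("nan")):
    """Return numerator / denominator, or `default` when the denominator is zero."""
    if denominator == 0:
        return default
    return numerator / denominator


def precision_recall_f1(tp, fp, fn):
    """Precision, recall and F1 from counts; undefined values are returned as NaN.

    Returning NaN (rather than 0 or 1) is an assumed policy: it makes the
    undefined case visible so that it can be reported explicitly.
    """
    precision = safe_divide(tp, tp + fp)
    recall = safe_divide(tp, tp + fn)
    f1 = safe_divide(2 * tp, 2 * tp + fp + fn)
    return precision, recall, f1


# No predictions and no ground-truth positives: every ratio is undefined.
undefined = precision_recall_f1(tp=0, fp=0, fn=0)
# Regular case.
regular = precision_recall_f1(tp=8, fp=2, fn=2)
```

Whichever fallback is chosen, stating it alongside the reported value keeps results comparable across implementations.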

The computation of every quantitative metric shall be fully reproducible.

NOTE The evaluation methods in clause 6 are organized in alphabetical order to facilitate the reading. It is

planned to reorganize the evaluation methods in a more meaningful manner in a further version of the document.

6.2 Accuracy, precision, recall, F1

For accuracy, ISO/IEC 4213:—, 6.2.3 applies.

For precision, ISO/IEC 4213:—, 6.2.4 applies.

For recall, ISO/IEC 4213:—, 6.2.4 applies.

For F1, ISO/IEC 4213:—, 6.2.5 applies.

For Jaccard Index, ISO/IEC 4213:—, 6.5.4 applies.

6.3 Absolute Relative Error (AbsRel or REL)

Absolute Relative Error normalizes the error for each pixel compared to the ground truth. In contrast to RMSE (6.29), this metric is less sensitive to large absolute errors at long range. Absolute Relative Error score shall be computed according to Formula (1):

$$\mathrm{AbsRel} = \frac{1}{N} \sum_{i=1}^{N} \frac{\lvert y_i - \hat{y}_i \rvert}{y_i} \tag{1}$$

where $y_i$ is the ground truth value, $\hat{y}_i$ is the predicted value at pixel $i$, and $N$ is the number of pixels. Lower values of AbsRel represent more accurate predictions. This metric is appropriate where the tolerance for variations is higher at far ranges than at close ranges.
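The computation in Formula (1) can be sketched as follows. This is an illustrative example only: the function name `abs_rel` is hypothetical, and skipping pixels whose ground truth is not strictly positive is an assumed validity rule, not one stated in this clause.

```python
def abs_rel(ground_truth, predicted):
    """Mean of |y_i - y_hat_i| / y_i over valid pixels (flat sequences of floats).

    Assumption: pixels with ground truth <= 0 are treated as invalid and skipped,
    which also avoids division by zero.
    """
    if len(ground_truth) != len(predicted):
        raise ValueError("inputs must have the same length")

    total = 0.0
    valid = 0
    for y, y_hat in zip(ground_truth, predicted):
        if y <= 0:
            continue
        total += abs(y - y_hat) / y
        valid += 1

    if valid == 0:
        raise ValueError("no valid ground-truth pixels")
    return total / valid


# Two pixels: errors of 1.0 at depth 2.0 and 1.0 at depth 4.0.
score = abs_rel([2.0, 4.0], [1.0, 5.0])  # (0.5 + 0.25) / 2
```

The same absolute error of 1.0 contributes 0.5 at depth 2.0 but only 0.25 at depth 4.0, which is the range-dependent tolerance described above.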

6.4 Absolute Trajectory Error (ATE)

The metric reflects the correctness of an estimated trajectory relative to a reference trajectory. The metric is calculated by first aligning the estimated trajectory to the reference trajectory, thereby minimizing the quadratic position error between corresponding trajectory points. The ATE is then defined as the quadratic mean of the Euclidean distances between the adjusted estimated positions and the reference positions in dependence of time and/or space.

Absolute Trajectory Error score shall be computed according to Formula (2):

$$\mathrm{ATE} = \sqrt{\frac{1}{N} \sum_{i=1}^{N} \left\lVert p_i - \left( R \hat{p}_i + t \right) \right\rVert^2} \tag{2}$$

Where:

— $N$ is the total number of trajectory points (e.g. camera poses, 2D or 3D positions).

— $p_i$ is the Ground Truth position of the ith camera or object, expressed as a 2D or 3D vector.

— $\hat{p}_i$ is the predicted position of the ith camera or object, before alignment.

— $R \in SO(n)$ is the rotation matrix, $SO(n)$ is the n-D rotation group, and $t \in \mathbb{R}^n$ is the n-D translation vector, where $n \in \{2, 3\}$, both obtained from rigid-body alignment (e.g. via Horn’s method or Umeyama algorithm). These align the predicted trajectory to the ground truth to remove global drift.
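The following sketch illustrates Formula (2) for the 2D case only, where the least-squares rotation has a closed form and no matrix library is needed. It is not Horn's method or the Umeyama algorithm as such, but a simplified rigid alignment (rotation and translation, no scale) under the assumption that points are already in one-to-one correspondence. The function name is hypothetical.

```python
import math


def absolute_trajectory_error_2d(ground_truth, predicted):
    """ATE for 2D trajectories given as equal-length lists of (x, y) tuples."""
    if len(ground_truth) != len(predicted) or not ground_truth:
        raise ValueError("trajectories must be non-empty and of equal length")
    n = len(ground_truth)

    # Centroids of both trajectories.
    gcx = sum(x for x, _ in ground_truth) / n
    gcy = sum(y for _, y in ground_truth) / n
    pcx = sum(x for x, _ in predicted) / n
    pcy = sum(y for _, y in predicted) / n

    # Closed-form least-squares rotation angle on centred points.
    a = b = 0.0
    for (gx, gy), (px, py) in zip(ground_truth, predicted):
        gx, gy, px, py = gx - gcx, gy - gcy, px - pcx, py - pcy
        a += px * gx + py * gy
        b += px * gy - py * gx
    theta = math.atan2(b, a)
    cos_t, sin_t = math.cos(theta), math.sin(theta)

    # Translation that maps the rotated predicted centroid onto the GT centroid.
    tx = gcx - (cos_t * pcx - sin_t * pcy)
    ty = gcy - (sin_t * pcx + cos_t * pcy)

    # Quadratic mean of the residual distances after alignment.
    squared = 0.0
    for (gx, gy), (px, py) in zip(ground_truth, predicted):
        ax = cos_t * px - sin_t * py + tx
        ay = sin_t * px + cos_t * py + ty
        squared += (gx - ax) ** 2 + (gy - ay) ** 2
    return math.sqrt(squared / n)


# The prediction is the ground truth rotated by 90 degrees and shifted by (5, -3),
# so the residual after alignment should vanish up to rounding.
gt = [(0.0, 0.0), (2.0, 0.0), (2.0, 1.0), (0.0, 3.0)]
pred = [(5.0, -3.0), (5.0, -1.0), (4.0, -1.0), (2.0, -3.0)]
ate = absolute_trajectory_error_2d(gt, pred)
```

For 3D trajectories the rotation is usually obtained from a singular value decomposition, which is outside the scope of this sketch.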

6.5 Association Accuracy (AssA)

Association Accuracy (AssA) focuses on assessing how well an object tracking system correctly

associates the same identities, given the known associations and identities of a sequence. This is done

by comparing the alignment between the detected objects and their associated track to the matching

ground-truth tracks.

AssA score shall be computed according to the following Formula (3):

$$\mathrm{AssA} = \frac{1}{\lvert TP \rvert} \sum_{c \in TP} \frac{\lvert TPA(c) \rvert}{\lvert TPA(c) \rvert + \lvert FNA(c) \rvert + \lvert FPA(c) \rvert} \tag{3}$$

Where $TP$ is the set of matched ground-truth and predicted trajectories (each match denoted as $c$), $TPA$ denotes objects correctly associated with their respective ground-truth trajectory, $FNA$ denotes ground-truth tracks that exist but fail to maintain the correct identity, and $FPA$ denotes trajectories where a predicted trajectory maintains the wrong ID.

AssA focuses on evaluating the accuracy of object-to-track associations across the entire sequence. It measures how well a tracker preserves identity links over time, accounting for both switches and fragmentation. AssA's sensitivity to track fragmentation makes it particularly applicable in scenarios where maintaining consistent trajectories is crucial. By emphasizing long-term association quality rather than frame-level detection, AssA complements detection-focused metrics and provides a more comprehensive evaluation of a system’s ability to maintain consistent object identities across time, especially in scenarios involving occlusions.
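Assuming the per-match association counts have already been obtained from a tracker evaluation, the averaging in Formula (3) can be sketched as below. The input format (one `(tpa, fna, fpa)` tuple of counts per match) and the NaN result for an empty match set are assumptions made for this example.

```python
def association_accuracy(match_counts):
    """Average association ratio over all matches.

    match_counts: iterable of (tpa, fna, fpa) integer counts, one tuple per
    matched ground-truth/prediction pair (hypothetical input format).
    """
    match_counts = list(match_counts)
    if not match_counts:
        # Assumed policy: report an undefined score as NaN.
        return float("nan")

    total = 0.0
    for tpa, fna, fpa in match_counts:
        denominator = tpa + fna + fpa
        if denominator == 0:
            raise ValueError("a match must have at least one associated count")
        total += tpa / denominator
    return total / len(match_counts)


# One match with a perfectly consistent identity, one with half of its
# associations lost to identity errors.
assa = association_accuracy([(4, 0, 0), (2, 1, 1)])  # (1.0 + 0.5) / 2
```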

6.6 Average Precision (AP)

The Average Precision (AP) is the weighted sum of precision values at multiple thresholds, with the weight for the $n$th precision value being the increase in recall from the $(n-1)$th threshold to the $n$th threshold. AP summarizes the Precision-Recall curve but is distinct from calculating the area under the Precision-Recall curve using e.g. the trapezoid rule.

Average precision is calculated as:

$$\mathrm{AP} = \sum_{n} \left( R_n - R_{n-1} \right) P_n \tag{4}$$

where $R_n$ and $P_n$ are the recall and precision at the $n$th threshold respectively.

n n n

NOTE This metric could potentially be included in the new version of ISO/IEC 4213.
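The weighted sum in Formula (4) can be illustrated with a minimal Python sketch. The function name `average_precision` is hypothetical, and the sketch assumes that the recall before the first threshold is 0 and that recall values are supplied in non-decreasing order. The document fixes neither convention.

```python
def average_precision(recalls, precisions):
    """Weighted sum of precisions, AP = sum_n (R_n - R_{n-1}) * P_n.

    Assumption (not stated in the text): R_0 = 0 and recalls are
    ordered so that they never decrease from one threshold to the next.
    """
    if len(recalls) != len(precisions):
        raise ValueError("recalls and precisions must have the same length")
    ap = 0.0
    prev_recall = 0.0
    for recall, precision in zip(recalls, precisions):
        if recall < prev_recall:
            raise ValueError("recalls must be non-decreasing")
        # The weight of each precision value is the increase in recall.
        ap += (recall - prev_recall) * precision
        prev_recall = recall
    return ap
```

A threshold that does not increase recall contributes nothing to the sum, which is one reason the result generally differs from a trapezoidal area under the Precision-Recall curve.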

6.7 Chamfer Distance

Chamfer distance measures the average squared distance from each point in one point cloud to its nearest neighbour in the other point cloud, and vice versa. It is calculated using the following Formula (5):

$$\mathrm{CD}(A,B) = \frac{1}{|A|} \sum_{a \in A} \min_{b \in B} \lVert a - b \rVert^2 + \frac{1}{|B|} \sum_{b \in B} \min_{a \in A} \lVert a - b \rVert^2 \tag{5}$$

where $A$ and $B$ are two sets of points with their cardinalities $|A|$ and $|B|$, respectively.

Parameters:

— $A$: Set of points from the reconstructed model.

— $B$: Set of points from the reference model.

— $|A|$, $|B|$: Number of points in $A$ and $B$, respectively.

Calculation:

— For each point $a$ in $A$, find the closest point $b$ in $B$ and compute $\lVert a - b \rVert^2$.

— For each point $b$ in $B$, find the closest point $a$ in $A$ and compute $\lVert a - b \rVert^2$.

— Chamfer Distance shall be computed according to Formula (5).
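The calculation steps above can be sketched in a few lines of Python. This is a brute-force illustration under stated assumptions: points are given as coordinate tuples, the norm is Euclidean, and the name `chamfer_distance` is hypothetical. Large point clouds would normally call for a spatial index instead of the exhaustive nearest-neighbour search used here.

```python
import math


def chamfer_distance(points_a, points_b):
    """Symmetric Chamfer distance between two non-empty point sets.

    Brute-force sketch: for every point, the nearest neighbour in the
    other set is found by exhaustive search (Euclidean norm assumed).
    """
    if not points_a or not points_b:
        raise ValueError("both point sets must be non-empty")

    def mean_min_squared(source, target):
        # Average, over the source points, of the squared distance
        # to the closest target point.
        total = sum(min(math.dist(p, q) ** 2 for q in target) for p in source)
        return total / len(source)

    return mean_min_squared(points_a, points_b) + mean_min_squared(points_b, points_a)
```

For example, with a reconstructed set containing the points (0, 0, 0) and (1, 0, 0) and a reference set containing only (0, 0, 0), the first term is (0 + 1) / 2 and the second term is 0.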

6.8 Detection Accuracy (DetA)

Detection Accuracy (DetA) focuses on evaluating how well the tracking system localizes objects,

regardless of their identity assignment. This is done by comparing the detected objects to all of the

known ground-truth objects.

The Detection Accuracy score shall be computed according to Formula (6):

$$\mathrm{DetA}_{\alpha} = \frac{|TP|}{|TP| + |FN| + |FP|} \tag{6}$$

Where $\alpha$ denotes the IoU (6.16) matching threshold, $|TP|$ denotes the number of detections that match the ground truth, $|FN|$ denotes the number of objects that are present in the ground truth but were missed, and $|FP|$ denotes the number of objects that were detected but do not match any ground-truth object.

The IoU threshold $\alpha$ determines whether a predicted bounding box has sufficient overlap with a given ground-truth bounding box to be considered a match. Therefore, the selected threshold (typically $\alpha = 0.5$) determines how accurately the predicted bounding box must fit the object.

DetA is particularly useful for scenarios where reliable object localization is crucial (e.g. detecting all

pedestrians in autonomous driving). By isolating detection accuracy from association performance,

DetA provides a complementary perspective on tracker performance.
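As a rough illustration of the threshold test and of Formula (6), the Python sketch below computes the IoU of two axis-aligned boxes, checks it against α, and evaluates DetA from already-counted detections. The box layout `(x_min, y_min, x_max, y_max)`, the inclusive comparison `IoU >= alpha`, and the function names are assumptions made for this example. The one-to-one assignment of predictions to ground-truth objects is not shown.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_match(pred_box, gt_box, alpha=0.5):
    # Assumption: a match requires IoU >= alpha (inclusive threshold).
    return iou(pred_box, gt_box) >= alpha


def detection_accuracy(num_tp, num_fn, num_fp):
    """DetA = |TP| / (|TP| + |FN| + |FP|); returns 0.0 when nothing is counted."""
    denom = num_tp + num_fn + num_fp
    return num_tp / denom if denom else 0.0
```

Two 2 × 2 boxes offset horizontally by one unit overlap with an IoU of 1/3, so they would not count as a match at α = 0.5.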

6.9 Dice Similarity Coefficient

The Dice Similarity Coefficient (DSC), also known as the Dice-Sørensen Coefficient or Sørensen-Dice Index, is an overlap metric similar to the Jaccard Index. Given two sets $A$ and $B$, DSC is defined as:

$$\mathrm{DSC} = \frac{2\,|A \cap B|}{|A| + |B|} \tag{7}$$
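A minimal Python sketch of Formula (7) follows, treating each segmentation as a set of pixel coordinates. The function name `dice_coefficient` is hypothetical, and returning 1.0 when both sets are empty is a convention assumed here, since the formula itself is undefined in that case.

```python
def dice_coefficient(set_a, set_b):
    """DSC = 2|A ∩ B| / (|A| + |B|) for two collections of hashable items."""
    a, b = set(set_a), set(set_b)
    if not a and not b:
        # Assumed convention: two empty sets are treated as a perfect match.
        return 1.0
    return 2 * len(a & b) / (len(a) + len(b))
```

For two masks of three pixels each that share two pixels, the result is 2 · 2 / (3 + 3) = 2/3, while the Jaccard Index of the same masks is 2/4.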

6.10 Expected Calibration Error (ECE)

Expected Calibration Error (ECE) is a quantitative metric that measures the difference between a

model's confidence and its accuracy. It is calculated by dividing the model's predictions into a number

of equally-spaced bins based on confidence and then computing a weighted average of the absolute

difference between the accuracy and confidence for each bin.

The ECE score shall be computed according to Formula (8):

$$\mathrm{ECE} = \sum_{m=1}^{M} \frac{|B_m|}{n} \left| \mathrm{acc}(B_m) - \mathrm{conf}(B_m) \right| \tag{8}$$

Where:

— $M$ is the number of bins.

— $|B_m|$ is the number of predictions in bin $m$.

— $n$ is the total number of data points.

— $\mathrm{acc}(B_m)$ is the accuracy in bin $m$.

— $\mathrm{conf}(B_m)$ is the average confidence in bin $m$.

NOTE The number of bins $M$ used to calculate this metric shall be stated when using this metric.
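The binning and weighted average of Formula (8) can be sketched as follows. The function name `expected_calibration_error` is hypothetical. The sketch assumes confidences in [0, 1] and half-open equal-width bins [m/M, (m+1)/M), with a confidence of exactly 1.0 placed in the last bin. The text does not prescribe how bin edges are handled.

```python
def expected_calibration_error(confidences, correct, num_bins=10):
    """ECE with equal-width confidence bins over [0, 1].

    confidences: predicted confidence per sample, each in [0, 1]
    correct:     truthy where the corresponding prediction was right
    """
    n = len(confidences)
    if n == 0 or n != len(correct):
        raise ValueError("inputs must be non-empty and of equal length")

    conf_sum = [0.0] * num_bins
    hits = [0] * num_bins
    count = [0] * num_bins
    for conf, is_correct in zip(confidences, correct):
        # Assumed edge convention: bin m covers [m/M, (m+1)/M).
        m = min(int(conf * num_bins), num_bins - 1)
        conf_sum[m] += conf
        hits[m] += 1 if is_correct else 0
        count[m] += 1

    ece = 0.0
    for m in range(num_bins):
        if count[m] == 0:
            continue  # empty bins contribute nothing to the sum
        accuracy = hits[m] / count[m]
        avg_confidence = conf_sum[m] / count[m]
        ece += (count[m] / n) * abs(accuracy - avg_confidence)
    return ece
```

Because the result depends on `num_bins`, reporting the value of M alongside the score keeps results comparable.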

6.11 Fréchet Inception Distance (FID)

Fréchet Inception Distance (FID) is a metric used to assess the difference between two distributions. It is commonly used for several tasks such as image generation quality assessment and domain gap evaluation. It is a combination of the Fréchet distance and the Inception V3 model [2].

The FID shall be computed according to Formula (9):

$$\mathrm{FID} = \lVert \mu_1 - \mu_2 \rVert_2^2 + \mathrm{Tr}\left( \Sigma_1 + \Sigma_2 - 2 \left( \Sigma_1 \Sigma_2 \right)^{1/2} \right) \tag{9}$$

Where $\mu_1$ and $\Sigma_1$ are the mean vector and covariance matrix of the first multivariate normal feature distribution, $\mu_2$ and $\Sigma_2$ are the mean vector and covariance matrix of the second multivariate normal feature distribution, and $\mathrm{Tr}$ denotes the matrix trace.

The computation is divided into two steps:

a) Feature extraction based on a pre-trained model (often Inception V3), where for each image a vector of features is extracted from one of its layers. This extraction generally occurs at the 3rd pool layer.

b) Fréchet distance computation, based on the distributions of the features extracted in (a), following Formula (9).

NOTE 1 The FID metric is usually used with image inputs for the evaluation of image generation models. Variants for video and audio inputs, used for video and audio generation models, have also been developed under the names FVD (Fréchet Video Distance) and FAD (Fréchet Audio Distance), respectively. These are adaptations of FID that modify only the feature extraction model used in (a).

FID is typically used for evaluating image generation, e.g. during the training of models with GAN architectures, and for monitoring tasks such as domain gap assessment.

The interpretation of FID score between two distributions:

— A lower FID score indicates a higher degree of similarity between the two distributions. If FID = 0, the two distributions are identical.

— As the discrepancy between the two distributions increases, the FID score also increases.

NOTE 2 In case of generative models, such as GAN, the two distributions are extracted from real and generated

images, respectively.

NOTE 3 In case of domain gap assessment, the two distributions are extracted from the source dataset (usually the training

dataset) and the target dataset (usually the operational dataset), respectively.
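Step (b) can be sketched with NumPy, assuming the feature vectors from step (a) are already available as two arrays of shape (samples, features). The function name `frechet_distance` is hypothetical. The sketch uses the standard closed form with a squared mean difference and a factor of 2 on the matrix square root term. It uses the unbiased sample covariance (the `np.cov` default) and evaluates the trace of the matrix square root through the eigenvalues of Σ₁Σ₂, which are real and non-negative for covariance matrices. These are implementation choices for illustration, not requirements taken from the text.

```python
import numpy as np


def frechet_distance(features_1, features_2):
    """Fréchet distance between Gaussians fitted to two feature sets.

    features_1, features_2: array-likes of shape (num_samples, num_features),
    each with at least two samples. Feature extraction is not shown.
    """
    f1 = np.asarray(features_1, dtype=float)
    f2 = np.asarray(features_2, dtype=float)

    mu1, mu2 = f1.mean(axis=0), f2.mean(axis=0)
    sigma1 = np.atleast_2d(np.cov(f1, rowvar=False))
    sigma2 = np.atleast_2d(np.cov(f2, rowvar=False))

    # Tr((S1 S2)^(1/2)) equals the sum of the square roots of the
    # eigenvalues of S1 S2; tiny negative values from round-off are clipped.
    eigenvalues = np.linalg.eigvals(sigma1 @ sigma2)
    trace_sqrt = np.sqrt(np.clip(eigenvalues.real, 0.0, None)).sum()

    mean_term = float(np.sum((mu1 - mu2) ** 2))
    cov_term = float(np.trace(sigma1) + np.trace(sigma2) - 2.0 * trace_sqrt)
    return mean_term + cov_term
```

Shifting every feature vector of a set by a constant offset leaves the covariance unchanged, so the distance between the original and shifted sets reduces to the squared length of the offset.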

6.12 Hausdorff Distance (HD)

The Hausdorff Distance (HD) $d_H(X,Y)$ is defined over two non-empty subsets $X$ and $Y$ of a metric space $(M,d)$, where $M$ is a set and $d$ is a metric on $M$. HD is defined as:

$$d_H(X,Y) = \max\left\{ \sup_{x \in X} d(x,Y),\ \sup_{y \in Y} d(y,X) \right\} \tag{10}$$

where sup is the supremum operator (also known as the least upper bound), and the distance $d(x,Y)$ between element $x \in X$ and set $Y$ is defined as:

$$d(x,Y) = \inf_{y \in Y} d(x,y) \tag{11}$$

where inf is the infimum operator (also known as the greatest lower bound). Distance $d(y,X)$ is defined similarly to $d(x,Y)$.

NOTE 1 HD is symmetric, i.e. $d_H(X,Y) = d_H(Y,X)$.

NOTE 2 When applied to the points, pixels, or voxels making up the boundaries of regions, HD is also known as Maximum Symmetric Surface Distance (MSSD).

If the HD is a given value then every point in each set is within that distance of some point of the other

set. Thus HD is sensitive to outliers, but is useful for giving a worst-case measure of how far apart two

sets are.
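For finite point sets, the supremum and infimum in Formulas (10) and (11) reduce to a maximum and a minimum, which the following brute-force Python sketch exploits. The Euclidean metric and the function names are assumptions made for this example.

```python
import math


def directed_distance(set_x, set_y):
    """sup over x in X of d(x, Y), with d(x, Y) = min over y in Y of d(x, y)."""
    return max(min(math.dist(x, y) for y in set_y) for x in set_x)


def hausdorff_distance(set_x, set_y):
    """Symmetric Hausdorff distance between two finite, non-empty point sets."""
    if not set_x or not set_y:
        raise ValueError("both sets must be non-empty")
    return max(directed_distance(set_x, set_y), directed_distance(set_y, set_x))
```

With X = {(0, 0), (1, 0)} and Y = {(0, 0), (5, 0)}, the single far point (5, 0) lies 4 units from its nearest neighbour in X and therefore determines the result, which illustrates the sensitivity to outliers.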

6.13 Higher Order Tracking Accuracy (HOTA)

The Higher Order Tracking Accuracy (HOTA) quantifies the overall performance of an object tracking system by assessing how accurately multiple objects are localized and associated across successive video frames. It provides a quantitative measure that combines two key types of errors into a single figure:

a) Detection Errors: the ratio of objects present in the ground truth that were not detected (affecting recall) and the rate at which detections correspond to objects in the ground truth (precision).

b) Association Errors: the ratio of correctly associated objects throughout the entire input sequence to the total number of associations in the entire input sequence.

The HOTA score shall be computed according to Formula (12):

$$\mathrm{HOTA}_{\alpha} = \sqrt{\frac{\sum_{c \in TP} A(c)}{|TP| + |FN| + |FP|}} \tag{12}$$

Where:

— $\alpha$ denotes the localization threshold for accepting a detection as a true positive,

— $|TP|$, $|FN|$ and $|FP|$ denote the numbers of objects that are correctly detected, missed and incorrectly detected, respectively.

— $A(c)$ denotes the association score of $c$, computed according to Formula (13):

$$A(c) = \frac{|TPA(c)|}{|TPA(c)| + |FNA(c)| + |FPA(c)|} \tag{13}$$

Where:

— $c$ denotes a given object id,

— $|TPA(c)|$, $|FNA(c)|$ and $|FPA(c)|$ denote the numbers of correctly associated, missed and incorrectly associated associations, respectively, for all instances of a given object.

NOTE This is computed for the entire input sequence.

HOTA leverages the Hungarian algorithm to perform matching which requires a manually selected

threshold for the maximum allowed cost to accept a given association. The value of this threshold shall

be stated when reporting this metric.
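Once matching has been performed, the remaining arithmetic of Formulas (12) and (13) is short. The Python sketch below assumes the commonly published form of HOTA, which takes the square root of the ratio in Formula (12), and assumes that the per-detection association counts are already available. The function names and the input layout (one `(TPA, FNA, FPA)` count triple per true positive) are hypothetical, and the Hungarian matching step is not shown.

```python
import math


def association_score(num_tpa, num_fna, num_fpa):
    """A(c) = |TPA(c)| / (|TPA(c)| + |FNA(c)| + |FPA(c)|).

    Assumption: num_tpa >= 1, since a true positive c is itself
    counted among its own correct associations.
    """
    return num_tpa / (num_tpa + num_fna + num_fpa)


def hota_alpha(association_counts, num_fn, num_fp):
    """HOTA at one localization threshold.

    association_counts: one (|TPA(c)|, |FNA(c)|, |FPA(c)|) triple per true positive c
    num_fn, num_fp:     numbers of missed and spurious detections
    """
    num_tp = len(association_counts)
    denom = num_tp + num_fn + num_fp
    if denom == 0:
        return 0.0
    total = sum(association_score(*counts) for counts in association_counts)
    return math.sqrt(total / denom)
```

Under these definitions the squared HOTA value equals the product of DetA (Formula (6)) and AssA (Formula (3)) at the same threshold, which can serve as a sanity check on an implementation.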

6.14 Identification F1 (IDF1)

Identification F1 (IDF1) focuses on assessing how long a given tracker correctly identifies an object. Rather than accumulating frame-by-frame errors, it calculates a joint metric over all frames at once, and therefore focuses heavily on consistent identity association throughout the sequence. As a result, this metric is less affected by the number of objects in a given video. It is computed from Identity Precision (IDP) and Identity Recall (IDR), where IDP is the fraction of detections that are correctly associated, and IDR is the fraction of ground-truth objects that are correctly detected and associated.

As it is identity-focused, the two types of errors in tracking systems that IDF1 measures are:

a) Improper associations: Objects that are detected and associated in a manner that does not match

the ground truth.

b) Missed trajectories: Trajectories that have not been tracked but are present in the ground truth.

The IDF1 score shall be computed according to Formula (14):

$$\mathrm{IDF1} = \frac{\mathrm{IDTP}}{\mathrm{IDTP} + 0.5\,\mathrm{IDFN} + 0.5\,\mathrm{IDFP}} \tag{14}$$

where,

— IDTP denotes the number of objects that are correctly detected and associated,

— IDFP denotes the number of objects that have been associated but are not present in the ground truth,

— IDFN denotes the number of objects that are present in the ground truth but have not been detected and associated.

Formulas of IDP and IDR are given below:

$$\mathrm{IDP} = \frac{\mathrm{IDTP}}{\mathrm{IDTP} + \mathrm{IDFP}} \tag{15}$$

$$\mathrm{IDR} = \frac{\mathrm{IDTP}}{\mathrm{IDTP} + \mathrm{IDFN}} \tag{16}$$

IDF1 focuses on evaluating the consistency of object identities by measuring how well a tracker

maintains correct ID assignments throughout a sequence. This makes it particularly useful in MOT

applications where identity continuity is crucial (e.g. long-term tracking of pedestrians in autonomous

driving). By emphasizing identity preservation, IDF1 complements other metrics and provides insight

into the association performance of tracking systems.

The computation of IDF1 score can be affected by the following technical characteristics:

— Sensitive to detection accuracy: As false negatives (FN) depend on accurate detection, an improvement in detection accuracy directly improves the measured performance of the tracker. Likewise, improper detections reduce the measured performance of the system.

— Not ideal for continuous evaluation: The association score is calculated with respect to the entire input sequence, thus it is ill-suited as an “online” metric for continuous evaluation.

NOTE IDF1 is therefore a metric that combines IDP (Identity Precision) and IDR (Identity Recall).
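Formulas (14) to (16) can be evaluated together from the three identity counts, as in the following Python sketch. The function name `identity_scores` and the choice to return 0.0 for an empty denominator are assumptions made for this example. Establishing the identity-level matching that yields IDTP, IDFP and IDFN is not shown.

```python
def identity_scores(idtp, idfp, idfn):
    """Return (IDP, IDR, IDF1) from identity-level counts.

    Assumed convention: a score with an empty denominator is reported as 0.0.
    """
    idp = idtp / (idtp + idfp) if (idtp + idfp) else 0.0
    idr = idtp / (idtp + idfn) if (idtp + idfn) else 0.0
    denom = idtp + 0.5 * idfn + 0.5 * idfp
    idf1 = idtp / denom if denom else 0.0
    return idp, idr, idf1
```

With IDTP = 80, IDFP = 20 and IDFN = 10, IDP is 0.8, IDR is 8/9 and IDF1 is 80/95, which coincides with the harmonic mean of IDP and IDR.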

6.15 Inception Score (IS)

Inception Score (IS) is a score computed to assess the quality and diversity of generated images.

The method is based on a pre-trained neural network model (often Inception V3). The label conditional distribution $p(y \mid x)$, where $x$ is the input (image) and $y$ is the label of the image, and the marginal distribution $p(y)$ are compared by using the exponential of the Kullback-Leibler divergence (ISO/IEC TS 4213:2022 [3], 6.2.7) as follows.

Inception Score shall be computed according to Formula (17):

IS = exp E D p y x

...
